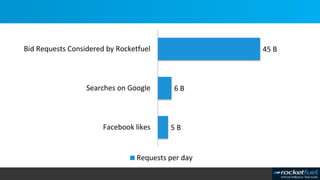

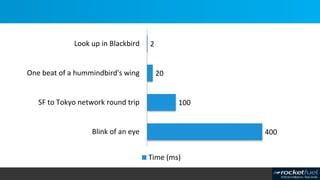

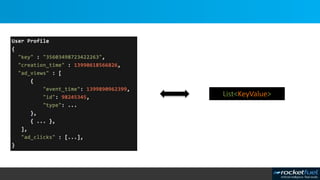

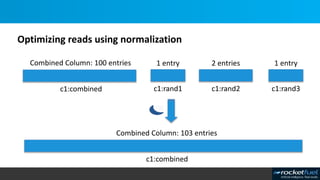

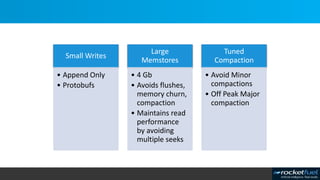

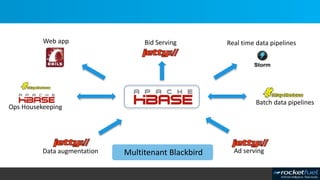

Blackbird is Rocket Fuel's real-time bidding platform. It handles billions of rows and responds to bid requests within milliseconds. It uses HBase as its data store and achieves high performance through optimizations such as protocol buffers, caching, append-only writes, and off-peak compactions. For reliability, it relies on aggressive monitoring, dynamic blacklisting, and client-side failure handling that does not depend on a proxy server.

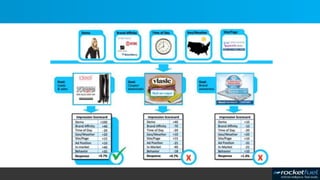

![Slide figure: dollar values (for example $0.56, $2.42, $2.11) shown against the factors Site/Page, Geo/Weather, Time of Day, Brand Affinity, and User](https://image.slidesharecdn.com/casestudies-session2-140616154221-phpapp02/85/Case-studies-session-2-3-320.jpg)

![Slide: client behavior in the absence of a proxy](https://image.slidesharecdn.com/casestudies-session2-140616154221-phpapp02/85/Case-studies-session-2-40-320.jpg)

- In absence of proxy: ‘The client is part of the cluster’ [1]
- Client must report availability error to calling application thread in short time span
- Follow circuit breaker pattern for read calls (Anecdote)
- ‘pseudo’ puts (local file) for write calls

[1] Blog post from Lars Hofhansl: http://hadoop-hbase.blogspot.com/2012/09/hbase-client-timeouts.html
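The two client-side ideas on this slide, a circuit breaker for reads and 'pseudo' puts to a local file for writes, can be illustrated with a minimal sketch. This is not Blackbird's implementation and it does not use the HBase client API. `CircuitBreaker`, `put_with_fallback`, `replay_spool`, the thresholds, and the JSON-lines spool format are all hypothetical names and choices made for illustration, and the read and write functions are passed in as plain callables.

```python
import json
import time
from pathlib import Path


class CircuitOpenError(Exception):
    """Raised at once while the breaker is open, instead of waiting on a timeout."""


class CircuitBreaker:
    """Hypothetical breaker: opens after repeated failures, retries after a cool-down."""

    def __init__(self, max_failures=3, reset_after=5.0, clock=time.monotonic):
        self.max_failures = max_failures  # consecutive failures before opening
        self.reset_after = reset_after    # seconds to wait before a trial call
        self.clock = clock
        self.failures = 0
        self.opened_at = None             # None means the circuit is closed

    def call(self, fn, *args):
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after:
                # Fail fast so the calling thread learns about the outage quickly.
                raise CircuitOpenError("store marked unavailable; failing fast")
            # Cool-down elapsed: fall through and allow one trial call.
        try:
            result = fn(*args)
        except Exception:
            self.failures += 1
            # A failed trial call re-opens the circuit and restarts the cool-down.
            if self.opened_at is not None or self.failures >= self.max_failures:
                self.opened_at = self.clock()
            raise
        # Success closes the circuit and clears the failure count.
        self.failures = 0
        self.opened_at = None
        return result


def put_with_fallback(put_fn, row_key, values, spool_path):
    """Try the real write; on failure append the record to a local spool file."""
    try:
        put_fn(row_key, values)
        return True
    except Exception:
        with open(spool_path, "a", encoding="utf-8") as spool:
            spool.write(json.dumps({"row": row_key, "values": values}) + "\n")
        return False


def replay_spool(put_fn, spool_path):
    """Re-apply spooled writes once the store is reachable; returns the count.

    If put_fn raises midway, the file is left in place, so a later retry may
    re-apply some records; this sketch assumes puts are idempotent.
    """
    path = Path(spool_path)
    if not path.exists():
        return 0
    lines = path.read_text(encoding="utf-8").splitlines()
    records = [json.loads(line) for line in lines if line]
    for record in records:
        put_fn(record["row"], record["values"])
    path.unlink()
    return len(records)
```

In this sketch a read would be wrapped as `breaker.call(read_fn, key)`, so that once the breaker opens, callers get a `CircuitOpenError` immediately rather than blocking on client timeouts. Writes that fail are appended to the spool file and replayed later with `replay_spool`.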