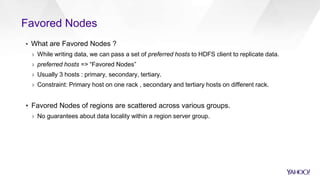

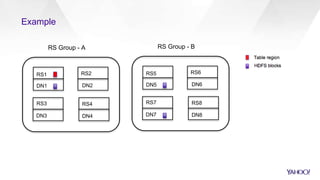

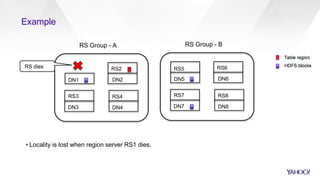

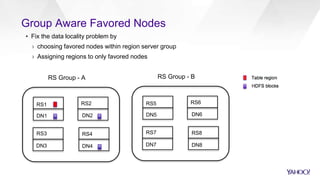

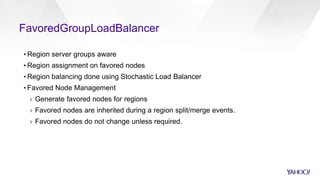

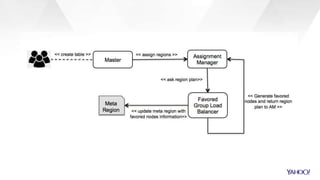

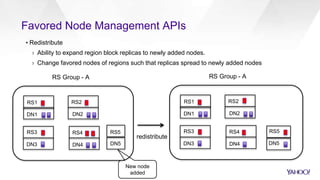

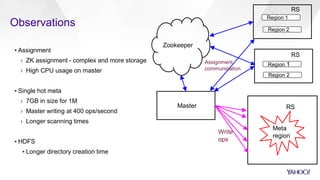

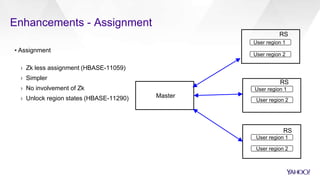

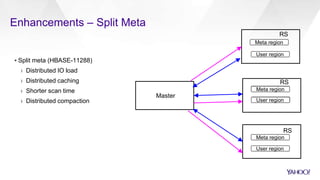

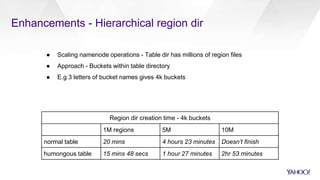

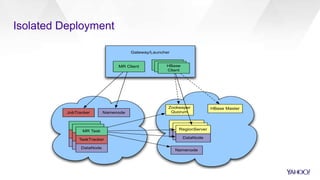

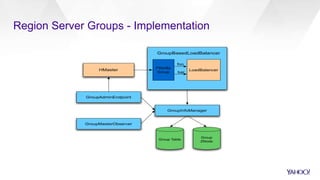

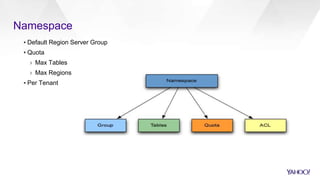

The document discusses the architecture and scalability of HBase at Yahoo!, focusing on scaling to 1 million regions, multi-tenancy, and resource isolation through region server groups. It details favored nodes for data locality, replication strategies, and enhancements to improve performance and operability. Additionally, it presents operational experiences at scale, including challenges with metadata and improvements in region assignment and directory management.

![Replication + Group

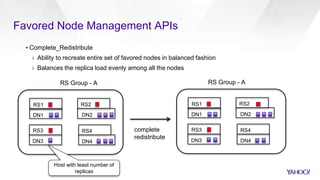

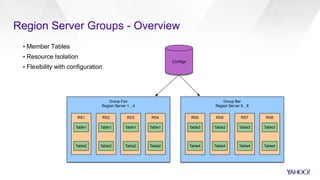

▪ Region Server Group Aware

▪ Rule based API

› Source: {namespace},[Table], [CF]

› Slave: {Peer}

› Effective Time

Group Foo

Group Bar

Table1

Table2

Group Foo

Table1

Table2](https://image.slidesharecdn.com/operations-session4-150605170217-lva1-app6892/85/HBaseCon-2015-Multitenancy-in-HBase-11-320.jpg)
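The slide only lists the fields of a replication rule (a source made of a required namespace plus an optional table and column family, a slave peer, and an effective time), so the following is a minimal sketch of how such a rule might be modelled and matched, not the API presented in the talk. The names `ReplicationRule`, `matches`, and `peers_for_edit` are hypothetical, and the reading of "Effective Time" as a lower bound on the edit timestamp is an assumption.

```python
from dataclasses import dataclass
from typing import Iterable, List, Optional


@dataclass(frozen=True)
class ReplicationRule:
    """Hypothetical model of one rule: source -> slave peer, from a given time."""
    namespace: str                       # required part of the source
    peer: str                            # slave peer that receives the edits
    effective_time: int                  # assumption: epoch millis lower bound
    table: Optional[str] = None          # optional: None means every table
    column_family: Optional[str] = None  # optional: None means every CF

    def matches(self, namespace: str, table: str,
                column_family: str, edit_ts: int) -> bool:
        # The namespace must always match; table and CF only when specified.
        if namespace != self.namespace:
            return False
        if self.table is not None and table != self.table:
            return False
        if self.column_family is not None and column_family != self.column_family:
            return False
        # Assumed semantics: the rule applies to edits at or after its effective time.
        return edit_ts >= self.effective_time


def peers_for_edit(rules: Iterable[ReplicationRule], namespace: str, table: str,
                   column_family: str, edit_ts: int) -> List[str]:
    """Return the sorted, de-duplicated peers whose rules cover this edit."""
    return sorted({r.peer for r in rules
                   if r.matches(namespace, table, column_family, edit_ts)})
```

Under these assumptions, a namespace-wide rule and a narrower table and column family rule can coexist, and an edit is shipped to every peer whose rule covers it.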