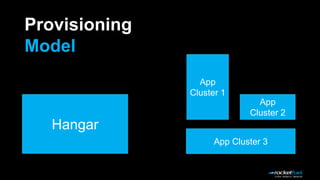

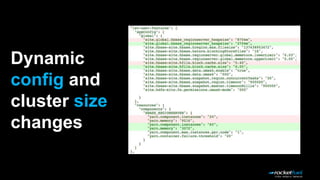

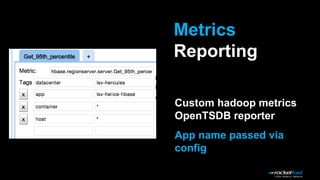

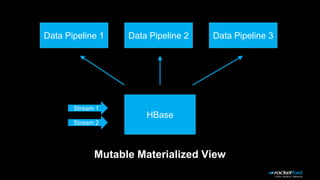

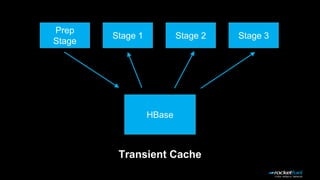

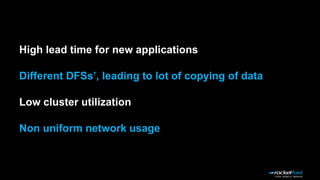

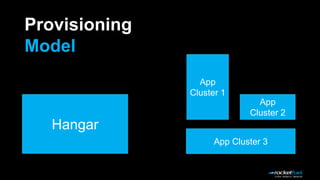

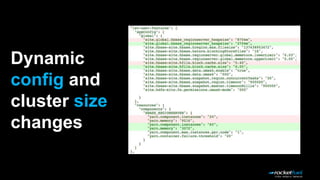

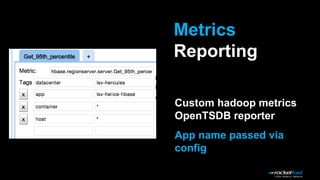

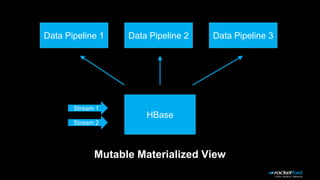

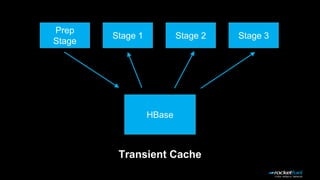

The document discusses the implementation of Deathstar, a dynamic, multi-tenant HBase framework running on YARN, which addresses challenges in capacity planning, application customization, and resource utilization. It highlights a provisioning model that supports both dynamic and static clusters, making HBase easier to upgrade, scale, and isolate within a common HDFS layer. Remaining challenges, such as logging and scheduling constraints, are acknowledged; debugging is ongoing and potential solutions are proposed.