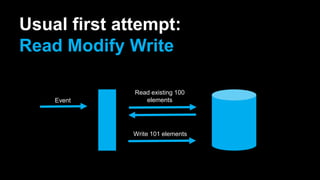

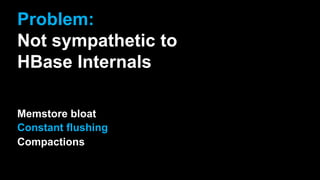

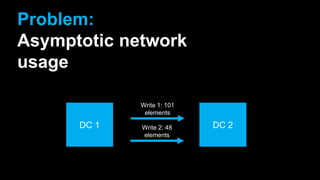

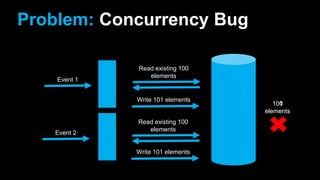

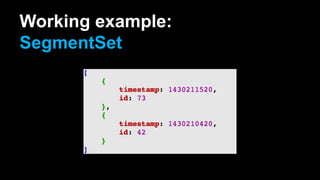

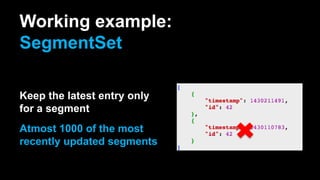

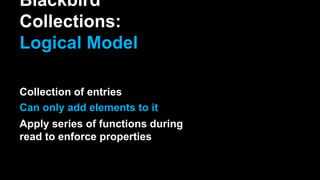

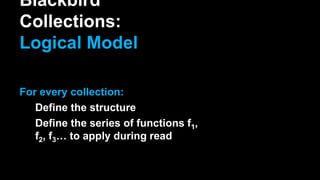

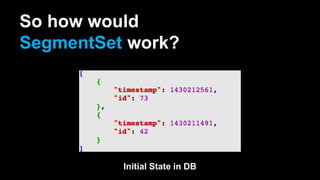

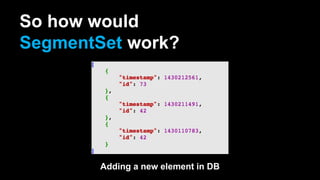

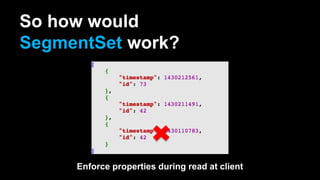

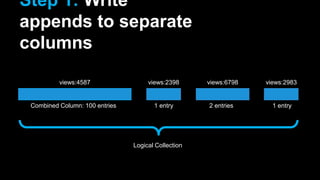

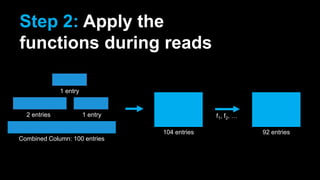

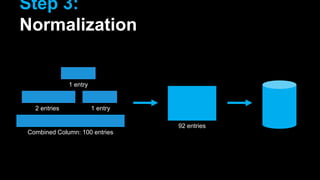

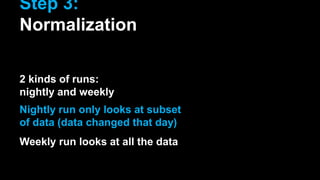

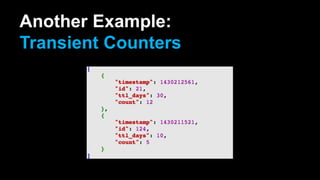

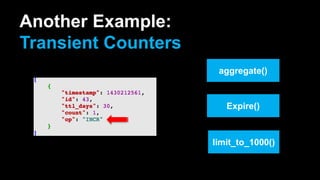

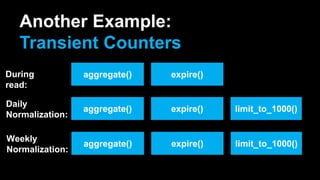

The document discusses the implementation of Blackbird collections for in-situ stream processing within HBase at Rocketfuel Inc., focusing on the challenges encountered, such as memstore bloat and concurrency bugs. It outlines a logical model for collections in which elements are added, and functions are applied to those elements during reads and during normalization to maintain data integrity. It also presents examples of collection types, including segment sets and transient counters, along with how each one operates and the performance optimizations applied to it.
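As a rough illustration of the logical model described above, the sketch below treats a collection as a normalized state plus a list of pending, unmerged elements. Writes only append. A fold function merges elements into the state both at read time (without changing stored data) and during normalization (which compacts pending elements). This is a hypothetical sketch, not Blackbird's actual API: the names `Collection`, `fold`, `normalize`, `segment_set`, and `transient_counter` are illustrative assumptions. The transient-counter semantics used here (keep only events within a time-to-live of the newest event) are also an assumption, since the slides' exact definition is not reproduced in this text.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, List


@dataclass
class Collection:
    """Hypothetical append-only collection (illustrative, not Blackbird's API).

    Writers only call add(); a fold function merges elements into a compact
    state, both when reading and when normalize() compacts pending elements.
    """
    state: Any                        # normalized (compacted) state
    fold: Callable[[Any, Any], Any]   # merges one element into a state; must not mutate its input
    pending: List[Any] = field(default_factory=list)

    def add(self, element: Any) -> None:
        # A write is a blind append: no read-modify-write of the current state.
        self.pending.append(element)

    def read(self) -> Any:
        # Apply the fold over the normalized state plus unmerged elements,
        # leaving stored data unchanged.
        result = self.state
        for element in self.pending:
            result = self.fold(result, element)
        return result

    def normalize(self) -> None:
        # Compact pending elements into the state to bound storage growth.
        self.state = self.read()
        self.pending.clear()


def segment_set() -> Collection:
    # Hypothetical segment set: the state is a frozenset of segment IDs,
    # so duplicate additions are harmless.
    return Collection(state=frozenset(), fold=lambda s, seg: s | {seg})


def transient_counter(ttl: int) -> Collection:
    # Hypothetical transient counter (assumed semantics): elements are event
    # timestamps, and only events newer than (newest - ttl) are retained.
    def fold(timestamps: tuple, t: int) -> tuple:
        merged = timestamps + (t,)
        newest = max(merged)
        return tuple(x for x in merged if x > newest - ttl)
    return Collection(state=(), fold=fold)


if __name__ == "__main__":
    segs = segment_set()
    for seg in (7, 3, 7):
        segs.add(seg)
    print(sorted(segs.read()))   # [3, 7]
    segs.normalize()
    print(segs.pending)          # []

    hits = transient_counter(ttl=60)
    for t in (0, 30, 70):
        hits.add(t)
    print(len(hits.read()))      # 2 (events at 30 and 70 fall within the window)
```

Because `add` never reads existing data, concurrent writers in this model do not need to coordinate. Correctness instead depends on the fold being order-insensitive for the collection type (set union is; the timestamp window above is only for in-order timestamps). Deferring merges to `normalize` keeps writes cheap at the cost of extra work on reads until compaction runs.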