Abstract

This study introduces a self-supervised learning (SSL) approach to hyperscanning electroencephalography (EEG) data, targeting the identification of autism spectrum condition (ASC) during social interactions. Hyperscanning enables simultaneous recording of neural activity across interacting individuals, offering a novel path for studying brain-to-brain synchrony in ASC. Leveraging an unlabeled, large-scale single-brain EEG dataset for SSL pretraining, we developed a multi-brain classification model fine-tuned with hyperscanning data from dyadic interactions involving ASC and neurotypical participants. The SSL model demonstrated superior performance (78.13% accuracy and 98.90% recall) compared to supervised baselines and logistic regression using spectral EEG biomarkers. These results underscore the efficacy of SSL in addressing the challenges of limited labeled data, enhancing EEG-based diagnostic tools for ASC, and advancing research in social neuroscience.

Similar content being viewed by others

Introduction

Autism Spectrum Condition (ASC) is a neurodevelopmental condition marked by difficulties in social communication, repetitive behaviors, and restricted interests (Hodges et al., 2020). Diagnosis typically involves behavioral observations and standardised interviews conducted at various stages of childhood and adolescence (Brihadiswaran et al., 2019; Newschaffer et al., 2007). Diagnosing ASC in adults, however, presents challenges due to a lack of validated tools, as many methods are derived from assessments for children (Lord et al., 2020).

Studying the brain is crucial in ASC research as it provides a direct window into the neural mechanisms underlying social and cognitive differences observed in the condition. Traditional behavioral assessments offer valuable insights but may overlook the biological basis of these challenges, particularly in adults (Murphy et al., 2011). Prior research has revealed alterations in brain structure and function in autistic individuals, such as differences in cortical thickness, connectivity, and activity within social brain networks (Ecker et al., 2015; Uddin et al., 2013). These findings underscore the potential of brain-based approaches to refine diagnosis and deepen understanding of the condition.

A promising diagnostic method, complementary to behavioral assessments, is electroencephalography (EEG), which measures neural activity and offers insight into brain connectivity (Gurau et al., 2017). Resting-state EEG studies in autistic individuals have suggested a U-shaped profile of electrophysiological power alterations (Hull et al., 2018; Milovanovic & Grujicic, 2021), characterized by excessive power in both low- (Hornung et al., 2019) and high-frequency bands (Rojas & Wilson, 2014), abnormal functional connectivity patterns, and enhanced power in the left hemisphere of the brain (Wang et al., 2013). These findings offer valuable neurobiological insights into ASC, particularly concerning the left-hemisphere asymmetry, which is especially relevant given the language abnormalities commonly associated with the condition (Dawson et al., 1989; Wang et al., 2013). However, it is important to recognise that such neurophysiological markers do not necessarily indicate a causal relationship with language impairments (Bishop, 2013; Nielsen et al., 2014).

However, these approaches focus on individuals and task-independent activity and might not be enough to capture specific features unique to social interactions, such as dynamicity and reciprocity. In the last two decades, researchers have turned to hyperscanning, which monitors brain activity in multiple individuals simultaneously (Montague et al., 2002; Moreau & Dumas, 2021; Novembre & Iannetti, 2021). This approach has unveiled inter-brain correlation (IBC) and inter-brain synchrony (IBS) in a wide range of social scenarios, including imitation (Dumas et al., 2010), mutual gaze (Leong et al., 2017), shared attention (Hirsch et al., 2017), deceptive interactions (Zhang et al., 2017a, b), verbal communication (Hirsch et al., 2018), coordinated activities (Zamm et al., 2018), interpersonal synchronization (Cui et al., 2012), and collaborative tasks (Matusz et al., 2019). These findings challenge the traditional view of the brain as a self-contained system, instead highlighting its role in dynamic, interactive processes (Bottema-Beutel et al., 2019). To advance our understanding and our potential markers of ASC, it is critical to investigate individuals in natural, reciprocal interactions (Dumas, 2022; Nadel & Pezé, 2017). Since social dynamics underpin many psychiatric conditions, hyperscanning offers a promising path to detect and quantify disruptions in social alignment. Difficulties in aligning neural activity with others could serve as a tangible marker of atypical social cognition, offering a more authentic alternative to conventional measures like resting states, but also social perception tasks or Theory of Mind evaluations (Dumas, 2022). Yet, despite its potential, the use of hyperscanning in ASC research is still in its early stages. A pioneering fMRI hyperscanning study by Tanabe et al. (2012) revealed reduced inter-brain synchronization in the right inferior frontal gyrus of ASC-TD (i.e., typically developing) pairs during joint actions, suggesting distinct neural processing in ASC. Similarly, Wang et al. (2020) found that autistic children showed increased frontal cortex synchronization with their parents during cooperative tasks, and this neural synchronization negatively correlated with autism severity. Kruppa et al. (2021) further explored brain-to-brain synchrony in autistic and TD children, finding behavioral but not neural differences, emphasizing the need for deeper investigation.

Hyperscanning with EEG, offering high temporal resolution and real-time insights into social interactions, is particularly well-suited for studying ASC. Nam et al. (2020) reviewed 60 EEG hyperscanning studies, none of which focused on ASC, revealing a significant gap in understanding the social dynamics of this population. This gap is further underscored by the scarcity of ASC-specific research, as noted by Liu and colleagues (2018). Yet recently, it was found that neural synchrony increased during conversations among ASC individuals, with lower synchrony linked to greater social difficulties (Key et al., 2022), as well as differences in inter-brain synchronization between adult ASC-TD pairs during social imitation tasks (Moreau et al., 2024). These findings underscore the potential of EEG hyperscanning to provide valuable insights into the social dynamics and neural underpinnings of ASC individuals.

Despite the great promises of EEG, it is constrained by a low signal-to-noise ratio, non-stationary signals, and substantial inter-subject variability due to individual physiological differences (Cohen, 2017). These challenges make it difficult to extract meaningful neuromarkers from EEG data using traditional univariate statistical methods (Lotte et al., 2018). To address these issues, computational models are essential for automating pattern recognition, enabling more accurate, reliable, and scalable analysis. Deep learning (DL) models, in particular, are capable of detecting complex patterns in noisy and unstable signals, generalizing across subjects, and automatically extracting relevant features from raw data. This reduces the need for manual feature engineering (Roy et al., 2019). Such capabilities are vital for handling the complexities of EEG data, especially in clinical settings where individual variability is a concern (Wronkiewicz et al., 2015; Zhang et al., 2021). As a result, DL models enhance the ability to detect subtle neural signals, significantly advancing EEG analysis.

DL includes various paradigms, such as supervised, unsupervised, and self-supervised learning. While supervised learning relies on labeled data to train models, self-supervised learning provides an alternative by generating pseudo-labels through data transformations or augmentation (Liu et al., 2021). This allows models to train without the need for external labels, making it particularly advantageous in EEG analysis, where labeling is resource-intensive and requires specialized expertise (Banville et al., 2021; Roy et al., 2019). Given the abundance of unlabeled EEG data, self-supervised learning becomes an invaluable tool, enabling more efficient and scalable analysis. By utilizing self-supervised learning, EEG analysis can become both more efficient and accurate, ultimately enhancing clinical insights. This method reduces the burden of traditional labeling, offering a more scalable solution for advancing data-driven research in the field. Recently, Banville and colleagues (2021) introduced the concept of self-supervised learning to acquire meaningful representations from EEG data. They presented two specific self-supervised learning (SSL) tasks, namely relative positioning (RP) and temporal shuffling (TS), and adapted a third technique called contrastive predictive coding (CPC) (Oord et al., 2019) to apply to EEG data.

This study aims to comprehensively analyze hyperscanning EEG data obtained from both autistic and neurotypical participants. The primary objective is to develop a DL model explicitly designed to extract and recognize patterns and relationships within individual EEG signals, employing a self-supervised learning methodology. Building on the work of Banville et al. (2021), we aimed to apply a self-supervised learning approach to the Healthy Brain Network (HBN) and Brain-to-Brain Communication V2 (BBC2) datasets. We first developed a “single-brain model” using SSL on the HBN dataset, which encodes EEG recordings into a concise feature vector. This vector captures characteristics of individual brain activity and extracts distinctive details. For the downstream task, we introduced a “multi-brain model” that simultaneously processes two single-brain models. This model is specifically designed for binary classification, distinguishing interactions involving at least one autistic individual (ASC) from those between two neurotypical individuals (TD). The representations acquired from these EEG signals will play a pivotal role in developing a multi-brain architecture specifically tailored for hyperscanning EEG classification. The development of objective EEG-based markers for ASC could significantly benefit the autistic community by addressing current diagnostic challenges, including lengthy waiting periods, subjective assessments, and missed diagnoses, particularly in adults (Crane et al., 2018; McKenzie et al., 2015).

Methods and Materials

At its core, self-supervised learning is a two-stage process: a pretext task followed by a downstream task (Jing & Tian, 2019). Pretext tasks serve as the foundational building blocks of SSL, forming a crucial bridge between raw data and the goal of training a model for downstream tasks. In the initial pretext task phase, the model is presented with a set of self-generated challenges or auxiliary objectives. These challenges are carefully designed to encourage the model to extract meaningful and informative features from the unlabeled data. The model learns to uncover patterns, relationships, and representations within the data itself, effectively transforming it into a more structured and informative format.

Once the model has successfully completed the pretext task phase, it has acquired latent knowledge about the data that is then employed in the downstream task phase, where the model is fine-tuned or transferred to specific target tasks (Liu et al., 2021). These downstream tasks can range from image classification and object detection to natural language understanding and recommendation systems. The model’s pre-trained features, gained through the pretext tasks, enhance its performance on these target tasks.

Self-supervised learning has shown strong performance in diverse domains. In computer vision, models like SimCLR and MoCo learn representations by distinguishing whether two image crops come from the same source (Chen et al., 2020; He et al., 2020). In natural language processing, BERT uses masked word prediction to capture contextual structure from raw text (Devlin et al., 2019). In EEG research, SSL has been applied to pretext tasks such as temporal reordering or contrastive learning to support downstream classification of clinical or cognitive states (Banville et al., 2021; Wagh et al., 2021).

Datasets

We used two EEG datasets: the Healthy Brain Network (HBN) dataset for the pretext task and the Brain-to-Brain Communication V2 (BBC2) dataset for the downstream task. The HBN dataset (Alexander et al., 2017), from the Child Mind Institute, includes EEG recordings from over 3,000 participants aged 5–21 years, collected using a 128-channel HydroCel Geodesic EEG system at 500 Hz. The HBN dataset contains both eyes-open and eyes-closed resting-state intervals. These conditions were combined to introduce heterogeneity during pretraining, which can promote the learning of invariant and transferable representations. Due to computational constraints, pretraining was limited to the first 1,000 available EEG recordings; no demographic, clinical, or quality-based filtering was applied.

For the downstream task, we used the BBC2 dataset (Dumas et al., 2014; Moreau et al., 2024), which includes hyperscanning EEG data from 36 participants in dyads: 9 mixed (TD-ASC) and 9 control (TD-TD). The data, recorded with a 64-channel system at 500 Hz, was collected during three interactive phases: observation of hand gestures, spontaneous imitation, and video-guided imitation. Each phase lasted 1.5 min, preceded by a 15-second resting period. This dataset was used to classify dyads based on group composition (TD-TD vs. TD-ASC) and enabled us to fine-tune the pre-trained model from the HBN dataset, assessing its performance in distinguishing neurotypical and autistic interactions.

Preprocessing Pipeline

First, channels that were labeled as “bad,” either manually from the BBC2 dataset or by design in the HBN dataset, were interpolated using the spherical spline method to ensure consistency in the data. A notch filter of 50 Hz for the BBC2 and of 60 Hz for the HBN was applied to remove line noise.

Using EOG channels in BBC2, or proxy EOG channels by creating a bipolar reference from frontal EEG sensors for HBN, we applied Independent Component Analysis (ICA) with the extended infomax method to identify and remove ocular artifacts. Component classification was performed automatically using the mne_icalabel package (Pion-Tonachini et al., 2019), which assigns each independent component a predicted source label. We retained components labeled as “Brain” or “Other” based on the highest-probability class, without manual inspection or thresholding.

To improve ICA performance and minimize contamination, the continuous EEG was band-pass filtered between 0.1 and 48 Hz. The lower cutoff mitigates slow drifts that can destabilize decomposition, while the upper cutoff attenuates high-frequency non-neural artifacts (e.g., EMG, line noise). Although scalp EEG can reflect frequencies above 50 Hz (Dvorak et al., 2018; Fitzgerald & Watson, 2018), reliable estimation of high-gamma activity is constrained by low signal-to-noise ratio and pervasive muscle contamination (McMenamin et al., 2010; Miller et al., 2007; Muthukumaraswamy, 2013). We therefore adopted a conservative upper bound at 48 Hz.

EEG data was then segmented into constant 1-second epochs, a critical step for accurate inter-brain synchrony (IBS) computation (Ayrolles et al., 2021). Remaining noisy epochs were automatically rejected using Autoreject (Jas et al., 2017).This approach balances temporal resolution and statistical power; methodological trade-offs are further detailed in “Epoch Length” section.

Although downsampling to ~ 96 Hz would satisfy the Nyquist criterion for signals below 48 Hz, we chose to retain the original 500 Hz rate. This decision preserved fine-grained temporal resolution (~ 2 ms) and functioned as a form of data augmentation by increasing the number of samples per epoch, which is beneficial in deep learning workflows.

Since the HBN and BBC2 datasets employed different EEG montages (HBN using the 128-channel HydroCel Geodesic Sensor Net (GSN) system (The Geodesic Sensor Net, n.d.) and BBC2 following the 64-channel 10–10 Standard System), we aligned the montages for cross-dataset compatibility. The HBN montage was downsampled to match the BBC2 channel count by carefully mapping corresponding channels between the two systems based on established equivalents (EGI Technical Note). This alignment ensured consistency across datasets, enabling effective model training and evaluation. Appendix 1 and Online Resource 1 (ESM_1.csv) provide the full channel mapping details.

While our preprocessing pipeline was not adapted specifically for self-supervised learning, these steps, such as artifact removal and channel interpolation, are essential for improving the quality of the input data, which in turn facilitates more robust representation learning during SSL (Banville et al., 2021; Roy et al., 2019).

Pretext Task

In this study, the model is pre-trained on unlabeled single-brain EEG data using a temporal shuffling (TS) task adapted from Banville et al. (2021). This task trains the model to recognize temporal order within EEG signals, encouraging it to learn meaningful representations from the intrinsic structure of resting-state data.

The TS task is structured around labeled samples drawn from the EEG time series, \(S\epsilon\:{R}^{E\times C\:\times T},\)where E is the number of epochs, C is the number of EEG channels, and T is the number of time samples per epoch. Each sample contains three epochs: two “anchor” epochs, \(\:{x}_{e\:}\) and \(\:{x}_{e^{\prime\prime}}\), and a third epoch, \(\:{x}_{e^{\prime}}\). The index e’ indicates the epoch index at which the window starts in S, and \(\:\tau\:\) is the duration of each window. Since we are dealing with resting state EEG, we establish labels assuming that epochs close in time share similar characteristics. Only windows containing epochs that are less than three seconds away are processed.

The positive context \(\:{\tau\:}_{pos}\epsilon\:N\) defines the duration within which two anchor epochs are selected, while the negative context \(\:{\tau\:}_{neg}\epsilon\:N\:\)provides temporal contrast. Epoch triplets are then classified as either ordered (e.g., e < e’ < e’’) or shuffled (e.g., e < e’’ < e’), with the label \(\:{y}_{i}\) indicating the arrangement. This framework enables the model to learn temporal dependencies and capture local neural dynamics, ultimately improving its ability to detect meaningful patterns in hyperscanning tasks.

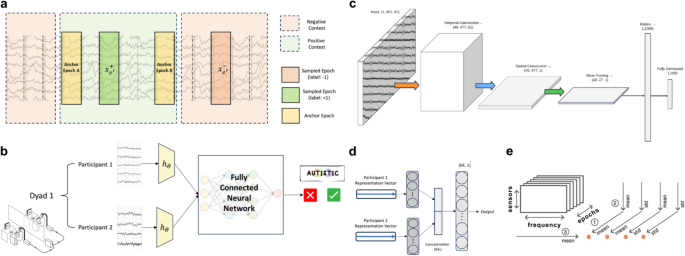

Figure 1a illustrates the sampling process for this pretext task. In each iteration, two anchor epochs, designated in the figure as “Anchor Epoch A” and “Anchor Epoch B”, are selected, with their temporal distance controlled by a hyperparameter that defines the boundary of the positive context. This positive context is specified as a temporal interval, often represented as a set number of epochs. In the example shown in the figure, the positive context is four epochs (equivalent to four seconds)

a Sampling in the TS Pretext Task: Positive context defined by temporal distance between anchor epochs, with positive and negative labels assigned accordingly. b Downstream Task Model: Visualization of task-specific architecture utilizing pretext embeddings. c Shallow ConvNet Architecture: Embedder network \(\:{h}_{\theta\:}\) for feature extraction. d Multi-Brain Architecture: Fully connected neural network binary classifier. e EEG Biomarker Extraction: Methodology based on Denis A. Engemann et al. (2018)

To achieve end-to-end learning in discerning epochs, two essential functions \(\:{h}_{\theta\:}\) and \(\:{g}_{TS}\) are required. The function \(\:{h}_{\theta\:}\::{\:R}^{C\:\times\:T}\:\to\:\:{R}^{D}\:\)serves as a feature extractor with learnable parameters, \(\:\theta\:\) mapping an input EEG epoch into a meaningful \(\:D\)-dimensional feature-space representation. The aim is for this feature extractor to capture the underlying structure of the epoched EEG input, enabling effective use of these representations in the downstream task.

Following feature extraction, a contrastive module, \(\:{g}_{TS}\), aggregates and evaluates relationships between epochs by contrasting their feature representations. Specifically, for temporal shuffling, the module \(\:{g}_{TS}\::{\:R}^{D}\:\:\times\:\:{\:R}^{D}\:\times\:\:{\:R}^{D}\to\:\:\:{R}^{2D}\:\:\)operates by concatenating the elementwise absolute differences between a test epoch representation, \({h}_{\theta}\left({x}_{e^{\prime}}\right)\) and two anchor epochs, \(\:{h}_{\theta\:}\left({x}_{e\:}\right)\) and \(\:{h}_{\theta\:}\left({x}_{e^{\prime\prime}}\right)\):

This concatenated output emphasizes the temporal relationships between the epochs, facilitating contrastive learning by focusing on differences between ordered and shuffled samples. A linear context-discriminative model then predicts the target label \(\:{y}_{}\) associated with each epoch triplet by applying binary logistic loss on the outputs of the contrastive module. This setup results in a joint loss function, defined as follows:

where Z is a normalization factor, ensuring that the sum is taken over a set N of samples, and (\(\:{x}_{e^{\prime}}\), \(\:{x}_{e\:},\:{x}_{e^{\prime\prime}}\), \(\:{y}_{}\)) represents a sample from the set N with \(\:{y}_{}\) indicating the ordered or shuffled label. Parameters \(\:{w}^{T}\) and \(\:{w}_{0}\) are those of the linear context-discriminative model, representing the weight vector and bias term, respectively.

By combining the logistic loss with contrastive representations derived from the \(\:{g}_{TS}\:\)module, this joint loss function penalizes deviations from the true labels \(\:y\), thereby encouraging the model to learn and generalize temporal dependencies across epochs effectively. Moreover, summing over a set of samples, N, further reinforces the model’s ability to discern temporal patterns across varied EEG epochs.

The representations learned through the temporal shuffling task capture structural features of the EEG signal (e.g., spectral regularities, temporal continuity, and inter-channel dependencies) that serve as individualized neural signatures. These embeddings generalize well to downstream tasks, including those involving dyadic classification, because of their grounding in stable signal properties (Banville et al., 2021; Wagh et al., 2021).

Downstream Task

Leveraging representations learned through the temporal shuffling pretext task, we used the pre-trained SSL model as a feature extractor for a downstream binary classification task. The hyperscanning EEG data used for this phase captured interactions either between two neurotypical participants (TD–TD) or between one neurotypical and one autistic individual (ASC–TD).

We fine-tuned a fully connected neural network to classify dyads based on features extracted from two simultaneously recorded EEG streams (Fig. 1B). This end-to-end model, combining the self-supervised encoder with a downstream classifier, enabled accurate prediction of whether a given interaction stemmed from a control or mixed dyad. This approach highlights the potential of self-supervised learning to improve model performance in specialized tasks, particularly in classifying neurotypical and autistic social interactions.

Model Architecture

The EEG self-supervised learning framework employs embedders as feature extractors to convert each 1-second epoch of data into a 100-element vector that captures the underlying neural activity. These embeddings are subsequently input into a contrastive module to evaluate temporal dependencies between epochs.

For feature extraction, the framework employs a customized Shallow ConvNet (Schirrmeister et al., 2017), originally developed for brain–computer interface applications. Unlike conventional 1D CNNs that jointly convolve across time and channels, this architecture applies temporal convolutions independently per channel to capture short-term temporal patterns before performing spatial aggregation across electrodes. The ConvNet consists of a single convolutional layer followed by a squaring non-linearity, average pooling along the temporal axis, a logarithmic non-linearity, and a linear output layer. Batch normalization is applied after the convolution to stabilize feature distributions.

Despite its simplicity, Shallow ConvNet has demonstrated strong performance in EEG-based tasks, including pathology detection (Gemein et al., 2020), and has been effectively used in the temporal shuffling pretext task, as reported by Banville et al. (2021). Following the configuration proposed by these authors, we fixed the embedding dimension at 100. This dimensionality provides sufficient capacity to capture temporal and spectral dynamics while mitigating overfitting, therefore, it is a pragmatic balance between expressivity and computational feasibility given our dataset size and available resources.

In this study, the model is tailored to process 1-second resting-state EEG epochs with 61 channels sampled at 500 Hz, resulting in an input tensor size of (501 × 61). Adjustments to kernel sizes and dense layer dimensions are made to ensure compatibility with the altered input dimensions, optimizing performance for the task at hand, as illustrated in Fig. 1C. Complete architecture specifications, including layer parameters and hyperparameters, are provided in Appendix 3 and Online Resources (ESM_3 and ESM_4).

For the downstream task described in “Pretext Task” section, we utilized two pre-trained embedders to generate vectorized representations of hyperscanning EEG data. These representation vectors were subsequently input into a binary classifier, which was implemented as a fully connected neural network. The architecture of the classifier is depicted in Fig. 1D.

Baseline Comparison

To evaluate the performance of our model on the downstream task, we compared it against two baseline approaches: (1) a purely supervised model and (2) a logistic regression classifier using extracted spectral EEG biomarkers. Each baseline approach was designed to highlight different aspects of model performance and compare the effectiveness of the SSL framework with traditional methods.

Supervised Model

In this baseline approach, no pretext training is conducted, meaning the model starts from scratch without any learned representations from the SSL phase. The model is directly trained on the downstream task using the BBC2 dataset, with no prior knowledge or weight adjustments. This comparison evaluates whether the SSL pre-training adds value, as it allows us to assess if the model benefits from prior learning or if training from scratch performs similarly. The goal is to determine the importance of transferring knowledge from the pretext task to the downstream task.

Logistic Regression Classifier with Spectral EEG Biomarkers

In this baseline, we implement a traditional machine learning pipeline based on spectral EEG biomarkers. Following the methodology introduced by Engemann et al. (2018) for single-subject analysis, we adapt the approach to the hyperscanning context by extracting features separately from each participant in a dyad. For each individual, we compute power spectral density (PSD) values from preprocessed EEG across five standard frequency bands—delta (2–4 Hz), theta (4–8 Hz), alpha (8–12 Hz), beta (12–30 Hz), and gamma (30–48 Hz). Each band is represented by two metrics: absolute PSD and normalized PSD (i.e., relative to total broadband power), yielding ten spectral biomarkers per participant.

To summarize the PSD values for each marker, we calculate four statistical descriptors: the mean of means, the mean of standard deviations, the standard deviation of means, and the standard deviation of standard deviations. These measures are aggregated across epochs, sensors, and frequency bins to form a compact feature representation.

For each dyad, the resulting feature vectors from the two participants are concatenated, creating a joint representation that reflects the spectral characteristics of the pair. These vectors are then used to train a binary logistic regression classifier that predicts whether the dyad corresponds to a neurotypical–neurotypical (TD–TD) or a neurotypical–autistic (ASC–TD) interaction. Details of the individual biomarkers are provided in Appendix 2 and Online Resource 2 (ESM_2.csv).

Results

Pretext Task Model Performance

The pretext model, a single-brain neural network, demonstrated strong feature extraction capabilities on a proxy dataset of 150,123 epoch triplets derived from 1,000 HBN subjects. Each triplet, consisting of two anchor epochs and one sampled epoch, was created using the TS algorithm with a positive context of 10 s. Epochs contained 501 time samples across 61 EEG channels. The dataset was split into training (90%) and evaluation (10%) subsets, with the evaluation portion further divided into validation (90%) and test (10%) sets.

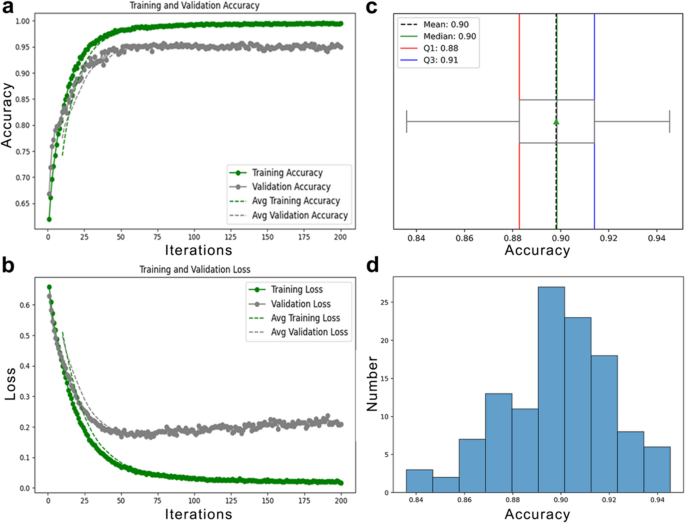

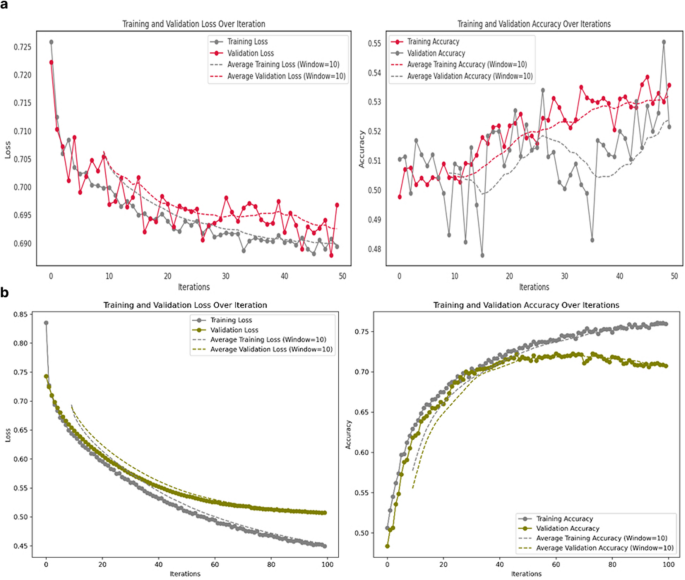

The model was trained for 200 iterations using the Adam optimizer (learning rate: 1e-5), a batch size of 128, a dropout rate of 0.4, and 207,021 trainable parameters. As shown in Fig. 2a, training accuracy reached 100% with near-zero loss (Fig. 2b), while validation accuracy plateaued, indicating overfitting. Early stopping at iteration 34 helped mitigate this issue.

a Performance of the pretext model with the Shallow ConvNet embedder during training, showing training and validation accuracy over iterations. b Corresponding training and validation loss curves during the same phase. c Statistical summary of testing accuracy, including mean, median, and interquartile range. d Distribution of test set accuracy values, highlighting statistical characteristics and variability

Evaluation on the test set, conducted with a batch size of 64, yielded an average accuracy of 90%, as depicted in Fig. 2c, with accuracy values distributed mostly between 88% and 92% (Fig. 2d). These results confirm the model’s ability to learn and generalize meaningful temporal features from EEG data.

Downstream Task: Performance of the SSL Multi-Brain Model

A downstream binary classification task was performed using hyperscanning EEG data from the BBC2 dataset. The objective was to classify dyadic interactions as either neurotypical (TD) or involving at least one autistic individual (ASC). The dataset comprised 142,120 dyadic epochs, each containing data from 61 EEG channels and 501 time samples, representing a one-second temporal window.

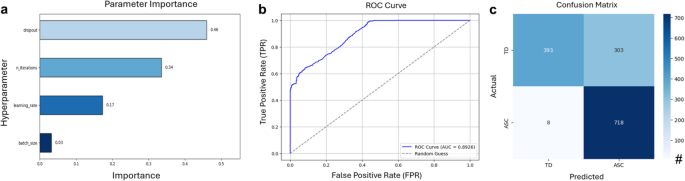

To process these epochs, a multi-brain model was employed, integrating two pre-trained single-brain embedders based on the Shallow ConvNet architecture. The data were divided into training (90%) and evaluation (10%) subsets, consistent with the partitioning strategy used during the pretext task. Model training was conducted using the Adam optimizer with a logarithmic logistic loss function. Hyperparameter tuning was carried out using Optuna (Akiba et al., 2019), a Python-based framework that leverages the Tree-structured Parzen Estimator (TPE). All computations were executed on the Compute Canada Narval Cluster. Among the optimized hyperparameters, dropout emerged as the most influential factor (Fig. 3a). The finalized configuration included 79 optimization iterations, a batch size of 8, a learning rate of 9.3e-4, and a dropout rate of 0.38.

a Hyperparameter importance in the multi-brain model. b ROC curve, and (c) Confusion matrix of SSL Multi-Brain Model

The multi-brain model achieved a mean test accuracy of 78.13% when evaluated on batches of five unseen dyadic epochs, closely approaching the benchmark accuracy of 79.4% reported by Banville et al. (2021). To further assess performance, additional metrics were calculated, including the ROC curve, binarized predictions at a threshold of 0.5, and a confusion matrix (Fig. 3b and c).

Key performance metrics underscored the effectiveness of the SSL multi-brain model in distinguishing between neurotypical and ASC interactions. The model achieved a precision of 70.32%, indicating its capacity to accurately identify ASC interactions. Recall (sensitivity) was notably high at 98.90%, reflecting robust detection of ASC cases. The F1 score, a harmonic mean of precision and recall, was 82.20%, highlighting the model’s overall reliability. However, specificity was lower at 56.47%, indicating reduced accuracy in classifying neurotypical interactions. Despite this limitation, the model demonstrated strong overall accuracy (78.13%) and a robust ROC AUC of 0.8926, confirming its strong discriminative ability across both classes.

Baseline Comparisons

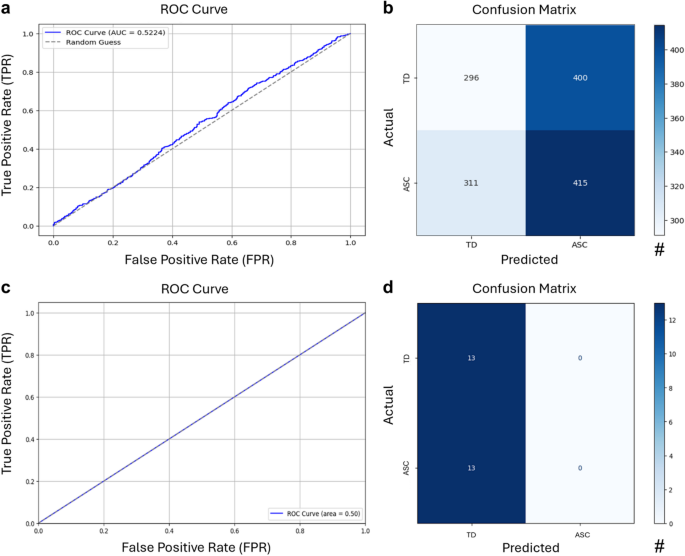

To evaluate the impact of SSL pre-training, we conducted baseline experiments using two approaches: a supervised multi-brain model with untrained embedders and a logistic regression classifier trained directly using the BBC2 dataset. The first baseline utilized the same multi-brain architecture as the SSL model but without pre-training the embedders. This supervised model was trained on labeled hyperscanning EEG data from the BBC2 dataset, using identical architecture, dataset splits, hyperparameters, and optimization procedures as the SSL model. Testing on batches of five dyadic epochs resulted in an average accuracy of 52%, slightly above random chance (50%).

Moreover, the model’s performance was further assessed using the following metrics: It achieved an ROC AUC of 0.5224, precision of 0.5290, recall of 0.5275, an F1 score of 0.5283, specificity of 0.5101, and overall accuracy of 0.5190. The confusion matrix (Fig. 3B) and the ROC curve (Fig. 3A) confirmed the model’s limited discriminative capacity, as its results were only marginally better than random chance.

The second baseline involved a logistic regression classifier trained on spectral EEG biomarkers extracted following the methodology outlined in “Logistic Regression Classifier with Spectral EEG Biomarkers” section. These biomarkers included five primary frequency bands—delta (δ), theta (θ), alpha (α), beta (β), and gamma (γ)—and their normalized versions, resulting in a total of 10 spectral biomarkers per participant. Each feature was summarized using four statistical measures—mean-mean, mean-std, std-mean, and std-std—resulting in 40-dimensional feature vectors per participant. Dyadic feature vectors were formed by concatenating the features from both participants, producing 80-dimensional inputs for binary classification.

Despite hyperparameter tuning using GridSearchCV, the logistic regression model achieved a cross-validated accuracy of 50%, a test accuracy of 50%, and an ROC AUC score of 0.50 (Fig. 3A). The classification report revealed that the model failed to predict one of the classes (ASC), with predictions heavily biased toward the majority class (TD). For the class TD, the model achieved a precision of 0.50, a recall of 1.00, and an F1-score of 0.67, suggesting that while it successfully identified all instances of TD, half of its TD predictions were incorrect. However, for the ASC class, the model showed poor performance with a precision and recall of 0.00, resulting in an F1-score of 0.00. The confusion matrix confirmed this bias, demonstrating that the handcrafted features were insufficient to enable reliable classification of the dyads (Fig. 4D).

a ROC curve and (b) confusion matrix of the supervised multi-brain model. c ROC curve and (d) confusion matrix of the logistic regression classifier

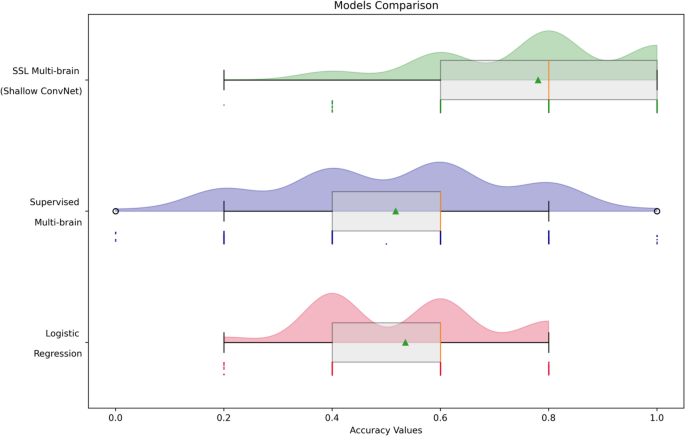

SSL Multi-Brain Model Outperforms the Baseline Models

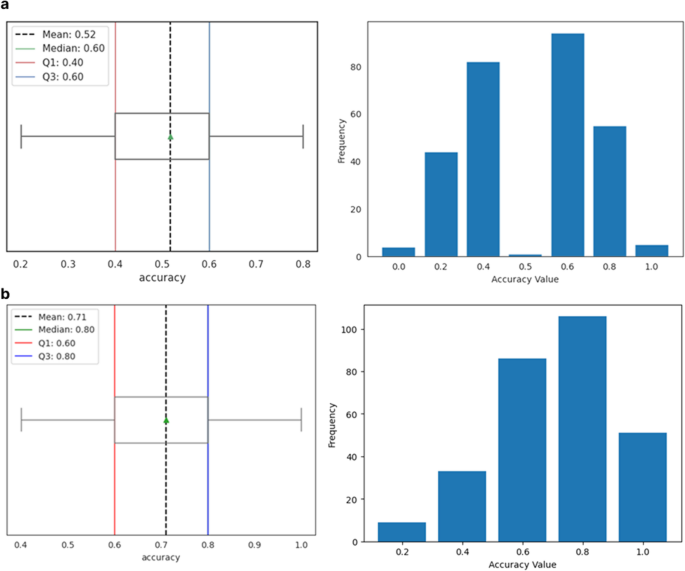

Our analysis reveals significant performance differences among the models (Fig. 5). The SSL Multi-Brain model with Shallow ConvNet embedders achieved the highest classification accuracy (78.13%), demonstrating the effectiveness of self-supervised learning in extracting robust features from EEG data. Additionally, it exhibited greater consistency, with a narrow interquartile range and the third quartile aligning closely with the maximum, reinforcing its status as the top performer.

Comparison of the models’ performances for the hyperscanning-EEG classification task

In contrast, the supervised multi-brain model without pre-training achieved only 52% accuracy, which is comparable to random chance. This highlights the difficulty of training on hyperscanning data without SSL pre-training, which is crucial for learning rich, meaningful feature representations.

For all deep learning models, testing was conducted on 285 dyadic epochs, and accuracy was calculated in batches of five epochs. In contrast, the logistic regression model, which used features extracted from each dyad rather than individual epochs, was evaluated on a testing set of 26 dyads. To ensure comparability of the results, a resampling method was applied to calculate accuracy for these 26 samples in batches of five. The logistic regression model achieved a mean accuracy of 50%, which is equivalent to chance performance, highlighting the challenges of classifying hyperscanning data using traditional machine learning techniques.

In terms of class balance, the SSL model performed well despite the natural class imbalance in the dataset, as it maintained a high recall (98.90%) for detecting ASC interactions. This is critical, as class imbalance often skews model performance, leading to models that favor the majority class (neurotypical). The precision-recall trade-off here highlights the model’s effectiveness in detecting ASC interactions, with a precision of 70.32%. This is a strong performance in identifying ASC cases, even though specificity was lower (56.47%), reflecting the challenge of correctly identifying neurotypical interactions.

The baseline models, on the other hand, struggled with these challenges. The supervised multi-brain model’s precision, recall, and F1 score were low, indicating poor discrimination between the classes. The logistic regression model also demonstrated a heavy bias toward the majority class (neurotypical), with a recall of 1.00 for TD and a recall of 0.00 for ASC, further highlighting its failure to capture the complexity of the data.

These findings demonstrate the superiority of the SSL Multi-Brain model, which not only outperforms traditional and supervised deep learning models but also handles the class imbalance effectively. By leveraging unlabeled data through pretext tasks, SSL extracts more reliable, transferable features, setting a new benchmark for hyperscanning EEG analysis.

Discussion

In this study, we demonstrated that Self-Supervised Learning (SSL) can be effectively applied to epoched EEG data, yielding strong results in hyperscanning analyses. Specifically, SSL enhanced the performance of binary classification tasks, distinguishing between Autism Spectrum Condition (ASC) and neurotypical (TD) interactions. By leveraging large-scale, unlabelled EEG data, SSL enabled the extraction of meaningful temporal features, which significantly improved the performance of downstream classification tasks. Notably, SSL-based pretraining achieved a mean accuracy of 78.13%, outperforming baseline models and underscoring SSL’s potential to address the challenges posed by unlabeled and multivariate EEG data. These results establish a new benchmark for future research in hyperscanning studies.

ASC Diagnosis Via Deep Learning in Hyperscanning EEG

Despite the growing application of deep learning in EEG studies, its use in diagnosing ASC remains relatively underexplored compared to traditional methods. As noted by Khodatars et al. (2021), neuroimaging modalities such as EEG, MRI, resting-state fMRI, and fNIRS have been widely employed in ASC research, with resting-state fMRI being the most commonly used.

While a handful of studies (Bouallegue et al., 2020; Tawhid et al., 2021; Ranjani & Supraja, 2021; Dong et al., 2021) have applied deep learning techniques to EEG data for ASC detection, they primarily focus on individual brain activity. Despite demonstrating promising classification accuracy, these approaches overlook the dynamics of social interactions, which are crucial for understanding ASC. In contrast to these studies, our work shifts the focus to interactions between individuals in hyperscanning, where we analyze simultaneous brain activity from two individuals. This novel approach provides a fresh perspective on ASC detection, offering new insights into how interaction-based neural dynamics can contribute to understanding and diagnosing neurodevelopmental conditions like autism. By reframing psychiatric conditions as emergent from dynamic interpersonal interactions rather than solely individual deficits, we underscore the importance of studying relational processes and mismatched expectations in understanding autism and other conditions (Bolis et al., 2022).

Model Performance and Implications for Clinical Practice

The SSL multi-brain model achieved a mean test accuracy of 78.13%, closely matching the benchmark of 79.4% reported by Banville et al. (2021) for self-supervised learning in EEG classification. This is particularly relevant for advancing diagnostic tools for ASC based on EEG data. Compared to traditional diagnostic tools like the Autism Spectrum Quotient (AQ), Ritvo Autism Asperger’s Diagnostic Scale-Revised (RAADS-R), and the Autism Diagnostic Observation Schedule (ADOS), the model shows both strengths and limitations, in some cases improving upon existing methods.

The model’s sensitivity of 98.90% significantly surpasses the AQ (77%), RAADS-R (52–65%), and ADOS (~ 65%) (Ashwood et al., 2016; Conner et al., 2019; Jones et al., 2021; Sizoo et al., 2015). This high sensitivity is key for minimizing false negatives and detecting nearly all ASC interactions, which is crucial for early intervention and improved outcomes. The model’s ROC AUC of 0.8926 also indicates strong discriminative power, outperforming the AQ (0.40) and RAADS-R (0.58), and aligning more closely with the ADOS (0.69). This demonstrates the model’s ability to differentiate ASC behaviors from neurotypical ones.

However, the model faces challenges in specificity, with a rate of 56.47%, leading to a higher rate of false positives. This may be due to overlapping behaviors between neurotypical individuals and those with ASC, a challenge also seen in traditional diagnostic tools like AQ and RAADS-R, where comorbidities such as generalized anxiety disorder (GAD) contribute to false positives (Ashwood et al., 2016; Conner et al., 2019; Jones et al., 2021). The AQ, for instance, has poor specificity (29–52%) in clinical settings due to shared traits with GAD (Ashwood et al., 2016), while RAADS-R’s specificity ranges from 44% to 73% (Conner et al., 2019; Sizoo et al., 2015).

Although not yet ready for widespread clinical adoption, the model has potential as a complementary tool for ASC diagnosis. Its scalability, low-cost post-training, and minimal memory requirements make it suitable for integration into routine clinical practice. The model’s high sensitivity and strong AUC make it particularly effective in identifying ASC interactions. However, further research is needed to improve its specificity, particularly in distinguishing ASC from other conditions with overlapping traits. Ultimately, this study highlights the potential of computational models like the multi-brain approach to enhance diagnostic accuracy, leading to more personalized care for individuals on the autism spectrum.

Since our method is lightweight, interpretable, and built on open tools, it could support clinical use as a decision aid. By revealing neural patterns of social interaction, it may enable earlier, more individualized support benefiting autistic individuals directly. Grounded in brain-based signals rather than behavioral judgments, this approach may reduce assessment burden and promote tools that are more inclusive, respectful, and aligned with neurodiversity-affirming care.

Modeling Hyperparameters

Epoch Length

In our experimental design, EEG recordings were segmented into one-second epochs as a foundational preprocessing step. This epoch length defined the window size for subsequent pretext tasks, balancing temporal resolution with computational feasibility. Selecting the optimal epoch length is informed by domain knowledge and further refined through experimental tuning. Epoch lengths of one to two seconds are commonly used, as they strike a balance between temporal resolution and statistical reliability (Fraschini et al., 2016; Li et al., 2018). However, the ideal length depends on the study’s objectives and the EEG signal characteristics.

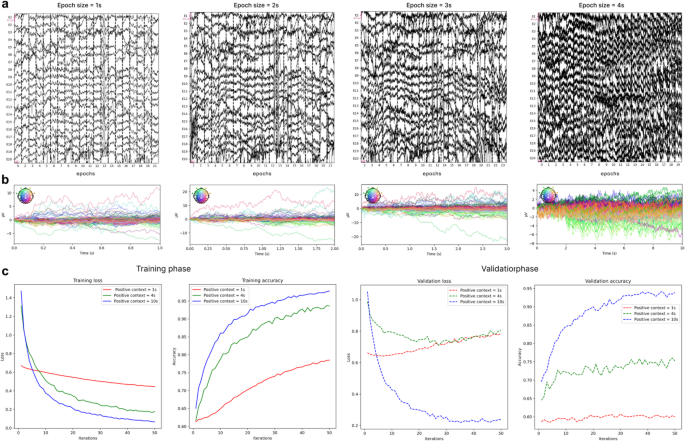

Expanding the size of epochs introduces several trade-offs. While larger epochs may encompass more temporal context, they also reduce the total number of available training samples, increase susceptibility to noise, and exacerbate computational demands due to heightened input dimensionality. Additionally, the artifact rejection pipeline necessitated excluding entire epochs if artifacts were detected, disproportionately affecting longer epochs due to their greater likelihood of containing noise.

Figure 6a illustrates this trade-off using a sample from the HBN dataset. As shown, 1-second epochs led to 28 dropped epochs due to artifacts, while 2-second and 3-second epochs resulted in 35 and 64 dropped epochs, respectively. In contrast, 10-second epochs dropped 60 epochs and caused a marked degradation in signal quality, producing noisier samples. Figure 6b further visualizes this degradation using butterfly plots, where each trace represents an EEG channel over time. With increasing epoch length, the plots show greater signal dispersion and reduced temporal alignment, indicating elevated noise and variability. Notably, the 1-second epochs produce cleaner, time-locked traces, while the 10-second epochs exhibit overlapping and erratic signals, reflecting diminished signal clarity. A similar pattern was observed in other samples from both the BBC2 and HBN datasets. However, to conclusively determine the optimal balance between epoch length, artifact rejection rates, and signal quality, further studies are required.

a Raw EEG segments of varying epoch lengths (1s, 2 s, 3 s, and 4 s) from a sample in the HBN dataset, illustrating the impact of epoch duration on signal continuity and noise levels. b Corresponding butterfly plots for each epoch length, showing overlaid EEG channel averages. c Impact of positive context length on the performance of Shallow ConvNet during pretext task training and validation phases

Positive Context Length

Positive context length, within the pretext task framework, refers to the temporal separation between randomly selected positive epochs and anchor epochs. It is a crucial parameter in our SSL pipeline, influencing the balance of positive and negative samples used for training DL models. A larger positive context length increases the number of epochs labeled as positive, which improves dataset balance. However, if the temporal distance between positive samples becomes too large, the model may struggle to distinguish them effectively, leading to misclassifications. Therefore, selecting an optimal positive context length is essential to balancing dataset distribution and model performance.

To assess its impact, we experimented with positive context lengths of one second, four seconds, and 10 s using Shallow ConvNet in the pretext task. As shown in Fig. 6c, training loss consistently decreased, and accuracy increased across all context lengths. However, with a one-second positive context, the improvements were slower compared to the four-second and 10-second contexts. During the validation phase, the effect was more pronounced: for the one-second context, loss increased, and accuracy stagnated, indicating difficulties in learning from the minority class and a bias toward the majority class.

Increasing the positive context to four seconds alleviated some of these issues, but performance still showed room for improvement. Notably, extending the context to 10 s significantly improved performance, resolving class imbalance and enhancing accuracy. Based on these findings, we selected a positive context length of 10 s.

Given our focus on improving downstream task performance, we have not yet explored higher values for positive context length or conducted further hyperparameter tuning. However, as shown, positive context length plays a significant role in the pretext task model’s performance, and further analyses of this parameter are essential. Specifically, investigating the upper bound for positive context length and comparing it to the total duration of EEG recordings could offer valuable insights into optimizing the pretext task model for this dataset and task.

Data Splitting and Generalization

Data were divided into training, validation, and test sets using epoch-level splits to maximize the limited sample size of the BBC2 dataset. Epoch pairs were always formed within the same dyad, ensuring inter-dyad independence while allowing distinct epochs from the same individuals across partitions. Enforcing strict subject- or dyad-level separation would have yielded too few epochs per partition for reliable convergence or statistically reliable evaluation, a common constraint in EEG deep-learning studies (Schirrmeister et al., 2017; Lawhern et al., 2018). Because deep networks rely on large and diverse input distributions for stable optimization (Goodfellow et al., 2016; Zhang et al., 2017a, b), limiting training to a small subset of subjects would have further reduced the effective sample size.

This epoch-level strategy, widely used in EEG ML, increases sample diversity and stabilizes training but may introduce cross-partition dependencies if subject-specific patterns recur (Brookshire et al., 2024; Del Pup et al., 2025). Empirical analyses show such lenient splits yield higher yet less generalizable accuracies than subject-independent designs (Del Pup et al., 2025; Xu et al., 2020). Our approach thus reflects a pragmatic trade-off between data sufficiency and independence.

A supervised baseline trained under the same splits performed at chance level, whereas the SSL-pretrained model achieved substantially higher accuracy. This confirms that performance gains arise from representation learning, not data leakage. We therefore report our results as upper-bound estimates of generalization, emphasizing transparency for future comparison.

Alternative SSL Objectives and Loss Functions

This study employed TS as the self-supervised pretext task, optimized using a binary cross-entropy loss. The design mirrors the temporal-order formulations of Banville et al. (2021), where the model learns to distinguish temporally consistent (ordered) from inconsistent (shuffled) EEG segments. Because this is a binary relational classification task, cross-entropy provides a theoretically coherent objective. By contrast, CPC and related methods (Oord et al., 2019; Banville et al., 2021) use InfoNCE-style losses suited to multi-negative prediction, where the model must identify the correct future window among several candidates.

Although our framework centers on temporal-order discrimination, numerous alternative SSL objectives could be explored for EEG. Masked-segment prediction (Devlin et al., 2019), instance discrimination (Chen et al., 2020; He et al., 2020), or predictive-coding formulations (Schneider et al., 2019) capture complementary temporal or spectral regularities that may enrich learned embeddings. InfoNCE-based or other contrastive losses likewise impose distinct inductive biases and optimization dynamics, potentially enhancing representational diversity, but these comparisons were beyond the present scope.

Our aim was not to assert the superiority of the TS objective or the logistic loss, but to show that a lightweight, conceptually aligned SSL approach can yield robust and transferable EEG embeddings. Future work should systematically compare binary and multi-negative objectives to clarify which best capture the complex spatio-temporal dependencies underlying interpersonal neural dynamics in hyperscanning EEG.

Hyperparameter Tuning

Both the supervised baseline and the SSL-pretrained model were initially trained using identical architectures and optimization setting including learning rate, batch size, dropout rate, and training duration to ensure that observed differences reflected the effect of self-supervised pretraining rather than optimization variability. Under these shared initial conditions, the SSL-pretrained model achieved 71% accuracy, whereas the supervised model remained near chance level and displayed unstable, erratic training dynamics. The SSL model’s learning curves indicated consistent convergence and meaningful representation learning, while the supervised network showed oscillatory loss behavior and no clear learning trajectory (Appendix 4).

In a subsequent phase, hyperparameter tuning of the SSL configuration as discussed in “Downstream Task: Performance of the SSL Multi-Brain Model” section increased the accuracy to 78.13%, suggesting that the SSL-initialized model provided a strong and adaptable optimization starting point. For the supervised baseline, only limited manual tuning was attempted, yet it yielded no substantial improvements in performance or stability.

We acknowledge that a more systematic and symmetric hyperparameter optimization would provide a fairer comparison and a clearer assessment of each model’s capacity. Developing such procedures remains an important direction for future work, as discussed in “Limitations” section and consistent with prior evidence that EEG deep-learning performance is highly sensitive to tuning (Roy et al., 2019; Zhang et al., 2021).

Limitations

This study is subject to several key limitations, which should be considered when interpreting the findings and planning future research.

First, due to the computational demands of training neural networks on large EEG datasets, we fixed the hyperparameters (e.g., learning rate, batch size, number of iterations, dropout rate) for the pretext task. While this decision was made for practical reasons within the scope of the study, it may limit the models’ adaptability to different datasets and training conditions. Future research should explore hyperparameter tuning for the pretext task and assess its impact on downstream model performance. Such exploration could optimize the models for specific tasks and datasets, potentially leading to improved results.

In addition, while both the SSL-pretrained and supervised models were trained under identical architectures and initial optimization conditions, we did not pursue fully independent hyperparameter optimization for each regime. As discussed in “Hyperparameter Tuning” section, a more symmetric tuning strategy would allow fairer comparisons and could reveal further gains in both approaches. Furthermore, as detailed in “Data Splitting and Generalization” section, the present study employed epoch-level rather than subject-level data splits due to dataset size constraints. This design ensured sufficient training stability but may permit limited overlap across partitions; future work with larger datasets should incorporate stricter subject-level or dyad-level validation to more rigorously assess model generalization.

Second, the sampling and segmentation procedures, specifically, the epoch length and positive context window (Sections Epoch Length and Positive Context Length ), influence how temporal dependencies are captured. We primarily analyzed 1-second epochs to maintain temporal precision and signal clarity, and found that longer epochs introduced overlapping noise and reduced interpretability. Nonetheless, longer temporal windows may better capture slow interpersonal dynamics, and systematic evaluation across epoch sizes remains a promising direction. While we have observed the effects of positive context length and epoch size independently, a comprehensive investigation of their joint influence is warranted. Such analysis would not only enhance model performance but also clarify how epoch size, context length, class imbalance, and noise interact to shape model efficacy. Similarly, although we retained the 500 Hz sampling rate to preserve fine temporal structure, moderate downsampling could improve computational efficiency without substantial signal loss; future scaling studies should explore this trade-off.

Third, the pretext data included both eyes-open and eyes-closed intervals. These distinct resting conditions were intentionally combined to increase spectral diversity during pretraining, promoting invariance to physiological state. However, the relative contributions of each condition were not analyzed separately. Future work should compare models trained on single versus mixed conditions to assess the robustness of learned representations.

Fourth, in the logistic regression baseline analysis, we only used 10 of the biomarkers proposed by Engemann and colleagues (2018) (Section Logistic Regression Classifier with Spectral EEG Biomarkers), which were originally designed for single-brain EEG. Our approach to adapting these biomarkers for hyperscanning EEG was simplified: we concatenated individual EEG feature vectors to create a dyadic input. However, this method may not fully capture the complexity of hyperscanning data. Future studies should explore more advanced feature engineering techniques to better adapt single-brain EEG biomarkers to the multi-brain context.

Finally, the models in this study were trained on a subset of the HBN dataset, consisting of 1,000 participants—approximately one-third of the available data. While the HBN dataset is continuously expanding and future versions will include more participants, training on a smaller portion of the data may limit the model’s generalizability. Future studies should address the challenges associated with training on larger datasets, such as optimizing the data loading pipeline to better handle memory constraints for efficient deployment. However, it is also important to investigate the relationship between model complexity, architecture, and dataset size. Understanding this relationship will provide insights into how large the dataset needs to be to achieve optimal performance, and whether further increases in dataset size will continue to yield improvements.

Conclusion

This study demonstrated the effectiveness of self-supervised learning for classifying hyperscanning EEG data in distinguishing autistic from neurotypical individuals. The SSL-based multi-brain model outperformed supervised and traditional machine learning baselines, highlighting its strength in extracting meaningful patterns from limited labeled data. These findings underscore SSL’s potential to enhance EEG-based diagnostic tools for autism spectrum conditions, offering objective, precise assessments that complement existing approaches. More broadly, this work advances social neuroscience by modeling brain-to-brain dynamics and supports precision psychiatry through scalable, personalized methods that pave the way for innovative diagnostic and therapeutic solutions.

Data Availability

The code supporting the results of this manuscript is available upon reasonable request from the corresponding author. Supplementary material (ESM_1.csv, ESM_2.csv, ESM_3.csv, ESM_4.csv) provides supporting information on sensor mappings, spectral biomarker definitions, and detailed architectural and training specifications for the self-supervised encoder and downstream classifier. The full EEG hyperscanning dataset generated for this study cannot be publicly shared due to participant privacy considerations. All participants were volunteers and provided written informed consent in accordance with the Declaration of Helsinki. The experimental protocol was approved by the institutional ethics review board for biomedical research at the Hospital (approval #104-10). The HBN dataset analyzed during this study is publicly available at: https://fcon_1000.projects.nitrc.org/indi/cmi_healthy_brain_network/.

References

Akiba, T., Sano, S., Yanase, T., Ohta, T., & Koyama, M. (2019). Optuna: A next-generation hyperparameter optimization framework. arXiv. https://doi.org/10.48550/arXiv.1907.10902

Alexander, L. M., Escalera, J., Ai, L., Andreotti, C., Febre, K., Mangone, A., Vega-Potler, N., Langer, N., Alexander, A., Kovacs, M., Litke, S., O’Hagan, B., Andersen, J., Bronstein, B., Bui, A., Bushey, M., Butler, H., Castagna, V., Camacho, N., & Milham, M. P. (2017). An open resource for transdiagnostic research in pediatric mental health and learning disorders. Scientific Data, 4(1), Article 170181. https://doi.org/10.1038/sdata.2017.181

Ashwood, K. L., Gillan, N., Horder, J., Hayward, H., Woodhouse, E., McEwen, F. S., Findon, J., Eklund, H., Spain, D., Wilson, C. E., Cadman, T., Young, S., Stoencheva, V., Murphy, C. M., Robertson, D., Charman, T., Bolton, P., Glaser, K., Asherson, P., & Murphy, D. G. (2016). Predicting the diagnosis of autism in adults using the Autism-Spectrum quotient (AQ) questionnaire. Psychological Medicine, 46(12), 2595–2604. https://doi.org/10.1017/S0033291716001082

Ayrolles, A., Brun, F., Chen, P., Djalovski, A., Beauxis, Y., Delorme, R., Bourgeron, T., Dikker, S., & Dumas, G. (2021). HyPyP: A hyperscanning python pipeline for inter-brain connectivity analysis. Social Cognitive and Affective Neuroscience, 16(1–2), 72–83. https://doi.org/10.1093/scan/nsaa141

Banville, H., Chehab, O., Hyvärinen, A., Engemann, D.-A., & Gramfort, A. (2021). Uncovering the structure of clinical EEG signals with self-supervised learning. Journal of Neural Engineering. https://doi.org/10.1088/1741-2552/abca18

Bolis, D., Dumas, G., & Schilbach, L. (2022). Interpersonal attunement in social interactions: From collective psychophysiology to inter-personalized psychiatry and beyond. Philosophical Transactions of the Royal Society B: Biological Sciences, 378(1870), Article 20210365. https://doi.org/10.1098/rstb.2021.0365

Bottema-Beutel, K., Kim, S. Y., & Crowley, S. (2019). A systematic review and meta-regression analysis of social functioning correlates in autism and typical development. Autism Research, 12(2), 152–175. https://doi.org/10.1002/aur.2055

Bouallegue, G., Djemal, R., Alshebeili, S. A., & Aldhalaan, H. (2020). A dynamic filtering DF-RNN deep-learning-based approach for EEG-based neurological disorders diagnosis. IEEE Access, 8, 206992–207007. https://doi.org/10.1109/ACCESS.2020.3037995

Bishop D. V. (2013). Cerebral asymmetry and language development: cause, correlate, or consequence?. Science, 340(6138), 1230531. https://doi.org/10.1126/science.1230531

Brihadiswaran, G., Haputhanthri, D., Gunathilaka, S., Meedeniya, D., & Jayarathna, S. (2019). EEG-based processing and classification methodologies for autism spectrum disorder: A review. Journal of Computer Science, 15(8), 1161–1183. https://doi.org/10.3844/jcssp.2019.1161.1183

Brookshire, G., Kasper, J., Blauch, N. M., Wu, Y. C., Glatt, R., Merrill, D. A., Gerrol, S., Yoder, K. J., Quirk, C., & Lucero, C. (2024). Data leakage in deep learning studies of translational EEG. Frontiers in Neuroscience, 18, 1373515. https://doi.org/10.3389/fnins.2024.1373515

Chen, T., Kornblith, S., Norouzi, M., & Hinton, G. (2020). A simple framework for contrastive learning of visual representations. In Proceedings of the 37th International Conference on Machine Learning (Vol. 119, pp. 1597–1607). https://arxiv.org/abs/2002.05709

Cohen, M. X. (2017). Where does EEG come from and what does it mean? Trends in Neurosciences, 40(4), 208–218. https://doi.org/10.1016/j.tins.2017.02.004

Conner, C. M., Cramer, R. D., & McGonigle, J. J. (2019). Examining the diagnostic validity of autism measures among adults in an outpatient clinic sample. Autism in Adulthood, 1(1), 60–68. https://doi.org/10.1089/aut.2018.0023

Crane, L., Batty, R., Adeyinka, H., Goddard, L., Henry, L. A., & Hill, E. L. (2018). Autism diagnosis in the United Kingdom: Perspectives of autistic adults, parents and professionals. Journal of Autism and Developmental Disorders, 48(11), 3761–3772. https://doi.org/10.1007/s10803-018-3639-1

Cui, X., Bryant, D. M., & Reiss, A. L. (2012). NIRS-based hyperscanning reveals increased interpersonal coherence in superior frontal cortex during cooperation. NeuroImage, 59(3), 2430–2437. https://doi.org/10.1016/j.neuroimage.2011.09.003

Dawson, G., Finley, C., Phillips, S., & Lewy, A. (1989). A comparison of hemispheric asymmetries in speech-related brain potentials of autistic and dysphasic children. Brain and Language, 37(1), 26–41. https://doi.org/10.1016/0093-934x(89)90099-0

Del Pup, F., Zanola, A., Tshimanga, L. F., Bertoldo, A., Finos, L., & Atzori, M. (2025). The role of data partitioning on the performance of EEG-based deep learning models in supervised cross-subject analysis: A preliminary study. Computers in Biology and Medicine, 196, 110608. https://doi.org/10.1016/j.compbiomed.2025.110608

Devlin, J., Chang, M. W., Lee, K., & Toutanova, K. (2019). BERT: Pre-training of deep bidirectional transformers for language understanding. In Proceedings of the 2019 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies (pp. 4171–4186). https://aclanthology.org/N19-1423/

Dong, H., Chen, D., Zhang, L., Hengjin, ke, & Li, X. (2021). Subject Sensitive EEG Discrimination with Fast Reconstructable CNN Driven by Reinforcement Learning: A Case Study of ASD Evaluation. Neurocomputing, 449. https://doi.org/10.1016/j.neucom.2021.04.009

Dumas, G. (2022). From inter-brain connectivity to inter-personal psychiatry. World Psychiatry, 21(2), 214–215. https://doi.org/10.1002/wps.20987

Dumas, G., Nadel, J., Soussignan, R., Martinerie, J., & Garnero, L. (2010). Inter-brain synchronization during social interaction. PLoS One, 5(8), e12166. https://doi.org/10.1371/journal.pone.0012166

Dumas, G., Soussignan, R., Hugueville, L., Martinerie, J., & Nadel, J. (2014). Revisiting mu suppression in autism spectrum disorder. Brain Research, 1585, 108–119. https://doi.org/10.1016/j.brainres.2014.08.035

Dvorak, D., Shang, A., Abdel-Baki, S., Suzuki, W., & Fenton, A. A. (2018). Cognitive behavior classification from scalp EEG signals. IEEE Transactions on Neural Systems and Rehabilitation Engineering, 26(4), 729–739. https://doi.org/10.1109/TNSRE.2018.2797547

Ecker, C., Bookheimer, S. Y., & Murphy, D. G. M. (2015). Neuroimaging in autism spectrum disorder: Brain structure and function across the lifespan. The Lancet Neurology, 14(11), 1121–1134. https://doi.org/10.1016/S1474-4422(15)00050-2

Engemann, D. A., Raimondo, F., King, J. R., Rohaut, B., Louppe, G., Faugeras, F., Annen, J., Cassol, H., Gosseries, O., Fernandez-Slezak, D., Laureys, S., Naccache, L., Dehaene, S., & Sitt, J. D. (2018). Robust EEG-based cross-site and cross-protocol classification of States of consciousness. Brain, 141(11), 3179–3192. https://doi.org/10.1093/brain/awy251

Fitzgerald, P. J., & Watson, B. O. (2018). Gamma oscillations as a biomarker for major depression: An emerging topic. Translational Psychiatry, 8(1), 1–11. https://www.nature.com/articles/s41398-018-0239-y

Fraschini, M., Demuru, M., Crobe, A., Marrosu, F., Stam, C. J., & Hillebrand, A. (2016). The effect of epoch length on estimated EEG functional connectivity and brain network organisation. Journal of Neural Engineering, 13(3), 036015. https://doi.org/10.1088/1741-2560/13/3/036015

Gemein, L. A. W., Schirrmeister, R. T., Chrabąszcz, P., Wilson, D., Boedecker, J., Schulze-Bonhage, A., Hutter, F., & Ball, T. (2020). Machine-learning-based diagnostics of EEG pathology. Neuroimage, 220, 117021. https://doi.org/10.1016/j.neuroimage.2020.117021

Goodfellow, I., Bengio, Y., & Courville, A. (2016). Deep learning. MIT Press. https://www.deeplearningbook.org/

Gurau, O., Bosl, W. J., & Newton, C. R. (2017). How useful is electroencephalography in the diagnosis of autism spectrum disorders and the delineation of subtypes: A systematic review. Frontiers in Psychiatry, 8, 121. https://doi.org/10.3389/fpsyt.2017.00121

He, K., Fan, H., Wu, Y., Xie, S., & Girshick, R. (2020). Momentum contrast for unsupervised visual representation learning. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (pp. 9729–9738). https://arxiv.org/abs/1911.05722

Hirsch, J., Adam Noah, J., Zhang, X., Dravida, S., & Ono, Y. (2018). A cross-brain neural mechanism for human-to-human verbal communication. Social Cognitive and Affective Neuroscience, 13(9), 907–920. https://doi.org/10.1093/scan/nsy070

Hirsch, J., Zhang, X., Noah, J. A., & Ono, Y. (2017). Frontal Temporal and parietal systems synchronize within and across brains during live eye-to-eye contact. Neuroimage, 157, 314–330. https://doi.org/10.1016/j.neuroimage.2017.06.018

Hodges, H., Fealko, C., & Soares, N. (2020). Autism spectrum disorder: Definition, epidemiology, causes, and clinical evaluation. Translational Pediatrics, 9(Suppl 1), S55–S65. https://doi.org/10.21037/tp.2019.09.09

Hornung, T., Chan, W. H., Müller, R. A., Townsend, J., & Keehn, B. (2019). Dopaminergic hypo-activity and reduced theta-band power in autism spectrum disorder: A resting-state EEG study. International Journal of Psychophysiology, 146, 101–106. https://doi.org/10.1016/j.ijpsycho.2019.08.012

Hull, J. V., Dokovna, L. B., Jacokes, Z. J., Torgerson, C. M., Irimia, A., Van Horn, J. D., the GENDAAR Research Consortium. (2018). Corrigendum: Resting-state functional connectivity in autism spectrum disorders: A review. Frontiers in Psychiatry. https://doi.org/10.3389/fpsyt.2018.00268

Jas, M., Engemann, D. A., Bekhti, Y., Raimondo, F., & Gramfort, A. (2017). Autoreject: Automated artifact rejection for MEG and EEG data. NeuroImage, 159, 417–429. https://doi.org/10.1016/j.neuroimage.2017.06.030

Jing, L., & Tian, Y. (2019). Self-supervised visual feature learning with deep neural networks: A survey. arXiv

Jones, S. L., Johnson, M., Alty, B., & Adamou, M. (2021). The effectiveness of RAADS-R as a screening tool for adult ASD populations. Autism Research and Treatment, 2021(1), Article 9974791. https://doi.org/10.1155/2021/9974791

Key, A. P., Yan, Y., Metelko, M., Chang, C., Kang, H., Pilkington, J., & Corbett, B. A. (2022). Greater social competence is associated with higher interpersonal neural synchrony in adolescents with autism. Frontiers in Human Neuroscience. https://doi.org/10.3389/fnhum.2021.790085

Khodatars, M., Shoeibi, A., Sadeghi, D., Ghaasemi, N., Jafari, M., Moridian, P., Khadem, A., Alizadehsani, R., Zare, A., Kong, Y., Khosravi, A., Nahavandi, S., Hussain, S., Acharya, U. R., & Berk, M. (2021). Deep learning for neuroimaging-based diagnosis and rehabilitation of autism spectrum disorder: A review. Computers in Biology and Medicine, 139, 104949. https://doi.org/10.1016/j.compbiomed.2021.104949

Kruppa, J. A., Reindl, V., Gerloff, C., Oberwelland Weiss, E., Prinz, J., Herpertz-Dahlmann, B., Konrad, K., & Schulte-Rüther, M. (2021). Brain and motor synchrony in children and adolescents with ASD—a fNIRS hyperscanning study. Social Cognitive and Affective Neuroscience, 16(1–2), 103–116. https://doi.org/10.1093/scan/nsaa092

Lawhern, V. J., Solon, A. J., Waytowich, N. R., Gordon, S. M., Hung, C. P., & Lance, B. J. (2018). EEGNet: A compact convolutional neural network for EEG-based brain–computer interfaces. Journal of Neural Engineering, 15(5), 056013. https://doi.org/10.1088/1741-2552/aace8c

Leong, V., Byrne, E., Clackson, K., Georgieva, S., Lam, S., & Wass, S. (2017). Speaker gaze increases information coupling between infant and adult brains. Proceedings of the National Academy of Sciences of the United States of America, 114(50), 13290–13295. https://doi.org/10.1073/pnas.1702493114

Li, X. J., Dao, P. T., & Griffin, A. (2018). Effect of Epoch Length on Compressed Sensing of EEG. Annual International Conference of the IEEE Engineering in Medicine and Biology Society. IEEE Engineering in Medicine and Biology Society. Annual International Conference, 2018, (pp. 1–4). https://doi.org/10.1109/EMBC.2018.8513085

Liu, D., Liu, S., Liu, X., Zhang, C., Li, A., Jin, C., Chen, Y., Wang, H., & Zhang, X. (2018). Interactive brain activity: Review and progress on EEG-based hyperscanning in social interactions. Frontiers in Psychology. https://doi.org/10.3389/fpsyg.2018.01862

Liu, X., Zhang, F., Hou, Z., Mian, L., Wang, Z., Zhang, J., & Tang, J. (2021). Self-supervised learning: Generative or contrastive. IEEE Transactions on Knowledge and Data Engineering (pp. 1–1). https://doi.org/10.1109/TKDE.2021.3090866

Lord, C., Brugha, T. S., Charman, T., Cusack, J., Dumas, G., Frazier, T., Jones, E. J. H., Jones, R. M., Pickles, A., State, M. W., Taylor, J. L., & Veenstra-VanderWeele, J. (2020). Autism spectrum disorder. Nature Reviews Disease Primers, 6(1), 5. https://doi.org/10.1038/s41572-019-0138-4

Lotte, F., Bougrain, L., Cichocki, A., Clerc, M., Congedo, M., Rakotomamonjy, A., & Yger, F. (2018). A review of classification algorithms for EEG-based brain-computer interfaces: A 10 year update. Journal of Neural Engineering, 15(3), 031005. https://doi.org/10.1088/1741-2552/aab2f2

Matusz, P. J., Dikker, S., Huth, A. G., & Perrodin, C. (2019). Are we ready for real-world neuroscience? Journal of Cognitive Neuroscience, 31(3), 327–338. https://doi.org/10.1162/jocn_e_01276

McKenzie, K., Forsyth, K., O'Hare, A., McClure, I., Rutherford, M., Murray, A., & Irvine, L. (2015). Factors influencing waiting times for diagnosis of Autism Spectrum Disorder in children and adults. Research in Developmental Disabilities, 45-46, 300–306. https://doi.org/10.1016/j.ridd.2015.07.033

McMenamin, B. W., Shackman, A. J., Maxwell, J. S., Bachhuber, D. R. W., Koppenhaver, A. M., & Davidson, R. J. (2010). Validation of ICA-based myogenic artifact correction for scalp and source-localized EEG. Neuroimage, 49(3), 2416–2432. https://www.sciencedirect.com/science/article/pii/S1053811909010817

Miller, K. J., Hermes, D., & Rao, R. P. N. (2007). Spectral changes in cortical surface potentials during motor movement. Journal of Neuroscience, 27(9), 2424–2432. https://www.jneurosci.org/content/27/9/2424

Milovanovic, M., & Grujicic, R. (2021). Electroencephalography in assessment of autism spectrum disorders: A review. Frontiers in Psychiatry. https://doi.org/10.3389/fpsyt.2021.686021

Montague, P. R., Berns, G. S., Cohen, J. D., McClure, S. M., Pagnoni, G., Dhamala, M., Wiest, M. C., Karpov, I., King, R. D., Apple, N., & Fisher, R. E. (2002). Hyperscanning: Simultaneous fMRI during linked social interactions. Neuroimage, 16(4), 1159–1164. https://doi.org/10.1006/nimg.2002.1150

Moreau, Q., & Dumas, G. (2021). Beyond correlation versus causation: Multi-brain neuroscience needs explanation. Trends in Cognitive Sciences. https://doi.org/10.1016/j.tics.2021.02.011

Moreau, Q., Brun, Florence, Ayrolles, Anaël, Nadel, Jacqueline, & Dumas, G. (2024). Distinct social behavior and inter-brain connectivity in dyads with autistic individuals. Social Neuroscience, 19(2), 124–136. https://doi.org/10.1080/17470919.2024.2379917

Murphy, D. G. M., Beecham, J., Craig, M., & Ecker, C. (2011). Autism in adults. New biologicial findings and their translational implications to the cost of clinical services. Brain Research, 1380, 22–33. https://doi.org/10.1016/j.brainres.2010.10.042

Muthukumaraswamy, S. D. (2013). High-frequency brain activity and muscle artifacts in MEG/EEG: A review and recommendations. Frontiers in Human Neuroscience, 7, 138. https://doi.org/10.3389/fnhum.2013.00138/full. https://www.frontiersin.org/articles/

Nadel, J., & Pezé, A. (2017). What makes immediate imitation communicative in toddlers and autistic children? In J. Nadel & L. Camaioni (Eds.), New perspectives in early communicative development (pp. 139–156). Routledge. https://doi.org/10.4324/9781315111322-9

Nam, C., Choo, S., Huang, J., & Park, J. (2020). Brain-to-Brain neural synchrony during social interactions: A systematic review on hyperscanning studies. Applied Sciences, 10, 6669. https://doi.org/10.3390/app10196669

Newschaffer, C. J., Croen, L. A., Daniels, J., Giarelli, E., Grether, J. K., Levy, S. E., Mandell, D. S., Miller, L. A., Pinto-Martin, J., Reaven, J., Reynolds, A. M., Rice, C. E., Schendel, D., & Windham, G. C. (2007). The epidemiology of autism spectrum disorders. Annual Review of Public Health, 28, 235–258. https://doi.org/10.1146/annurev.publhealth.28.021406.144007

Nielsen, J. A., Zielinski, B. A., Fletcher, P. T. et al. (2014) Abnormal lateralization of functional connectivity between language and default mode regions in autism. Molecular Autism, 5, 8. https://doi.org/10.1186/2040-2392-5-8

Novembre, G., & Iannetti, G. D. (2021). Hyperscanning alone cannot prove causality. Multibrain stimulation can. Trends in Cognitive Sciences, 25(2), 96–99. https://doi.org/10.1016/j.tics.2020.11.003

Oord, Avanden, Li, Y., & Vinyals, O. (2019). Representation learning with contrastive predictive coding. arXiv. https://doi.org/10.48550/arXiv.1807.03748

Pion-Tonachini, L., Kreutz-Delgado, K., & Makeig, S. (2019). ICLabel: An automated electroencephalographic independent component classifier, dataset, and website. NeuroImage, 198, 181–197. https://doi.org/10.1016/j.neuroimage.2019.05.026

Ranjani, M., & Supraja, P. (2021). Classifying the autism and epilepsy disorder based on EEG signal using deep convolutional neural network (DCNN). 2021 International Conference on Advance Computing and Innovative Technologies in Engineering (ICACITE), (pp. 880-886). https://doi.org/10.1109/ICACITE51222.2021.9404634

Rojas, D. C., & Wilson, L. B. (2014). γ-band abnormalities as markers of autism spectrum disorders. Biomarkers in Medicine, 8(3), 353–368. https://doi.org/10.2217/bmm.14.15

Roy, Y., Banville, H., Albuquerque, I., Gramfort, A., Falk, T. H., & Faubert, J. (2019). Deep learning-based electroencephalography analysis: A systematic review. Journal of Neural Engineering, 16(5), 051001. https://doi.org/10.1088/1741-2552/ab260c

Schirrmeister, R. T., Springenberg, J. T., Fiederer, L. D. J., Glasstetter, M., Eggensperger, K., Tangermann, M., Hutter, F., Burgard, W., & Ball, T. (2017). Deep learning with convolutional neural networks for EEG decoding and visualization. Human Brain Mapping, 38(11), 5391–5420. https://doi.org/10.1002/hbm.23730