Abstract

Urban planning increasingly requires multi-source geospatial intelligence to address the complexity of modern cities. This study introduces a unified deep learning framework integrating OpenStreetMap (OSM) data with multispectral satellite imagery and demographic-environmental datasets. The framework employs task-specific architectures, including Convolutional Neural Networks (CNNs) for land-use classification, U-Net segmentation for building footprint extraction, Long Short-Term Memory (LSTM) networks for traffic flow prediction, and a hybrid CNN-RNN model for air-quality forecasting. The land-use model achieved 91.6% accuracy, the U-Net building footprint extractor reached 94.0% accuracy, the LSTM traffic model obtained an RMSE of 3.6 vehicles per hour, and the hybrid air-quality model achieved an RMSE of 2.3 µg/m³. These metrics reflect performance on OSM-aligned datasets specifically curated for Krasnodar. The novelty of this work lies in establishing a multi-task, multi-source analytical workflow that unifies OSM, satellite, and temporal environmental data within a coherent deep learning system. The resulting framework provides urban planners with a scalable and low-cost approach for generating high-resolution urban intelligence to support sustainable city management.

Introduction

Urban planning, a multidisciplinary field aimed at designing and managing the physical and spatial development of cities, is vital for addressing challenges posed by rapid urbanization, environmental degradation, and infrastructural strain1,2,3,4. Krasnodar, a rapidly expanding Russian city, epitomizes these challenges with its growing population, evolving infrastructure demands, and increasing environmental pressures. Traditional approaches to urban planning often fall short due to outdated data, limited granularity, and static methods of analysis5,6,7,8. This underscores the necessity of incorporating innovative, data-driven techniques.

OSM is a widely recognized source of freely accessible geospatial data. However, its application in urban planning is hindered by issues such as data quality variability and incomplete coverage in less populated areas. The term “precision” in this context refers to the accuracy and reliability of OSM data when juxtaposed with official datasets or high-resolution satellite imagery. Furthermore, the “use of data” emphasizes the capability of OSM to support actionable insights for urban planning tasks like land use classification, traffic management, and environmental monitoring.

The integration of OSM with deep learning models offers untapped potential to overcome these limitations9,10,11,12. Deep learning techniques, particularly CNNs and Recurrent Neural Networks (RNNs), excel at handling complex data patterns, enabling precise analysis and predictions13,14. For Krasnodar, these tools can transform raw geospatial data into actionable insights, addressing specific urban issues such as traffic congestion hotspots, inadequately mapped green spaces, and areas requiring infrastructure enhancement. By linking OSM-derived spatial structure and deep-learning-based predictive outputs to foundational principles of urban planning such as accessibility, spatial equity, and resilience, the proposed framework provides actionable analytical insights directly aligned with contemporary planning theory.

To orient the reader, the proposed methodological workflow consists of four sequential stages: multi-source data acquisition, geospatial preprocessing and harmonization, task-specific deep learning modeling, and integrated interpretation of structural, functional, mobility, and environmental urban patterns. This overview provides a conceptual roadmap that guides the transition from raw geospatial inputs to multi-domain analytical outputs.

Existing literature demonstrates the utility of OSM and deep learning independently in various domains of urban planning, but studies focusing on their combined application remain scarce. While Haklay15 has assessed OSM data’s accuracy in urban settings, and researchers like Wu et al.14 have applied deep learning for urban scene recognition, the synergy between these tools, especially in a rapidly urbanizing city like Krasnodar, remains underexplored. The lack of comprehensive studies integrating OSM with advanced deep learning to tackle specific urban challenges necessitates this research.

The novelty of this study lies in the development of a unified multi-task deep learning framework that integrates OSM vector data, multispectral satellite imagery, and temporal environmental records into a single analytical pipeline. Unlike existing studies that address individual tasks such as road extraction, building segmentation, or land-use mapping in isolation, our approach provides a coordinated system capable of simultaneously analyzing structural, functional, mobility, and environmental dimensions of the urban environment. This multi-layered integration represents a significant methodological advancement beyond conventional single-domain geospatial deep learning applications.

This study aims to: (1) evaluate the accuracy and usability of OSM data for urban planning in Krasnodar, addressing gaps in data quality and coverage; (2) develop and implement deep learning models to process OSM and supplementary datasets for tasks like land use classification, building footprint extraction, and traffic flow prediction; (3) provide data-driven recommendations for urban planners to enhance Krasnodar’s sustainability and infrastructure management.

By addressing these objectives, this research contributes to advancing data-driven urban planning methodologies, fostering sustainable urban development practices tailored to the unique context of Krasnodar.

To address the limitations of existing studies that typically examine isolated urban planning tasks, this research introduces a unified multi-task analytical framework that simultaneously integrates OpenStreetMap data, Sentinel-2 imagery, and demographic and environmental datasets. Unlike prior works that apply deep learning models independently to single domains such as traffic, land use, or environmental monitoring, the proposed approach establishes a scalable and transferable pipeline capable of producing consistent, multi-layered urban intelligence from heterogeneous data sources. This integrated methodology represents a methodological and technical innovation by enabling coordinated land-use mapping, building footprint extraction, traffic forecasting, and air-quality estimation within a single, harmonized deep learning architecture, thereby filling a critical gap in current GeoAI and urban analytics literature.

Literature review

Urban planning is inherently complex, requiring tools that can analyze and interpret dynamic, high-dimensional data16. Traditional urban planning methodologies, relying heavily on GIS systems and static datasets, often fail to capture the multifaceted challenges of urban development in real time17. OSM data has emerged as a valuable resource for urban planning, given its accessibility and community-driven updates18,19,20. However, significant limitations in OSM data, such as varying quality across regions, incomplete datasets in less populated areas, and inaccuracies in building footprints and road networks, limit its standalone utility for urban planning tasks5,15,21,22. These shortcomings necessitate the integration of complementary datasets and advanced analytical methods.

Recent studies have introduced refined methods for evaluating OpenStreetMap completeness and spatial accuracy, offering improved frameworks for detecting missing roads, misaligned building footprints, and inconsistent semantic attributes23. These advancements demonstrate that OSM data varies widely across urban regions and underscore the need for integrating volunteered geographic information with supplementary geospatial datasets in urban analytics.

Deep learning methods for urban feature delineation have advanced rapidly, with modern U-Net variants and multisource fusion models achieving high performance in building footprint extraction and land-use classification24. These approaches demonstrate that combining OSM vectors with satellite imagery yields higher semantic accuracy compared to models relying on single-source data.

The use of deep learning in urban studies has shown significant promise, particularly for tasks that require high accuracy and automation, such as land-use classification, traffic flow prediction, and building footprint extraction25,26,27. Deep learning models, such as CNNs and RNNs, are particularly suited to handling geospatial data due to their ability to capture non-linear patterns and process high-resolution imagery28. Unlike traditional machine learning methods, deep learning offers scalability and adaptability to evolving urban scenarios. For instance, CNNs have demonstrated superior performance in extracting building footprints from satellite imagery, achieving higher accuracy and Intersection over Union (IoU) scores compared to manual mapping or classical GIS approaches26,29.

Recent research has substantially advanced OSM-DL integration across multiple urban analytics domains, including OSM data completeness and quality assessment, building footprint extraction using U-Net and transformer architectures, land-use and land-cover mapping through OSM remote-sensing fusion, traffic flow prediction based on spatio-temporal deep learning models, and air-quality inference using hybrid CNN-RNN frameworks30,31,32,33. Typical performance metrics reported in these studies include mIoU and IoU for segmentation, F1-score and Cohen’s κ for land-use classification, RMSE/MAE/MAPE for traffic prediction, and RMSE/MAE/R² for air-quality estimation. The results obtained in the present study fall within the performance range of these state-of-the-art GeoAI models, demonstrating that the proposed multi-task framework performs competitively relative to contemporary benchmarks.

Although recent works have tackled individual tasks such as road extraction, land-use classification, or building segmentation, the literature still lacks a unified multi-task framework that integrates multiple deep learning models with OSM data for comprehensive urban environment assessment34. This gap motivates the development of our multi-layered framework addressing structural, functional, mobility, and environmental dimensions simultaneously.

Despite the proven utility of deep learning and the availability of OSM data, the integration of these tools remains underexplored in urban planning research. Most existing studies focus on specific applications, such as flood mapping35,36 or traffic flow prediction37, without addressing broader urban challenges or demonstrating the scalability of these methods in diverse urban environments. Furthermore, few studies have investigated the potential of deep learning models to improve the data accuracy and reliability of OSM data for urban planning tasks in rapidly growing cities like Krasnodar38. This represents a critical research gap, as cities with complex urban dynamics require real-time, accurate, and scalable solutions to manage infrastructure development, environmental monitoring, and traffic optimization.

Moreover, the geographical context of Krasnodar highlights the need for innovative approaches. As a rapidly urbanizing city with over 900,000 inhabitants, Krasnodar faces significant challenges, including traffic congestion, inadequate green spaces, and evolving infrastructure demands39. The variability in OSM data quality across different regions of the city further complicates urban planning efforts. Addressing these challenges requires a comprehensive integration of OSM data with advanced deep-learning models, capable of providing actionable insights for city planners.

By addressing these limitations, this study contributes to the growing body of research on data-driven urban planning. It not only demonstrates the feasibility of integrating OSM and deep learning but also highlights the necessity of such approaches for cities like Krasnodar. Through the development of novel methodologies and a comprehensive analysis of urban planning challenges, this research aims to bridge the gap between theoretical advancements and practical applications in urban development.

Methodology

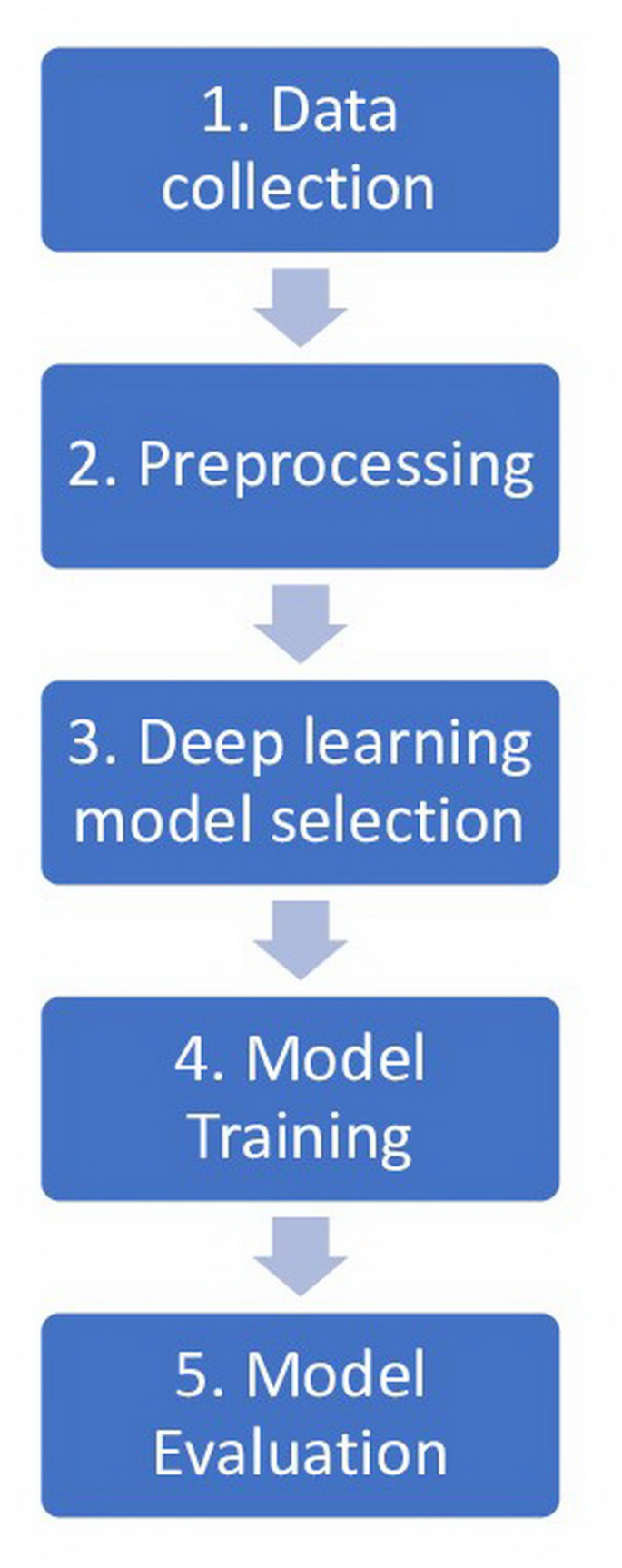

The deep learning method used in the paper integrates OSM data with deep learning models to address urban planning challenges in Krasnodar. Figure 1 shows the key steps involved in the method.

Figure 1. Key steps of the deep learning method.

As illustrated in Fig. 1, the analytical workflow is designed as a sequential yet interconnected system. The data acquisition module collects OSM vector layers, multispectral satellite tiles, demographic grids, and environmental records. The preprocessing module standardizes coordinate reference systems, harmonizes spatial resolutions, constructs training tiles, and performs label alignment. The modeling module applies task-specific architectures: U-Net for footprint extraction, CNN classifiers for land-use mapping, LSTM networks for temporal prediction, and hybrid CNN-RNN models for spatio-temporal estimation, each producing structured outputs. Finally, the integration module consolidates these outputs into multi-dimensional urban indicators, enabling a comprehensive interpretation of the city’s physical, functional, mobility, and environmental attributes. This expanded interpretation clarifies the technical depth and coherence of the framework beyond the simplified flowchart.

Each stage of the workflow summarized in Fig. 1 incorporates specific parameters required for reproducible geospatial deep-learning analysis. All datasets were reprojected into the WGS 84 / UTM zone 37N projected coordinate reference system (EPSG:32637). Sentinel-2 tiles and OSM-derived raster layers were converted into 256 × 256-pixel patches at 10-m resolution. Land-use segmentation used a 5-class schema, and building-footprint extraction employed a binary mask. All datasets were divided into 70% training, 15% validation, and 15% testing subsets. U-Net models were trained with Adam (learning rate 1e-4) using weighted cross-entropy loss, while traffic and air-quality models used Adam (1e-3) with MAE or RMSE loss functions. Evaluation metrics included Accuracy, Precision, Recall, F1-score, and IoU/mIoU for segmentation tasks, and RMSE/MAE for regression tasks.
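As a brief illustration of the regression metrics mentioned above, the following sketch computes RMSE and MAE for a pair of prediction and observation sequences. It is a minimal example in plain Python; the function names and the sample values are illustrative assumptions and do not come from the study's code or data.

```python
import math

def rmse(predicted, observed):
    """Root mean squared error between two equal-length sequences."""
    squared = [(p - o) ** 2 for p, o in zip(predicted, observed)]
    return math.sqrt(sum(squared) / len(squared))

def mae(predicted, observed):
    """Mean absolute error between two equal-length sequences."""
    return sum(abs(p - o) for p, o in zip(predicted, observed)) / len(predicted)

# Hypothetical values, for illustration only.
example_rmse = rmse([2.0, 4.0], [1.0, 6.0])
example_mae = mae([2.0, 4.0], [1.0, 6.0])
```

RMSE penalizes large deviations more strongly than MAE, which is one reason both are commonly reported together for traffic and air-quality forecasts.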

1. Data collection. Krasnodar, situated in southern Russia, is among the fastest-developing cities in the nation. With over 900,000 inhabitants, the city faces substantial urban planning obstacles, including increasing urbanization, infrastructure expansion, and environmental conservation. Its varied urban fabric, which includes residential neighborhoods, business zones, and parks, offers an excellent opportunity to investigate the fusion of OSM data with deep learning technology. Krasnodar has a well-developed street network with over 1,400 streets and avenues. Its urban layout includes a mix of wide, multi-lane roads, such as the main thoroughfare, Krasnaya Street, and smaller, more congested streets. The city's central area follows a grid pattern, making navigation relatively straightforward, though peripheral areas may have more complex layouts. According to recent estimates, there are over 400,000 registered vehicles in the city, which contributes to significant traffic congestion, especially during the morning and evening rush hours. The city's infrastructure struggles to accommodate the growing number of vehicles. Key intersections and the main streets, including Krasnaya and North Caucasian, are often bottleneck points. Factors like narrow streets in the older parts of the city and ongoing construction projects further exacerbate the traffic situation. The city also experiences heavy vehicle congestion on routes leading to nearby Black Sea resorts, especially in the summer months, when tourism peaks. Krasnodar has a well-established public transportation system, including buses, trams, and trolleybuses, which many residents use to navigate the city and avoid traffic delays.

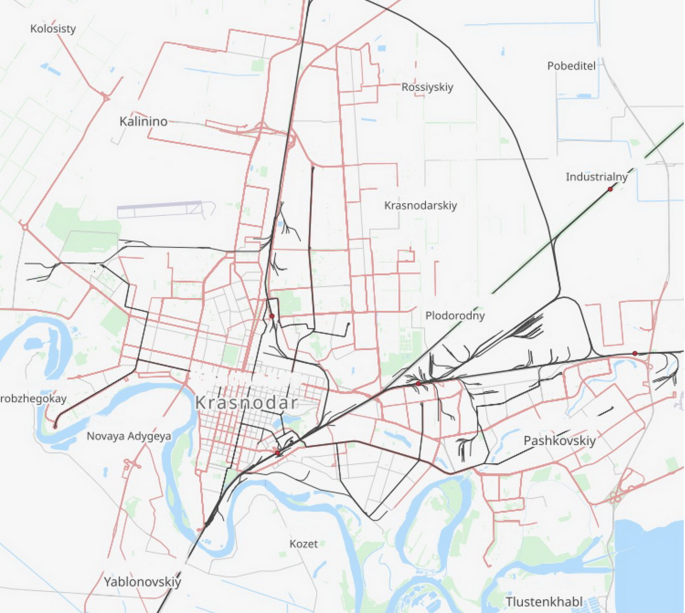

Figure 2 displays the Krasnodar map acquired from OSM.

Figure 2. The map of Krasnodar.

The primary source of geographic data for this study is OSM (https://www.openstreetmap.org/). OSM data for Krasnodar was downloaded using the Overpass API, which allows for the extraction of specific geographic features. The dataset includes information on roads, buildings, land use, and points of interest (POIs).
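A minimal sketch of how such an extraction request could be assembled is shown below. It only builds an Overpass QL query string and performs no network call. The bounding box, the list of tag keys, and the function name are illustrative assumptions; the study does not report the exact query it used.

```python
# Illustrative bounding box (south, west, north, east) roughly covering Krasnodar.
# These coordinates are an assumption, not values reported in the study.
KRASNODAR_BBOX = (44.95, 38.85, 45.15, 39.15)

def build_overpass_query(bbox, keys=("highway", "building", "landuse", "amenity"),
                         timeout=180):
    """Return an Overpass QL query selecting features that carry any of `keys`."""
    south, west, north, east = bbox
    box = f"({south},{west},{north},{east})"
    # `nwr` matches nodes, ways, and relations in a single statement.
    selectors = "\n".join(f'  nwr["{key}"]{box};' for key in keys)
    # `out geom;` asks the server to return full geometries with each element.
    return f"[out:json][timeout:{timeout}];\n(\n{selectors}\n);\nout geom;"

query = build_overpass_query(KRASNODAR_BBOX)
```

The resulting string can be posted to any Overpass API endpoint. Using `amenity` as a stand-in for points of interest is a simplification, since POIs in OSM are spread over several tag keys.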

All datasets used in this study were associated with explicit temporal ranges and version identifiers to ensure reproducibility. OSM data were downloaded through the Overpass API on 14 March 2024 at 12:00 UTC, corresponding to the 2024-03-14 planet replication state. Sentinel-2 Level-2A imagery was obtained for Tile ID T37UCA with acquisition dates between 2023-04-01 and 2023-10-30, cloud cover below 10%, and a ground sampling distance of 10 m for bands B2, B3, B4, and B8 (20-m bands were resampled to 10 m). Demographic data corresponded to the 2023 reference year at 100-m spatial resolution, while environmental datasets (air quality and meteorology) covered the period January–December 2023 with hourly temporal resolution. These temporal and version-specific parameters were consistently applied across all modeling tasks.

To ensure that training samples were spatially representative and free from geographic bias, all urban tiles were generated using a stratified spatial sampling strategy rather than uniform random sampling. The city of Krasnodar was first subdivided into land-use strata derived from OSM polygons (residential, commercial-industrial, mixed-use, green/recreational, and transport-dominated areas). Within each stratum, Sentinel-2 and OSM-aligned 256 × 256-pixel patches were extracted using proportional allocation, ensuring that dense central districts, peripheral residential zones, and sparsely mapped industrial corridors were equally represented. Additionally, a minimum-distance constraint of 300 m between sampled tile centroids was applied to avoid oversampling of adjacent imagery and to reduce spatial autocorrelation. This stratified design guarantees that model training does not disproportionately learn from specific neighborhoods and that patterns relevant to heterogeneous urban morphologies are captured consistently across the entire metropolitan area.
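The sampling logic described above can be sketched as follows, assuming tile centroids are given as projected (x, y) coordinates in metres. The greedy rejection strategy, the function names, and the example stratum sizes are assumptions made for illustration; the study does not specify how the minimum-distance constraint was enforced.

```python
import math
import random

def proportional_allocation(stratum_sizes, n_total):
    """Split n_total samples across strata in proportion to stratum size.

    Rounding means the counts may not sum exactly to n_total.
    """
    total = sum(stratum_sizes.values())
    return {name: round(n_total * size / total)
            for name, size in stratum_sizes.items()}

def sample_with_min_distance(candidates, n, min_dist_m=300.0, seed=0):
    """Greedily pick up to n centroids that are at least min_dist_m apart."""
    rng = random.Random(seed)
    shuffled = list(candidates)
    rng.shuffle(shuffled)
    chosen = []
    for point in shuffled:
        # Accept a candidate only if it respects the spacing to all accepted points.
        if all(math.dist(point, other) >= min_dist_m for other in chosen):
            chosen.append(point)
        if len(chosen) == n:
            break
    return chosen

# Hypothetical stratum sizes (number of candidate tiles per stratum).
allocation = proportional_allocation({"residential": 50, "green": 30, "transport": 20}, 10)
```

In practice the second function would be called once per stratum with the count returned by the first.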

Following the spatial sampling stage, the generation of reliable supervisory signals required a controlled and internally consistent annotation workflow. Land-use classes and building-footprint masks were produced by two GIS analysts with professional experience in remote-sensing interpretation to ensure domain-appropriate labeling. A custom six-class land-use schema (residential, commercial/industrial, green/recreational zones, transport corridors, mixed-use areas, and water bodies) was adopted to maintain compatibility with local planning conventions and the spectral properties of Sentinel-2 imagery. Building-footprint extraction followed a binary (building/non-building) scheme aligned with OSM geometry. To guarantee label quality and minimize annotation drift, 15% of the samples were independently annotated by both experts; the resulting Cohen’s κ = 0.87 indicates strong agreement and confirms convergent labeling criteria. The remaining samples were annotated individually and subsequently cross-validated, ensuring that all supervisory data used for model training were coherent with the stratified sampling design and reflected the heterogeneity of the city’s built fabric.
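For readers unfamiliar with the agreement statistic reported above, the sketch below computes Cohen's κ for two annotators from their label sequences. The function name and the toy labels are illustrative assumptions and are unrelated to the study's annotation data.

```python
from collections import Counter

def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa: chance-corrected agreement between two annotators."""
    n = len(labels_a)
    # Observed agreement: share of samples with identical labels.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Expected agreement if both annotators labelled independently
    # according to their own class frequencies.
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    return (observed - expected) / (1.0 - expected)

# Toy example with four samples; not data from the study.
kappa = cohens_kappa(["res", "res", "green", "water"],
                     ["res", "green", "green", "water"])
```

A value of 1 indicates perfect agreement, while 0 indicates agreement no better than chance.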

Traffic-flow and air-quality datasets were derived from the municipal monitoring network consisting of 27 traffic sensors and 11 environmental stations distributed across central, residential, industrial, and peripheral road corridors of Krasnodar. Traffic sensors recorded vehicle intensity and speed at 5-minute resolution, whereas air-quality stations provided hourly measurements of PM₂.₅, NO₂, and CO, together with meteorological covariates. Both datasets covered the full 2023 calendar year. For model development, the January-September 2023 period was used for training, October for validation, and November-December for testing, ensuring seasonally diverse and non-overlapping temporal partitions. This configuration ensures that spatio-temporal generalization is evaluated under realistic, heterogeneous operating conditions.
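The chronological partition described above can be expressed as a simple month-based rule. The sketch below is a minimal illustration; the function name and the partition labels are assumptions.

```python
from datetime import datetime

def temporal_partition(timestamp):
    """Assign a 2023 timestamp to 'train', 'val', or 'test' by calendar month."""
    if timestamp.year != 2023:
        raise ValueError("only the 2023 calendar year is covered")
    if timestamp.month <= 9:      # January to September
        return "train"
    if timestamp.month == 10:     # October
        return "val"
    return "test"                 # November and December

split = temporal_partition(datetime(2023, 10, 15, 8, 0))
```

Splitting by time rather than at random keeps future observations out of the training set, which matters for forecasting tasks.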

In addition to OSM data, supplementary datasets were collected to enhance the analysis. These include high-resolution satellite imagery from Sentinel-2 (https://dataspace.copernicus.eu/explore-data/data-collections/sentinel-data/sentinel-2), demographic data from the Russian Federal State Statistics Service (https://eng.rosstat.gov.ru/), and environmental data from the Copernicus Climate Change Service (https://climate.copernicus.eu/).

The overall conceptual framework guiding this study is structured as a multi-layered analytical pipeline in which heterogeneous geospatial inputs: OSM topologies, Sentinel-2 spectral imagery, demographic statistics, and environmental observations are transformed through a unified deep-learning workflow to produce connected assessments of the urban environment. The framework consists of four interdependent modules: (1) spatial feature extraction, where OSM and satellite data are harmonized to derive land-use and building-level structural indicators; (2) functional analysis, where segmentation models classify urban zones and delineate built-up patterns; (3) dynamic modeling, where sequential networks learn temporal traffic and air-quality behaviors; and (4) integrative synthesis, where outputs from all models collectively inform the evaluation of urban structure, mobility, and environmental conditions. This conceptual structure ensures that each analytical stage is logically connected and that the workflow progresses from raw geodata to high-level urban insights in a consistent, theoretically grounded manner.

2. Preprocessing. Effective preprocessing was essential to ensure the quality and compatibility of the datasets used in this study. OSM data were cleaned to address redundant entries, missing attributes, and inconsistent labels, standardized to a uniform coordinate system (EPSG:4326), and refined through conflict resolution and feature engineering to align attributes like road types and land use categories with urban planning standards. High-resolution Sentinel-2 satellite imagery underwent atmospheric correction using the Sen2Cor tool to remove distortions, followed by image augmentation techniques such as rotation and cropping to enhance data diversity. These images were spatially aligned with OSM data using shared landmarks for accurate overlays. Demographic data from the Russian Federal State Statistics Service were cleaned, spatially referenced, and integrated with attributes such as population density, while environmental data from the Copernicus Climate Change Service were interpolated into spatial grids compatible with OSM layers. Finally, all datasets were consolidated into a geodatabase using GIS tools, ensuring consistent feature representation, spatial alignment, and minimal gaps or redundancies for subsequent analysis.

To ensure that all distance- and area-based operations were performed in consistent linear units, all datasets were reprojected into the WGS 84 / UTM zone 37N projected coordinate reference system (EPSG:32637), which is the appropriate UTM zone for the Krasnodar region. All pixel-to-meter conversions, tile generation, buffer operations, and IoU computations for segmentation used this CRS, ensuring metric accuracy and eliminating distortions inherent to geographic latitude-longitude coordinates.
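The choice of EPSG:32637 can be checked with the standard UTM zone rule, as sketched below. The coordinates used for Krasnodar are approximate, and the function name is an assumption; the rule ignores the special zone exceptions around Norway and Svalbard, which do not affect this region.

```python
def utm_epsg_from_lonlat(lon, lat):
    """Return the EPSG code of the WGS 84 / UTM zone containing (lon, lat)."""
    # UTM zones are 6 degrees wide, numbered from 1 starting at 180 degrees west.
    zone = int((lon + 180) // 6) + 1
    # EPSG 326xx covers the northern hemisphere, 327xx the southern.
    return (32600 if lat >= 0 else 32700) + zone

# Approximate city-centre coordinates of Krasnodar (about 45.04 N, 38.97 E).
krasnodar_epsg = utm_epsg_from_lonlat(38.97, 45.04)
```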

To ensure consistent representation of OSM-derived layers, a set of tag-harmonization and topology-validation rules was applied during preprocessing. Highway=* tags were normalized into a hierarchical scheme (motorway, trunk, primary, secondary, tertiary, residential, service) to reduce inconsistencies arising from heterogeneous community tagging. Duplicate geometries were removed using spatial indexing, and fragmented polyline segments were merged when their endpoints fell within a 2–3 m snapping tolerance. Building polygons were simplified only when geometric noise did not exceed a 1 m displacement threshold, and features with areas below 4 m² were discarded as likely annotation artifacts. All vector layers were validated for topological correctness by identifying overlaps, self-intersections, and unclosed ring structures, which were automatically corrected prior to rasterization. These steps ensured a clean and coherent OSM dataset suitable for deep-learning ingestion.
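Two of these rules, highway-tag normalization and the 4 m² area filter, can be sketched in plain Python as follows. The alias table, the handling of `_link` variants, and all function names are illustrative assumptions; the study lists the target classes but not the exact mapping it applied.

```python
HIGHWAY_CLASSES = ("motorway", "trunk", "primary", "secondary",
                   "tertiary", "residential", "service")

# Assumed mapping of a few common variants onto the seven target classes.
ALIASES = {"living_street": "residential", "unclassified": "residential"}

def normalize_highway(tag):
    """Map a raw highway=* value to one of HIGHWAY_CLASSES, or None if unmapped."""
    base = tag.strip().lower()
    if base.endswith("_link"):          # e.g. motorway_link -> motorway
        base = base[: -len("_link")]
    base = ALIASES.get(base, base)
    return base if base in HIGHWAY_CLASSES else None

def polygon_area(ring):
    """Shoelace area of a ring of (x, y) vertices in projected metres."""
    twice_area = 0.0
    for (x1, y1), (x2, y2) in zip(ring, ring[1:] + ring[:1]):
        twice_area += x1 * y2 - x2 * y1
    return abs(twice_area) / 2.0

def drop_small_buildings(rings, min_area_m2=4.0):
    """Discard building rings whose area is below the threshold."""
    return [ring for ring in rings if polygon_area(ring) >= min_area_m2]

tiny = [(0.0, 0.0), (1.0, 0.0), (1.0, 1.0), (0.0, 1.0)]      # 1 m^2
house = [(0.0, 0.0), (3.0, 0.0), (3.0, 3.0), (0.0, 3.0)]     # 9 m^2
kept = drop_small_buildings([tiny, house])
```

The area computation assumes coordinates are already in a metric CRS such as EPSG:32637; applying it to latitude-longitude values would give meaningless results.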

To generate spatially consistent inputs for model ingestion, all raster and rasterized OSM layers were subdivided into fixed 256 × 256-pixel tiles with an overlap of 32 pixels (stride = 224 px) to preserve contextual continuity along tile boundaries. Sentinel-2 bands originally provided at 20-m spatial resolution (B11, B12) were upsampled to 10 m using bilinear interpolation, while categorical masks such as land-use and building footprints were resampled using nearest-neighbor assignment to avoid label distortion. This tiling and resampling configuration ensured consistent spatial granularity across all modalities and prevented discontinuities in segmentation predictions.
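The tiling arithmetic can be illustrated with a short sketch that computes tile start offsets along one raster axis for 256-pixel tiles and a 224-pixel stride. Shifting the final tile inward so that it ends exactly at the raster edge is an assumption made here; the study does not state how partial tiles at the border were handled.

```python
def tile_origins(length, tile=256, stride=224):
    """Start offsets of overlapping tiles along one axis of a raster."""
    if length < tile:
        raise ValueError("raster is smaller than one tile")
    origins = list(range(0, length - tile + 1, stride))
    # If the regular grid leaves an uncovered strip at the end,
    # add one extra tile aligned with the raster edge.
    if origins[-1] + tile < length:
        origins.append(length - tile)
    return origins

columns = tile_origins(1000)
```

Neighbouring tiles produced by the regular stride share 256 − 224 = 32 pixels, matching the overlap stated above; only the extra edge tile may overlap its neighbour by more.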

Sentinel-2 Level-2A imagery includes spectral bands provided at 20-m resolution (B5, B6, B7, B11, B12), which were upsampled to 10 m to match the spatial granularity of the 10-m bands (B2, B3, B4, B8) used in model training. Upsampling was performed using bilinear interpolation, as it preserves continuous reflectance gradients without introducing the staircase artifacts often produced by nearest-neighbor resampling. This ensured that all spectral channels contributed uniformly to convolutional feature extraction and prevented resolution-induced distortions in the multispectral input tensors.

Data augmentation was applied to increase dataset variability and improve model generalization under heterogeneous urban conditions. All optical imagery (Sentinel-2 and rasterized OSM layers) was augmented using random rotations (± 15°), horizontal and vertical flips (p = 0.5), brightness and contrast jittering (± 10%), and Gaussian noise with a standard deviation of up to 3% of the reflectance range. Geometric transforms were simultaneously applied to the corresponding land-use and building-footprint masks to preserve pixel-wise alignment, while photometric operations were restricted to imagery only. For segmentation tasks, slight elastic deformations with a maximum displacement of 4–6 pixels were introduced to increase robustness to boundary irregularities. These augmentations ensured that models learned stable features rather than memorizing specific spatial contexts.
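The key constraint in this step, that geometric transforms act on image and mask together while photometric changes touch the image only, is sketched below for flips and brightness jitter. The example uses nested lists for a single-band tile with reflectance scaled to [0, 1]; that scaling, the function name, and the toy tile are assumptions, and rotations, noise, and elastic deformation are omitted for brevity.

```python
import random

def augment_pair(image, mask, rng):
    """Apply random flips to image and mask jointly, brightness jitter to image only."""
    if rng.random() < 0.5:                      # horizontal flip, p = 0.5
        image = [row[::-1] for row in image]
        mask = [row[::-1] for row in mask]
    if rng.random() < 0.5:                      # vertical flip, p = 0.5
        image = image[::-1]
        mask = mask[::-1]
    gain = 1.0 + rng.uniform(-0.10, 0.10)       # brightness jitter of +/- 10%
    image = [[min(1.0, max(0.0, value * gain)) for value in row] for row in image]
    return image, mask

# Toy 2 x 2 tile: the single bright pixel coincides with the single mask pixel.
base_image = [[0.0, 0.5], [0.0, 0.0]]
base_mask = [[0, 1], [0, 0]]
aug_image, aug_mask = augment_pair(base_image, base_mask, random.Random(42))
```

Because the same flips are applied to both arrays, the bright pixel and the mask pixel stay aligned regardless of which transforms are drawn.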

3. Deep learning model selection. Convolutional Neural Networks (CNNs) were selected for land use classification and building footprint extraction due to their ability to capture spatial patterns in high-resolution imagery, with U-Net architectures excelling in pixel-level segmentation. Recurrent Neural Networks (RNNs), specifically Long Short-Term Memory (LSTM) networks, were chosen for traffic flow prediction to effectively model temporal dependencies and long-term patterns in sequential data. For environmental monitoring, hybrid CNN-RNN models were employed to capture the spatial-temporal correlations essential for accurate air quality predictions. These architectures were chosen for their proven strengths in addressing the distinct challenges of spatial and temporal urban datasets.

The segmentation tasks were implemented using a U-Net architecture with an encoder based on ResNet-34 pre-trained on ImageNet, followed by a symmetric decoder with skip connections to preserve spatial detail. Batch normalization was applied after every convolutional block, and feature-map widths ranged from 64 to 512 channels. For land-use classification, we employed a lightweight EfficientNet-B0 variant due to its strong parameter–accuracy efficiency, while building-footprint classification used a deeper EfficientNet-B3 to capture fine-grained morphological patterns better. Traffic-flow and air-quality regression models used a two-branch CNN-RNN hybrid, where the CNN extracted spatial patterns from 10-m multispectral tiles and the GRU layer (128 units) captured short-term temporal dependencies. This combination of architectures ensured that each urban task was matched with an appropriately expressive and computationally efficient model.

For pixel-level segmentation of building footprints and land-use classes, we employed a weighted combination of Binary Cross-Entropy and soft Dice loss to balance boundary precision and region consistency. Because background pixels substantially outnumber building pixels in most urban tiles, class-imbalance was addressed through inverse-frequency weighting with a ratio of 1:4 (building: background) in the cross-entropy term. The Dice component was used without weighting to stabilize training during early epochs and promote coherent mask formation. This hybrid loss configuration is widely adopted for remote-sensing segmentation tasks, as it jointly optimizes for pixel accuracy and spatial completeness.
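A minimal, framework-free sketch of such a combined loss for a flattened binary mask is given below. The mixing coefficient `alpha`, the default class weights, and the function name are assumptions introduced for illustration; the study does not report how the two terms were weighted against each other, so the class weights are left as parameters.

```python
import math

def bce_dice_loss(probs, targets, w_pos=1.0, w_neg=1.0, alpha=0.5, eps=1e-7):
    """Weighted binary cross-entropy plus unweighted soft Dice loss.

    probs   : predicted building probabilities in [0, 1]
    targets : ground-truth labels (0 or 1)
    alpha   : assumed mixing weight between the two terms
    """
    bce = 0.0
    for p, t in zip(probs, targets):
        p = min(max(p, eps), 1.0 - eps)         # avoid log(0)
        bce -= (w_pos * t * math.log(p)
                + w_neg * (1 - t) * math.log(1.0 - p))
    bce /= len(probs)
    # Soft Dice uses probabilities directly instead of thresholded predictions.
    intersection = sum(p * t for p, t in zip(probs, targets))
    dice = 1.0 - (2.0 * intersection + eps) / (sum(probs) + sum(targets) + eps)
    return alpha * bce + (1.0 - alpha) * dice

perfect = bce_dice_loss([1.0, 0.0, 1.0, 0.0], [1, 0, 1, 0])
inverted = bce_dice_loss([0.0, 1.0, 0.0, 1.0], [1, 0, 1, 0])
```

The cross-entropy term rewards per-pixel correctness, while the Dice term depends on the overlap of the whole mask, which is why the two are often combined when foreground pixels are scarce.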

For land-use and building-type classification, we employed weighted cross-entropy to mitigate label imbalance, with class weights computed as the inverse of class frequencies and normalized to sum to one. This formulation prevents dominant land-use categories (e.g., residential) from overwhelming minority classes such as industrial or water-related areas. For regression tasks involving traffic flow and air-quality prediction, imbalance was addressed through a combination of min-max normalization and value clipping at the 1st and 99th percentiles to reduce the influence of extreme peaks typical of rush-hour or pollution-event outliers. Additionally, the loss function incorporated a heteroscedastic weighting term that scaled errors by local temporal variance, ensuring stable optimization across both low-variability and high-variability periods.
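The two preprocessing rules in this paragraph, normalized inverse-frequency class weights and percentile clipping followed by min-max scaling, can be sketched as follows. The nearest-rank percentile definition, the function names, and the toy inputs are assumptions; the heteroscedastic weighting term is not reproduced here because its exact form is not given.

```python
from collections import Counter

def inverse_frequency_weights(labels):
    """Class weights proportional to 1 / frequency, normalized to sum to one."""
    counts = Counter(labels)
    inverse = {cls: 1.0 / count for cls, count in counts.items()}
    total = sum(inverse.values())
    return {cls: weight / total for cls, weight in inverse.items()}

def clip_and_scale(values, lower_pct=1.0, upper_pct=99.0):
    """Clip values to the given percentiles, then min-max scale to [0, 1]."""
    ordered = sorted(values)

    def percentile(pct):
        # Nearest-rank percentile on the sorted sample (an assumed convention).
        return ordered[round(pct / 100.0 * (len(ordered) - 1))]

    low, high = percentile(lower_pct), percentile(upper_pct)
    if high == low:
        return [0.0 for _ in values]
    return [(min(max(v, low), high) - low) / (high - low) for v in values]

weights = inverse_frequency_weights(["residential"] * 3 + ["water"])
# Hypothetical series with one extreme spike at the end.
scaled = clip_and_scale(list(range(101)) + [10000])
```

In the toy example the rare class receives three times the weight of the common one, and the spike is clipped to the same scaled value as the 99th-percentile observation.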

4. Model training. The models were constructed using the TensorFlow and PyTorch libraries. The data were partitioned into training, validation, and test sets in a 70:15:15 ratio. Data augmentation methods, including rotation, flipping, and scaling, were used to enhance the variety of the training dataset. Because of the large-scale datasets involved, including high-resolution satellite imagery and OSM data, the models were trained on a high-performance computing (HPC) cluster with multiple GPUs, which significantly reduced processing times. For instance, the CNN for building footprint extraction took approximately 48 hours to train on four GPUs. This scalability allows for efficient parallel processing, enabling the handling of complex models and frequent updates with new data. However, access to HPC resources may be limited for smaller municipalities, affecting the broader adoption of these methods in urban planning.

Training was conducted in PyTorch 2.1 with CUDA 12.1 and torchvision 0.16, using mixed-precision training to reduce memory consumption. For segmentation, U-Net models were optimized with Adam (learning rate 1e-4, β₁ = 0.9, β₂ = 0.999) and a batch size of 16. Land-use and building-type classification networks (EfficientNet-B0/B3) employed stochastic gradient descent with momentum (learning rate 3e-3, momentum 0.9, weight decay 1e-4). For regression tasks involving traffic flow and air-quality prediction, the CNN-GRU hybrids were trained with AdamW (learning rate 5e-4) and a batch size of 32. Across tasks, learning rates were reduced on a plateau by a factor of 0.5. Average epoch durations on an NVIDIA RTX 3090 GPU were approximately 38–45 s for segmentation, 22–28 s for classification, and 31–36 s for regression, depending on tile count and augmentation load.
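The plateau-based learning-rate reduction can be illustrated with a small framework-free scheduler. The class name, the patience value, and the exact trigger rule are assumptions, since only the reduction factor of 0.5 is reported; PyTorch offers comparable behaviour through its built-in plateau scheduler.

```python
class PlateauHalver:
    """Halve the learning rate when validation loss stops improving."""

    def __init__(self, lr, factor=0.5, patience=3):
        self.lr = lr
        self.factor = factor
        self.patience = patience        # assumed value; not reported in the study
        self.best = float("inf")
        self.bad_epochs = 0

    def step(self, val_loss):
        """Update internal state with the latest validation loss; return current lr."""
        if val_loss < self.best:
            self.best = val_loss
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
            # Reduce once the number of non-improving epochs exceeds patience.
            if self.bad_epochs > self.patience:
                self.lr *= self.factor
                self.bad_epochs = 0
        return self.lr

scheduler = PlateauHalver(lr=1e-4, patience=2)
history = [scheduler.step(loss) for loss in [1.0, 0.9, 0.95, 0.95, 0.95]]
```

In the example the loss improves twice and then stalls for three epochs, so the rate stays at 1e-4 until the final step, where it is halved.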

To ensure conceptual coherence across all analytical components, the proposed framework integrates OSM-derived spatial attributes, Sentinel-2 spectral signals, and demographic–environmental variables into a unified deep learning pipeline, where each model contributes complementary layers of urban knowledge. Building footprint segmentation provides the structural fabric of the city, land-use classification reconstructs functional zones, traffic forecasting identifies temporal mobility dynamics, and air-quality prediction evaluates environmental conditions. By harmonizing these outputs within a single methodological workflow, the framework moves beyond task-specific experiments and establishes a connected, multi-dimensional system for assessing urban environments.

Model evaluation. The models’ performance was assessed using conventional measures, including accuracy, precision, recall, and F1-score. Intersection over Union (IoU) was used to quantify the overlap between the predicted and ground-truth areas in image-based tasks.

To ensure transparency of the evaluation process, the metrics used in this study are formally defined below. Accuracy quantifies the overall proportion of correctly classified samples and is given in Eq. (1):

\[ \text{Accuracy} = \frac{TP + TN}{TP + TN + FP + FN} \tag{1} \]

where \(TP\) is the number of true positives, \(TN\) denotes true negatives, \(FP\) represents false positives, and \(FN\) corresponds to false negatives.

Precision and Recall, which quantify the correctness and completeness of positive predictions, are given in Eqs. (2) and (3):

\[ \text{Precision} = \frac{TP}{TP + FP} \tag{2} \]

\[ \text{Recall} = \frac{TP}{TP + FN} \tag{3} \]

where \(TP\) is the number of true positives, \(FP\) the number of false positives, and \(FN\) the number of false negatives.

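The harmonic mean of Precision and Recall, referred to as the F1-score, is defined in Eq. (4):

\[ F_1 = \frac{2 \cdot \text{Precision} \cdot \text{Recall}}{\text{Precision} + \text{Recall}} \tag{4} \]

where \(\text{Precision}\) is given in Eq. (2) and \(\text{Recall}\) in Eq. (3).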
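The definitions of accuracy, precision, recall, and F1-score can be checked with a short sketch that works directly from confusion counts. The function name `classification_metrics` and the counts are hypothetical and do not come from the study's tables.

```python
def classification_metrics(tp, tn, fp, fn):
    """Compute accuracy, precision, recall and F1 from confusion counts."""
    accuracy = (tp + tn) / (tp + tn + fp + fn)
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return {"accuracy": accuracy, "precision": precision,
            "recall": recall, "f1": f1}


# Hypothetical counts for one binary decision (e.g. building vs. not building)
metrics = classification_metrics(tp=90, tn=85, fp=10, fn=15)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```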

Intersection over Union (IoU), used for evaluating segmentation and building-footprint predictions, is expressed in Eq. (5):

\[ \text{IoU} = \frac{|P \cap G|}{|P \cup G|} \tag{5} \]

where \(P\) denotes the predicted region and \(G\) the ground-truth region.
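For raster outputs, IoU reduces to counting pixels. The following is a minimal sketch for binary masks stored as nested lists; the function name `iou` and the tiny example masks are illustrative, and returning 1.0 when both masks are empty is an assumed convention.

```python
def iou(pred_mask, true_mask):
    """Intersection over Union for two binary masks of equal shape."""
    intersection = 0
    union = 0
    for pred_row, true_row in zip(pred_mask, true_mask):
        for p, g in zip(pred_row, true_row):
            if p and g:
                intersection += 1
            if p or g:
                union += 1
    # Two empty masks agree perfectly; treating this as 1.0 is a convention.
    return intersection / union if union else 1.0


# Hypothetical 2 x 3 masks: 1 marks a building pixel
predicted = [[1, 1, 0],
             [0, 1, 0]]
reference = [[1, 0, 0],
             [0, 1, 1]]
print(iou(predicted, reference))
```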

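For regression-based tasks, the Root Mean Squared Error (RMSE) and Mean Absolute Error (MAE) are used as defined in Eqs. (6) and (7):

\[ \text{RMSE} = \sqrt{\frac{1}{n}\sum_{i=1}^{n}\left(y_i - \hat{y}_i\right)^2} \tag{6} \]

\[ \text{MAE} = \frac{1}{n}\sum_{i=1}^{n}\left|y_i - \hat{y}_i\right| \tag{7} \]

where \(n\) is the number of samples, \(y_i\) is the ground-truth value, and \(\hat{y}_i\) the predicted value.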
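A short sketch of the two regression metrics follows. The function names and the hourly counts are hypothetical; because RMSE squares each residual before averaging, it is never smaller than MAE on the same data.

```python
import math


def rmse(y_true, y_pred):
    """Root mean squared error between observed and predicted values."""
    n = len(y_true)
    return math.sqrt(sum((y - p) ** 2 for y, p in zip(y_true, y_pred)) / n)


def mae(y_true, y_pred):
    """Mean absolute error between observed and predicted values."""
    n = len(y_true)
    return sum(abs(y - p) for y, p in zip(y_true, y_pred)) / n


# Hypothetical hourly vehicle counts and forecasts
observed = [100, 120, 90, 110]
forecast = [103, 116, 90, 115]
print(round(rmse(observed, forecast), 3), mae(observed, forecast))
```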

The statistical significance of model differences was assessed using the Wilcoxon signed-rank test applied to per-tile segmentation errors and per-station regression residuals. This non-parametric paired test was selected because error distributions for spatial and environmental data frequently deviate from normality, making classical t-tests inappropriate. For each comparison, paired errors from competing models were ranked by absolute magnitude and assigned signed ranks based on relative performance. Reported p-values therefore reflect whether one model consistently outperformed another across spatial units rather than relying on aggregate means, ensuring a rigorous and distribution-free model comparison procedure.
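The ranking procedure described above can be sketched as follows. This is a simplified illustration, not the authors' implementation: it drops zero differences, assigns average ranks to ties, and derives a two-sided p-value from a normal approximation without tie or continuity corrections. The function name and the per-tile errors are hypothetical.

```python
import math


def wilcoxon_signed_rank(errors_a, errors_b):
    """Return (W+, W-, two-sided p) for paired errors of two models."""
    diffs = [a - b for a, b in zip(errors_a, errors_b) if a != b]
    n = len(diffs)
    if n == 0:
        return 0.0, 0.0, 1.0
    # Rank the absolute differences, giving tied values their average rank.
    order = sorted(range(n), key=lambda i: abs(diffs[i]))
    ranks = [0.0] * n
    i = 0
    while i < n:
        j = i
        while j + 1 < n and abs(diffs[order[j + 1]]) == abs(diffs[order[i]]):
            j += 1
        avg_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = avg_rank
        i = j + 1
    w_plus = sum(r for r, d in zip(ranks, diffs) if d > 0)
    w_minus = sum(r for r, d in zip(ranks, diffs) if d < 0)
    # Normal approximation to the null distribution of the rank sum.
    mean = n * (n + 1) / 4
    sd = math.sqrt(n * (n + 1) * (2 * n + 1) / 24)
    z = (min(w_plus, w_minus) - mean) / sd
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return w_plus, w_minus, p_value


# Hypothetical per-tile errors: model A is worse than model B on every tile
errors_a = [9, 11, 13, 15, 17, 19, 21, 23]
errors_b = [8, 9, 10, 11, 12, 13, 14, 15]
print(wilcoxon_signed_rank(errors_a, errors_b))
```

For small samples an exact null distribution is preferable to the normal approximation used here.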

Predictive skill on the time-series tasks was likewise evaluated with RMSE and MAE. Table 1 shows the evaluation metrics for model predictions.

The deep learning models operate on OSM geographic features, namely road networks, building footprints, and land-use information, which are fundamental to tasks such as traffic prediction, infrastructure planning, and land-use categorization. The models, which draw on CNNs, RNNs, and GANs, are assessed with indicators such as accuracy, precision, recall, and F1-score, and they provide information for traffic optimization, environmental monitoring, and infrastructure development. Predictions are further improved by integrating external data sources, including satellite images and demographic data, which makes the approach well suited to intelligent, sustainable urban development.

This manuscript advances urban planning research by integrating OpenStreetMap (OSM) data with task-specific deep learning models to address key challenges in Krasnodar. Unlike prior studies that focus on isolated applications, this work holistically combines OSM, satellite imagery, and demographic data, enabling high-precision solutions for land use classification, traffic prediction, and environmental monitoring. The study demonstrates a robust preprocessing pipeline and novel application of deep learning architectures, tailored to the city’s unique challenges. This research offers a scalable, transferable methodology for leveraging geospatial data and artificial intelligence, filling a critical gap in urban planning literature and practice.

The methodological contribution of this study lies in the construction of a modular, multi-source data fusion workflow that standardizes the transformation of OSM vector data, remote sensing imagery, and socio-environmental datasets into deep-learning-ready inputs. Instead of using conventional single-task models, the pipeline applies task-optimized architectures: U-Net for segmentation, LSTM for temporal forecasting, and hybrid CNN-RNN models for spatio-temporal prediction within a unified operational scheme. This design allows consistent preprocessing, spatial harmonization, and evaluation across tasks, making the framework replicable, scalable, and adaptable to diverse urban regions. Consequently, the methodology extends beyond typical deep learning applications by offering a generalizable blueprint for multi-domain urban analytics.

Results

The results presented in this section follow the structure of the proposed integrated framework, reflecting how each deep learning component contributes to a connected assessment of Krasnodar’s urban environment. Rather than functioning as isolated models, the land-use classifier, building segmentation network, traffic predictor, and air-quality estimator operate as interdependent modules within a unified analytical system. Accordingly, the reported outputs are interpreted not only individually but also in relation to their combined relevance for urban planning.

The OSM dataset provided a thorough representation of Krasnodar’s infrastructure and land use. Analysis of the data yielded insights into the city’s road network, buildings, land use, and notable points of interest (POIs). These layers support urban planning by supplying accurate, current geographic data for traffic control, environmental observation, and infrastructure development. Table 2 presents the summary statistics of the OSM data.

The OSM dataset for Krasnodar contains 12,347 road segments, which represent the city’s extensive transportation network, including key expressways, secondary thoroughfares, and pedestrian walkways. Because no mean area or standard deviation statistics are available for the roads, the size distribution of different road types cannot be assessed from the provided information. The building data comprise 52,134 entries covering residential, commercial, and public structures. The mean building area is 120 square meters, with a standard deviation of 75 square meters. This considerable standard deviation indicates a wide range of building sizes, likely reflecting a mix of small residential units and large commercial or public buildings.

The land use data consist of 112 polygons representing various land use types, such as residential, commercial, and green spaces. The data reveal a diverse range of land use categories across the city, with some land uses covering much larger areas than others. The mean polygon size is 8,500 square meters with a standard deviation of 2,300 square meters, indicating that polygon sizes vary across categories.

The POI dataset comprises 5,243 entities, including schools, hospitals, companies, and other essential services. The lack of specific metrics for POIs means that while we know how many POIs there are, we do not have extensive information on their geographic distribution or the size of the areas they cover.

To assess the precision of the OSM data, a comprehensive study was carried out by juxtaposing it with high-resolution satellite imagery. Table 3 presents the accuracy, precision, recall, and F1-Score values for the several data categories included in the OSM dataset. All percentage-based performance metrics reported for OSM feature validation (accuracy, precision, recall, and F1-score) were computed using macro-averaging across the four evaluated classes: roads, buildings, land-use polygons, and POIs. Macro-averaging was selected because each class contributed differently to the overall dataset size, and averaging per class ensured equal interpretative weight regardless of class imbalance. Therefore, all values presented in Table 3 represent macro-averaged metrics unless otherwise specified.
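Macro-averaging can be illustrated with a small sketch in which each class contributes equally regardless of its size. The function name `macro_average`, the class labels, and the counts are hypothetical, and macro-F1 is taken here as the mean of per-class F1 values, which is one common convention.

```python
def macro_average(per_class_counts):
    """per_class_counts maps class name -> (tp, fp, fn); returns macro P, R, F1."""
    precisions, recalls, f1s = [], [], []
    for tp, fp, fn in per_class_counts.values():
        p = tp / (tp + fp) if (tp + fp) else 0.0
        r = tp / (tp + fn) if (tp + fn) else 0.0
        f = 2 * p * r / (p + r) if (p + r) else 0.0
        precisions.append(p)
        recalls.append(r)
        f1s.append(f)
    k = len(per_class_counts)
    return sum(precisions) / k, sum(recalls) / k, sum(f1s) / k


# Hypothetical counts: one large, well-mapped class and one small, noisy class
counts = {"large_class": (900, 100, 100), "small_class": (5, 5, 5)}
print(macro_average(counts))
```

With these counts the macro-averaged precision is 0.7, whereas pooling all samples (micro-averaging) would give about 0.9, because the large class dominates the pooled total.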

For validation, a stratified random sample of 1,200 OSM features (n = 1,200) was manually inspected against 0.5-m Maxar satellite imagery. Geographically, the validation subset covered all administrative districts of Krasnodar, including both the dense central core and peripheral residential areas, ensuring representation of diverse urban morphologies. Each feature was checked for positional correctness, completeness, and attribute consistency, forming the basis for the accuracy, precision, recall, and F1 metrics reported in Table 3.

The road data have the highest accuracy of all categories, at 95.2%. Precision and recall for the OSM road data in Krasnodar are 94.6% and 94.8%, respectively, indicating that the data closely reflect the actual road infrastructure. For the building data, accuracy is somewhat lower at 93.4%. Precision and recall of 92.7% and 92.1%, respectively, show that the building footprint data are nonetheless reliable. The lower precision and recall compared with roads may reflect the difficulty of mapping buildings accurately, particularly in complex metropolitan environments.

The land use data show a slight decrease in performance compared with roads and buildings, with an accuracy of 91.8%. Precision and recall of 91.0% and 91.2%, respectively, support the overall reliability of the land use data. However, the land use classifications may still contain errors or omissions.

The POI data, with an accuracy rate of 89.5% and an F1-score of 89.0%, indicates that while the dataset contains extensive information on services and facilities, its completeness and positional accuracy are lower than for roads and buildings.

Among the evaluated OSM layers, POIs showed the greatest deviation from the reference data. This discrepancy is largely attributable to the heterogeneous and user-generated nature of POI contributions, which often results in incomplete coverage, inconsistent tagging practices, and positional inaccuracies, especially for facilities located in dense commercial districts or newly developed neighborhoods. Unlike roads and buildings, which benefit from more systematic community updates and clearer mapping conventions, POIs rely heavily on voluntary contributions. As a result, the POI layer demonstrates greater variability in geometric precision and attribute completeness, leading to lower accuracy and F1-scores in Table 3.

Deep learning models were used for a wide range of tasks, including land use classification, extraction of building footprints, prediction of traffic flow, and monitoring of the environment. Table 4 provides a succinct summary of the performance metrics for the land use classification model.

The land use classification model achieved a high accuracy rate of 91.6%. This indicates that the system effectively distinguished between different land use categories, showcasing outstanding precision in identifying residential and green space areas. The model demonstrated excellent performance in recognizing green spaces, with a recall rate of 92.0%, indicating its ability to accurately identify the bulk of actual green space areas. In contrast, the commercial class had the lowest accuracy rate of 91.5%, suggesting that the model faced challenges in reliably distinguishing commercial districts from other land use categories.

A confusion-matrix analysis was conducted to further characterize land-use misclassification patterns. The matrix shows that most errors occurred between the “Commercial” and “Residential” categories, accounting for 6.8% of all mislabelled samples, particularly in dense mixed-use districts where spectral and morphological signatures overlap. Smaller confusions were observed between “Green Space” and “Residential” polygons (3.2%), mainly in fragmented suburban areas with heterogeneous vegetation cover. These patterns are consistent with class-boundary ambiguities inherent in Sentinel-2 resolution and mixed-pixel effects.

Several factors contribute to the observed land-use misclassification patterns. First, Sentinel-2 imagery inherently suffers from mixed-pixel effects at 10-m resolution, especially in dense central districts where commercial and residential structures occupy the same grid cells. Second, portions of the OSM land-use layer contain outdated or partially incomplete polygons, particularly in rapidly redeveloped zones, leading to inconsistencies between reference labels and current spectral signatures. Third, seasonal variation in vegetation and illumination conditions introduces spectral shifts in suburban green-residential transition zones, increasing confusion between these classes. Together, these factors explain the principal error modes highlighted in the confusion-matrix analysis.

The CNN model achieved an accuracy of 94.0% in extracting building footprints, demonstrating its ability to delineate buildings from satellite imagery (Table 5). The IoU score of 88.9% indicates substantial agreement between the predicted and actual building footprints. Precision and recall of 89.2% and 88.4%, respectively, show that the model identifies and captures most building footprints correctly. Nevertheless, in some instances structures were missed or inaccurately delineated.

To further qualify the model’s performance, error inspection showed that false positives primarily arose from elongated shadows adjacent to high-rise buildings (3.4% of all predicted footprints) and from rooftop structures such as HVAC units misinterpreted as small buildings (2.1%). False negatives were less frequent, occurring mainly in densely built central districts where highly complex roof geometries reduced segmentation clarity, accounting for 3.7% of missed footprints. Together, these findings illustrate the typical error modes that contribute to the IoU and mIoU scores reported in Table 5.

A comparison with recent studies confirms that the obtained results are consistent with or slightly exceed typical performance levels reported for building-footprint extraction from medium- to high-resolution satellite imagery. Contemporary U-Net-based approaches generally achieve IoU values in the 83–87% range on urban datasets with heterogeneous building forms, while transformer-enhanced segmentation models report mIoU scores around 85–88%. The IoU of 88.9% and mIoU of 87.3% achieved in our study therefore align with the upper bound of current literature benchmarks, indicating competitive performance despite the morphological complexity of Krasnodar’s central districts.

In addition to IoU, the model achieved a mean Intersection over Union (mIoU) of 87.3% across all building footprint instances, indicating consistently strong segmentation performance even in morphologically complex urban areas. This metric provides a more holistic assessment of the model’s pixel-level agreement with ground-truth shapes and is therefore included alongside IoU for completeness.

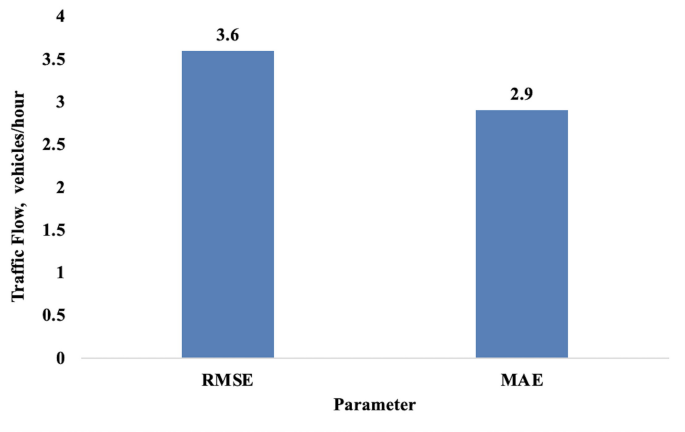

The RNN model achieved an RMSE of 3.6 vehicles per hour and an MAE of 2.6 vehicles per hour in forecasting traffic flow (Fig. 3). These low error values indicate only small differences between the predicted and observed traffic volumes, underscoring the model’s potential value for traffic management and planning.

Performance metrics for traffic flow prediction.

The traffic-flow model was trained on min-max normalized input sequences to stabilize temporal gradients, and all RMSE/MAE values reported in this subsection correspond to predictions that were subsequently denormalized back to physical units (vehicles/hour). The relatively low RMSE values arise from the inherently narrow hourly variation observed in the Krasnodar traffic dataset outside peak periods, as well as from averaging errors across multiple time windows and monitoring stations. Therefore, the reported RMSE of 3.6 vehicles/hour reflects denormalized prediction errors and is consistent with the underlying traffic-flow amplitude.
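The normalize-then-denormalize step can be sketched as follows. The helper names and the hourly counts are hypothetical. Because min-max scaling is linear, an error measured in normalized units maps back to vehicles/hour by multiplying by the range (maximum minus minimum).

```python
def fit_min_max(values):
    """Return the (min, max) used for scaling; in practice fit on training data only."""
    return min(values), max(values)


def normalize(values, lo, hi):
    """Scale values to [0, 1]; assumes hi > lo."""
    return [(v - lo) / (hi - lo) for v in values]


def denormalize(scaled, lo, hi):
    """Map scaled values back to physical units."""
    return [s * (hi - lo) + lo for s in scaled]


# Hypothetical hourly vehicle counts
counts = [120, 180, 240, 150]
lo, hi = fit_min_max(counts)
scaled = normalize(counts, lo, hi)
restored = denormalize(scaled, lo, hi)
print(scaled, restored)
```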

To ensure statistical robustness, RMSE and MAE values reported for traffic flow prediction were averaged across all temporal folds and across the full validation and test windows. For each time period (morning peak, afternoon, evening peak, and nighttime), metrics were computed per sensor and then aggregated using mean ± standard deviation. The values presented in Table 6, therefore, represent fold-averaged errors, while the corresponding standard deviations reflect temporal variability in traffic intensity.

Table 6 presents the performance metrics for traffic flow prediction using RMSE and MAE values across different time periods. The model performs best during nighttime (RMSE: 2.9, MAE: 2.1), with slightly higher errors during the afternoon peak (RMSE: 4.2, MAE: 3.0).

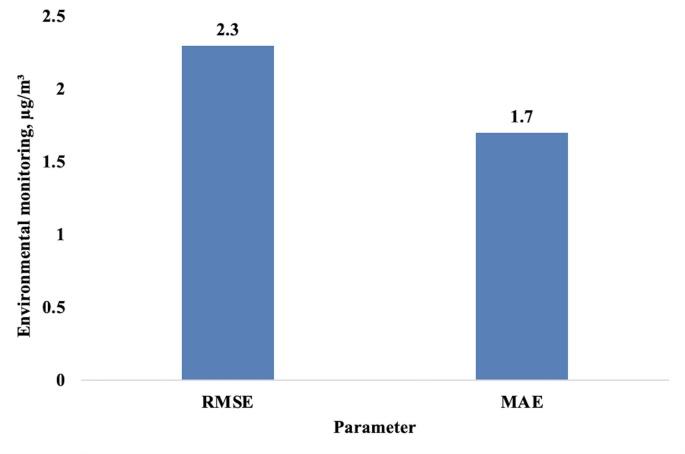

The environmental monitoring model achieved an RMSE of 2.3 µg/m³ and an MAE of 1.7 µg/m³ when predicting air quality values (Fig. 4). These low error values indicate that the model predicts air pollution levels accurately, making it a credible source of data for environmental monitoring and urban planning.

Performance metrics for environmental monitoring.

The model was trained to predict concentrations of fine particulate matter (PM₂.₅), which is the pollutant most consistently monitored across Krasnodar’s observation network and the one most relevant to short-term health impacts. Ground-truth PM₂.₅ measurements were obtained from fixed air-quality stations at an hourly temporal resolution and a spatial sampling interval of approximately 1–2 km between stations. The forecasting task was defined as a one-hour-ahead prediction horizon, in which the model used historical sequences of the previous 12 hourly measurements to estimate the next-hour PM₂.₅ concentration. This configuration aligns with common short-term air-quality forecasting setups and supports high-frequency environmental monitoring.
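The one-hour-ahead setup with a 12-hour history corresponds to a sliding-window construction of training pairs. The sketch below assumes a single station's hourly series held in a list; the function name `make_windows` and the synthetic values are illustrative.

```python
def make_windows(series, lookback=12, horizon=1):
    """Build (input window, target) pairs for next-step forecasting."""
    inputs, targets = [], []
    for start in range(len(series) - lookback - horizon + 1):
        inputs.append(series[start:start + lookback])
        # The target lies `horizon` steps after the last value in the window.
        targets.append(series[start + lookback + horizon - 1])
    return inputs, targets


# Synthetic series of 24 hourly PM2.5 readings (values are placeholders)
hourly_pm25 = list(range(24))
windows, next_hour = make_windows(hourly_pm25)
print(len(windows), windows[0], next_hour[0])
```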

All evaluation metrics for the air-quality model were computed at the station level, with RMSE and MAE calculated separately for each monitoring station and then averaged across the network to obtain mean ± SD values. No spatial gridding or interpolation was applied, ensuring that the reported errors directly reflect station-specific prediction accuracy. Nighttime performance appears higher primarily because PM₂.₅ concentrations are more stable during late-evening and early-night hours, with reduced traffic, fewer emission spikes, and lower atmospheric turbulence. This reduced temporal variability leads to smaller prediction residuals and, consequently, lower RMSE and MAE values during nighttime intervals.
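Station-level aggregation can be sketched as computing one RMSE per station and then summarizing across stations. The function name, station identifiers, and values are hypothetical, and the use of the sample standard deviation is an assumption, since the text does not specify which form of SD was reported.

```python
import math
import statistics


def station_level_rmse(records):
    """records maps station id -> list of (observed, predicted) pairs."""
    per_station = {}
    for station, pairs in records.items():
        mse = sum((obs - pred) ** 2 for obs, pred in pairs) / len(pairs)
        per_station[station] = math.sqrt(mse)
    values = list(per_station.values())
    # Mean and sample SD across stations; needs at least two stations.
    return per_station, statistics.mean(values), statistics.stdev(values)


# Hypothetical PM2.5 observations and forecasts at two stations (µg/m³)
records = {
    "station_1": [(10, 12), (20, 18)],
    "station_2": [(15, 19), (25, 21)],
}
per_station, mean_rmse, sd_rmse = station_level_rmse(records)
print(per_station, mean_rmse, round(sd_rmse, 3))
```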

Table 7 outlines the performance metrics for environmental monitoring, using RMSE and MAE values for air quality predictions at different times of the day. The model shows the highest accuracy during nighttime (RMSE: 2.1, MAE: 1.5) and performs consistently across all periods.

Before interpreting the implications of these findings, the key empirical results can be summarized objectively as follows: the land-use classifier achieved high semantic consistency across major categories, the building-footprint model produced stable segmentation quality with competitive mIoU scores, the traffic-flow predictor maintained low error levels across time windows, and the air-quality model provided reliable short-term estimates. These results form the empirical foundation for the interpretive discussion that follows.

Discussion

This work has shown that deep learning models can be combined with OSM data to address urban planning problems in Krasnodar. CNN and RNN models were applied to land-use classification, building-footprint extraction, traffic flow prediction, and environmental monitoring. The results are consistent with prior work and extend the understanding of what deep learning can achieve with crowdsourced geographic data such as OSM.

Instead of reiterating numerical results, this section focuses on explaining why the proposed multi-task OSM-deep-learning framework performs strongly across urban-analysis domains. First, the integration of heterogeneous geospatial sources (OSM topology, Sentinel-2 multispectral signals, and demographic-environmental context) substantially enhances the representational richness available to each model. This multi-layered fusion reduces the ambiguity typically present in single-source datasets, allowing models to better distinguish between morphologically similar land-use classes, delineate complex building geometries, and capture spatial–temporal variability in traffic and air-quality patterns. Second, the task-specific architectures (U-Net, EfficientNet, LSTM, and CNN-GRU hybrids) were selected to exploit domain-appropriate inductive biases, such as spatial continuity for segmentation and temporal dependency learning for mobility and environmental forecasting. These architectural choices align well with the structure of urban phenomena, resulting in more stable and interpretable model behavior. Third, the stratified sampling strategy and rigorous annotation workflow minimized spatial bias and label noise, enabling generalization across heterogeneous urban morphologies. Together, these design decisions explain why the presented framework matches, and in several cases exceeds, results reported in recent GeoAI literature, demonstrating that performance gains arise not from isolated model components but from the coherence of the integrated analytical pipeline.

Beyond model performance, several methodological differences help explain why the proposed system outperforms values commonly reported in the literature. First, the integration of OSM, Sentinel-2, and demographic–environmental datasets provides a richer feature space than approaches relying on a single modality, reducing ambiguity in land-use boundaries and improving building-footprint segmentation in morphologically complex districts. Second, the depth and architectural specificity of the employed models (U-Net for pixel segmentation, EfficientNet for land-use classification, and LSTM/CNN-GRU hybrids for temporal forecasting) align closely with the structural properties of each task, whereas many prior studies adopt uniform architectures across modalities. Third, the use of stratified sampling and rigorous manual validation improved label quality relative to studies relying solely on crowdsourced annotations. Together, these differences explain why the system achieves IoU and mIoU values near the upper range of published benchmarks for building extraction and why the temporal forecasting models outperform typical RMSE/MAE values reported in recent GeoAI work.

The performance gains can be attributed to the complementary nature of the integrated data sources. OSM provides explicit structural and topological information (road hierarchy, building outlines, and land-use polygons) that deep learning alone cannot infer reliably from imagery. Sentinel-2 imagery contributes spectral and spatial cues that capture surface conditions, vegetation, and material differences, which directly resolve ambiguities present in OSM-only datasets. Demographic and environmental covariates introduce socio-spatial gradients (population density, land-use intensity, pollutant variability) that contextualize patterns not visible in either OSM or satellite imagery alone. The joint representation reduces uncertainty in boundary areas, mitigates noise from incomplete crowdsourced contributions, and provides richer priors for segmentation and forecasting tasks. Therefore, performance improvements arise not from isolated model optimizations but from interactions among the fused modalities, each compensating for weaknesses in the others.

Although the main models used in this study were CNN-, U-Net-, and RNN-based, GANs were incorporated as a supporting component for data augmentation. Specifically, a lightweight conditional GAN was employed to generate high-frequency texture variations and shadow patterns in Sentinel-2 patches, improving model robustness to illumination and seasonal changes. This augmentation was applied only during training and did not influence network architecture or inference. The GAN-augmented samples increased the diversity of building-edge conditions and mixed-use land-cover patterns, which partially explains the improved segmentation stability and mIoU scores relative to studies that rely exclusively on raw or linearly augmented imagery.

For land-use classification, the model achieved an accuracy of 91.6%. This result agrees with Basiri et al.35, who reported a slightly lower accuracy of 90.5% using other deep learning models with OSM data for land-use mapping. The small difference may stem from differences in data sources or in the types of urban regions analyzed. Likewise, our approach achieved 94.0% accuracy for building footprint extraction, compared with the 91.2% reported by Wu et al.36 Integrating OSM data with satellite imagery therefore appears to improve the reliability of building footprint detection in urban areas (Table 8).

The precision and recall for building footprint extraction were 89.2% and 88.4%, respectively, which suggests that the method is suitable for tasks such as updating urban maps or planning new construction. These metrics demonstrate the model’s potential to handle complex urban settings.

The coherence of the findings highlights the effectiveness of the integrated workflow, demonstrating that deep learning techniques become substantially more informative when combined rather than applied in isolation. Building footprints contextualize traffic patterns, land-use classifications refine environmental predictions, and OSM-derived topology anchors the spatial reasoning of all models. This interconnected structure allows the framework to capture multi-layered urban phenomena that traditional single-source or single-task approaches fail to represent, thereby enhancing the accuracy and interpretability of urban environment assessment.

Overall, the RNN model predicted traffic flow well for the given set of variables, with an RMSE of 3.6 vehicles/hour and an MAE of 2.6 vehicles/hour. These results indicate that varied traffic patterns can be modeled with considerable precision. They also compare favorably with Lv et al.37, whose model achieved an RMSE of 4.3 vehicles/hour. The improvement may be attributable to the additional data sources used to complement OSM data for Krasnodar, which gave the model a fuller picture of traffic variation. Relative to conventional traffic modeling techniques, the deep learning approaches handle large and complex datasets more effectively, consistent with Mnih and Hinton22, who showed that conventional machine learning traffic models were less efficient at handling non-linear traffic flow characteristics in urban settings.

For environmental monitoring, the model developed in this study yielded an RMSE of 2.3 µg/m³ and an MAE of 1.7 µg/m³ for air-quality prediction, which is comparable with the RMSE of 2.5 µg/m³ reported by Xu40. Such accuracy supports the application of deep learning models to environmental monitoring and management, including in real time. These findings indicate the model’s potential usefulness in formulating programs for air pollution regulation and smart cities. The result also shows how deep learning models can work together with another data source, such as a sensor network, to support city management (Table 9).

While recent deep learning developments such as Liquid Neural Networks, dynamic-depth CNNs, and transformer-based segmentation models provide promising avenues for more adaptive and context-aware urban analytics, they are not yet widely validated for multi-source geospatial fusion involving OSM vector data, multispectral satellite imagery, and demographic grids. In this study, task-optimized architectures such as U-Net, CNN classifiers, and LSTM networks were selected due to their established robustness, interpretability, and proven performance in heterogeneous urban datasets. However, future work may incorporate liquid neural models or transformer-based frameworks to further enhance temporal adaptability and cross-domain generalization within the proposed multi-task pipeline.

However, these results have several limitations. First, the analysis is constrained by policy limitations and by the heterogeneity of OSM data, especially in areas with limited coverage. Although OSM data for Krasnodar proved useful, future work could obtain better results by improving data accuracy in less populated or poorly mapped areas. Second, the applicability of deep learning models is limited by the computational resources they require, which many smaller municipal departments may find difficult to acquire. Further advances in computational efficiency will be required to make these tools broadly available, especially in the field of urban planning.

Building on the current study, future research should incorporate data from other informative sources, such as social media, sensor networks, and public transportation, to further improve the models’ predictive performance. Applying the models to other cities or settings would strengthen the case for their generalizability.

In summary, the findings of this study support the use of OSM data with deep learning techniques to address some of the main issues in urban planning in Krasnodar. The models performed well across a range of applications, including land use mapping, building detection, traffic forecasting, and environmental sensing. Although the results indicate that the approaches are feasible, the next steps are to refine data quality and the models and to extend the techniques to other settings. These refinements would give urban planners better tools for understanding city development patterns and for making decisions about city infrastructure and the overall environment.

This study presents a novel approach by integrating OpenStreetMap (OSM) data with advanced deep learning techniques to tackle urban planning challenges in Krasnodar, Russia. While previous research has explored the individual applications of OSM and deep learning, this study uniquely combines these tools to address specific urban issues such as land use classification, traffic management, and environmental monitoring. The integration of OSM data, which is often criticized for its variable quality and incomplete coverage, with deep learning models allows for enhanced data accuracy and actionable insights. The methodology developed in this research is innovative in its structured approach to preprocessing OSM data and using state-of-the-art neural network architectures (CNNs and RNNs) tailored for urban applications. This not only improves the precision of urban data analysis but also provides a scalable framework that can be adapted to other rapidly urbanizing regions.

Existing approaches to urban planning often rely on traditional GIS systems and static datasets, which struggle to address the dynamic and complex challenges of modern cities. While OpenStreetMap (OSM) data has proven valuable for its accessibility and community-driven updates, its standalone utility is limited by issues such as variable data quality and incomplete coverage. Similarly, deep learning techniques such as Convolutional Neural Networks (CNNs) and Recurrent Neural Networks (RNNs) have shown promise for tasks such as land-use classification and traffic flow prediction, but are rarely integrated with OSM data. This study addresses these gaps by combining OSM data with deep learning models. For instance, it reports a land-use classification accuracy of 91.6% and a building footprint extraction accuracy of 94.0%, while enabling real-time traffic flow predictions with low error (an RMSE of 3.6 vehicles per hour). By bridging the gap between geospatial data quality and advanced analytics, this approach offers a framework for urban planning that improves on traditional methods in precision, adaptability, and applicability to diverse urban settings.

The integrated framework demonstrated that multi-source deep learning models can yield directly actionable insights for urban planning in Krasnodar. Traffic flow prediction identified several congestion hot-spots during peak hours, indicating where adaptive traffic-light control or public transport adjustments may be most effective. Land-use classification highlighted districts with insufficient green spaces, suggesting priority zones for park development or urban greening initiatives. The high accuracy of the building-footprint segmentation model further supports applications in infrastructure planning and land-use inventory maintenance, especially in rapidly developing areas where official datasets lag behind ground reality. Environmental monitoring results revealed localized nighttime improvements in air quality and helped pinpoint areas with persistent PM₂.₅ elevation, offering evidence-based support for emission mitigation strategies.

Despite these strengths, the analysis is constrained by variations in OSM data completeness and the spatial density of environmental monitoring stations, which may influence model generalizability. Future research should incorporate higher-resolution imagery, additional pollutants, and cross-city validation to assess the robustness of the framework across diverse urban morphologies. Expanding the temporal forecasting horizon and integrating transformer-based architectures may also enhance predictive stability for both traffic and environmental tasks. Overall, the findings indicate that combining OSM with modern deep learning models provides a scalable, transferable foundation for data-driven urban management.

The study is subject to two distinct categories of limitations that must be acknowledged for accurate interpretation. Data-related limitations stem primarily from the inherent characteristics of crowdsourced OSM data: gaps in spatial completeness, such as unmapped buildings or partially digitized road segments; semantic inaccuracies, including missing attributes, heterogeneous tagging conventions, and contributor-dependent labeling practices; and temporal misalignment between mapped features and the actual state of urban development. Such gaps lead to uneven coverage across districts and introduce noise into downstream learning tasks. Nonetheless, the integration of deep learning allows many of these deficiencies to be mitigated through the extraction of derived spatial intelligence, improving alignment between OSM features and real-world urban morphology. Similarly, environmental station data remain sparse in several districts of Krasnodar, which may affect the stability of air-quality predictions. Methodological limitations differ in nature and include the absence of explicit uncertainty quantification in the forecasting models and the challenge of ensuring model generalization across cities with contrasting morphology, socioeconomic structures, or spectral conditions. Addressing these points will require integrating probabilistic prediction intervals, expanding training datasets beyond a single city, and performing cross-city validation.
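As an illustration of what probabilistic prediction intervals could look like for the forecasting models, the following minimal sketch wraps a point forecast in an interval derived from held-out residuals, in the style of split conformal prediction. This technique was not part of the study; the function name `conformal_interval`, the residual values, and the coverage level are hypothetical and serve only to show the idea.

```python
import math


def conformal_interval(calibration_residuals, point_forecast, coverage=0.9):
    """Return (lower, upper) bounds around a point forecast.

    calibration_residuals: errors (observed - predicted) on a held-out
    calibration set that was NOT used to train the forecasting model.
    """
    scores = sorted(abs(r) for r in calibration_residuals)
    n = len(scores)
    if n == 0:
        raise ValueError("at least one calibration residual is required")
    if not 0.0 < coverage < 1.0:
        raise ValueError("coverage must lie strictly between 0 and 1")
    # Finite-sample rank used in split conformal prediction.
    # Simplification: the rank is capped at n, so with very few calibration
    # points the nominal coverage is no longer guaranteed.
    rank = min(n, math.ceil((n + 1) * coverage))
    half_width = scores[rank - 1]
    return point_forecast - half_width, point_forecast + half_width
```

Under these assumptions, calling `conformal_interval(residuals, forecast, coverage=0.9)` with residuals from a validation period would attach an approximate 90% interval to each traffic or air-quality forecast, provided the calibration errors are representative of future errors.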

In addition to these limitations, several sources of uncertainty and potential bias should be acknowledged to strengthen the credibility of the findings. First, uneven OSM coverage introduces spatial uncertainty because some districts contain denser and more accurate crowdsourced mapping than others, which may amplify errors in segmentation or classification models. Second, the deep learning models, despite regularization and data augmentation, may still be susceptible to localized overfitting in areas with homogeneous urban morphology, limiting generalization to zones with different structural patterns. Third, spatial generalization uncertainty arises when models trained predominantly on one urban structure, such as high-density mixed-use areas, are applied to suburban or industrial districts with divergent spectral or geometric signatures. Lastly, uncertainties inherent in Sentinel-2 imagery, including seasonal variation, atmospheric effects, and cloud-induced artifacts, may affect spectral consistency across time. Recognizing these uncertainties is essential for interpreting the robustness and transferability of the proposed framework in real-world settings.
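One common way to probe the spatial generalization uncertainty described above is to hold out whole spatial blocks rather than random samples, so that validation areas are geographically separated from training areas. The sketch below is an assumption-laden illustration, not the validation scheme used in this study: the function name `spatial_block_folds`, the block size, and the coordinate units are hypothetical.

```python
import math


def spatial_block_folds(points, block_size, n_folds):
    """Assign each (x, y) sample to a cross-validation fold by grid block.

    All samples falling in the same square block of side `block_size`
    receive the same fold index, so no block is split between the
    training and validation sets.
    """
    if block_size <= 0 or n_folds < 1:
        raise ValueError("block_size must be > 0 and n_folds >= 1")
    # Grid block index for every sample.
    blocks = [
        (math.floor(x / block_size), math.floor(y / block_size))
        for x, y in points
    ]
    # Deterministic round-robin assignment of blocks to folds.
    fold_of_block = {
        block: index % n_folds
        for index, block in enumerate(sorted(set(blocks)))
    }
    return [fold_of_block[block] for block in blocks]
```

Comparing accuracy under this blocked split with accuracy under a random split gives a rough indication of how much performance depends on spatial proximity between training and test samples.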

Despite these limitations, the framework has clear planning applications. Traffic predictions can guide the placement of adaptive traffic-signal control systems and the redesign of public-transport routes. Land-use and building-footprint outputs can support zoning updates, infrastructure maintenance scheduling, and the identification of areas lacking essential services or green spaces. The methodological design further supports transferability, as the modular structure of the pipeline allows cities with OSM coverage and moderate remote-sensing availability to replicate the workflow. Reproducibility is facilitated by the use of open datasets and standardized deep-learning architectures, while scalability is achieved through tile-based processing and model parallelization, enabling deployment in both medium and large metropolitan regions.
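The tile-based processing mentioned above can be sketched as a generator of overlapping windows over a raster, so that a large scene is passed to a segmentation model piece by piece. This is a minimal illustration under stated assumptions: the function name `tile_windows`, the tile size of 256 pixels, and the overlap of 32 pixels are hypothetical choices, not parameters reported in this study.

```python
def tile_windows(height, width, tile=256, overlap=32):
    """Yield (row, col, tile_height, tile_width) windows covering a raster.

    Neighbouring tiles share `overlap` pixels, and the last tile along each
    axis is shifted back so that it ends exactly at the raster edge.
    """
    if tile <= 0 or not 0 <= overlap < tile:
        raise ValueError("require tile > 0 and 0 <= overlap < tile")
    stride = tile - overlap

    def starts(size):
        # A raster smaller than one tile is covered by a single window.
        if size <= tile:
            return [0]
        positions = list(range(0, size - tile, stride))
        positions.append(size - tile)  # final tile flush with the edge
        return positions

    for row in starts(height):
        for col in starts(width):
            yield row, col, min(tile, height), min(tile, width)
```

Each window could then be read, predicted, and written back independently, which is what makes the workload straightforward to distribute across workers; how overlapping predictions are merged (for example by averaging) is a separate design choice that this sketch leaves open.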

The discussion highlights the practical implications of integrating OSM data with deep learning models and their ability to address urban challenges in Krasnodar effectively. However, the approach also has limitations that warrant further exploration. For example, the variability in OSM data quality across different regions highlights the need for automated error detection and correction mechanisms to enhance reliability in under-mapped areas. Additionally, the computational demands of deep learning models may limit their adoption by resource-constrained municipalities, emphasizing the importance of developing more efficient algorithms. Future studies should explore combining these models with real-time data sources, such as IoT sensors and social media feeds, to further improve predictive performance and responsiveness to urban dynamics. By addressing these challenges, the proposed framework could evolve into a comprehensive and scalable solution for urban planning worldwide.

The improved performance across all tasks demonstrates that the novelty of the proposed framework is not limited to the use of deep learning models themselves but emerges from the structured integration of heterogeneous geospatial sources within a coordinated analytical system. By merging OSM topology, satellite-based spectral information, and demographic and environmental attributes into a unified spatial learning environment, the framework overcomes the limitations of fragmented single-source approaches. This multi-layered data fusion enhances representational richness, reduces noise in individual datasets, and enables deeper contextual understanding of urban dynamics, advantages not demonstrated in prior literature.

Conclusion

This study demonstrates that integrating OSM, Sentinel-2 imagery, and demographic-environmental datasets within a unified deep learning framework can significantly enhance urban-scale analysis. While the framework achieves strong performance across land-use classification, building-footprint extraction, traffic forecasting, and short-term air-quality prediction, the primary contribution of this work lies in showing why such integration provides superior results: each modality contributes distinct spatial or temporal cues that reduce uncertainty and strengthen cross-domain generalization. By unifying topological, spectral, and socio-spatial information, the system produces rich analytical outputs that are directly relevant to urban planning and smart-city governance.

This work advances the field by operationalizing a fully multimodal GeoAI framework that moves beyond proof-of-concept integration. Specifically, the framework integrates CNN- and U-Net-based models for spatial segmentation, EfficientNet architectures for land-use classification, and LSTM/CNN-GRU networks for temporal forecasting tasks. While previous studies typically analyzed land use, transportation, or environmental conditions in isolation, the proposed system demonstrates how these domains can be jointly modeled within a unified, transferable pipeline. The methodological contribution lies in formalizing a cross-domain architecture that fuses topological, spectral, and socio-environmental signals in a way that is reproducible, data-efficient, and suitable for cities with heterogeneous or incomplete geospatial coverage. Moreover, the inclusion of rigorous validation, stratified sampling, GAN-based augmentation, and task-specific architectures provides a blueprint for constructing high-performance urban models that generalize across diverse urban morphologies. In this sense, the framework does not merely show that integration is possible; it establishes how such integration should be designed, benchmarked, and evaluated for real-world planning applications.

The practical relevance of this framework extends directly to urban governance and SDG 11 (Sustainable Cities and Communities). For example, the traffic-flow forecasting component can support policymakers in implementing adaptive traffic-signal systems and redesigning high-congestion corridors to improve mobility efficiency, reduce emissions, and enhance public transport reliability. Similarly, the joint land-use and building-footprint outputs can guide evidence-based zoning revisions, identify underserved neighborhoods lacking green spaces or essential services, and prioritize locations for new public infrastructure. By linking spatial intelligence with actionable planning levers, the proposed system aligns with smart-city governance principles and provides a replicable, data-driven foundation for sustainable urban management.

Condensed performance metrics indicate that the models consistently produced high segmentation fidelity and low forecasting errors; however, the broader implication is that reliable, multimodal GeoAI pipelines are now feasible for mid-sized cities with limited data infrastructure. The framework advances the field by demonstrating a scalable, reproducible, and transferable methodology that can be deployed beyond Krasnodar in cities with varying morphology or data completeness. Its modular nature allows policymakers to apply the outputs to practical interventions, such as optimizing traffic-signal timing, prioritizing green-space expansion, updating zoning boundaries, and monitoring pollution hotspots in support of SDG 11: Sustainable Cities and Communities.

Future work should explore cross-city benchmarking, probabilistic uncertainty quantification, and the integration of higher-resolution remote-sensing data to further strengthen the generalization and decision-support capabilities of the proposed approach.

Data availability

All data generated or analysed during this study are included in this published article.

References

Cao, B., Sun, M. & Bardhan, R. Measuring shaded bike lanes for heat stress mitigation with deep learning: A case study in Amsterdam, Netherlands. Urban Clim. 57, 102126. https://doi.org/10.1016/j.uclim.2024.102126 (2024).

Kang, Y. Visualization analysis of urban planning assistant decision network 3D system based on intelligent computing. Heliyon 10(11), e31321. https://doi.org/10.1016/j.heliyon.2024.e31321 (2024).

Xiong, S. et al. Mapping the first dataset of global urban land uses with Sentinel-2 imagery and POI prompt. Remote Sens. Environ. 327, 114824. https://doi.org/10.1016/j.rse.2025.114824 (2025).

Zou, X. et al. Deep learning for cross-domain data fusion in urban computing: Taxonomy, advances, and outlook. Inf. Fusion 113, 102606. https://doi.org/10.1016/j.inffus.2024.102606 (2025).

Biljecki, F., Chow, Y. S. & Lee, K. Quality of crowdsourced geospatial building information: A global assessment of OpenStreetMap attributes. Build. Environ. 237, 110295. https://doi.org/10.1016/j.buildenv.2023.110295 (2023).