Abstract

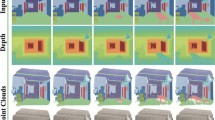

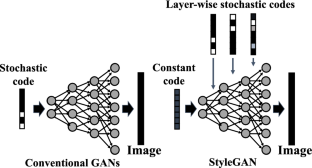

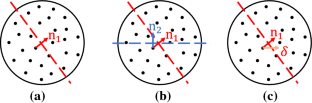

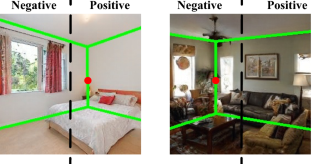

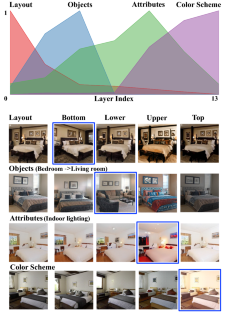

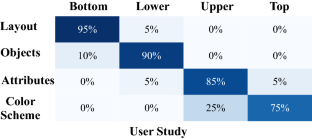

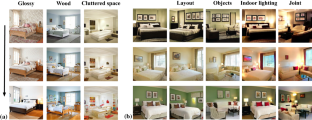

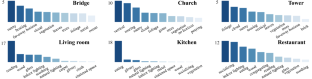

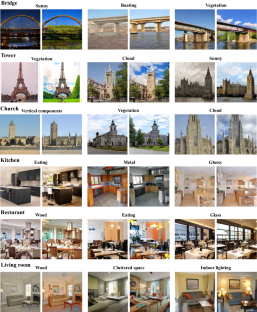

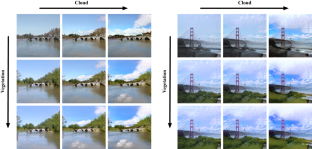

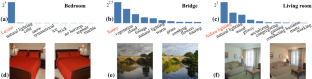

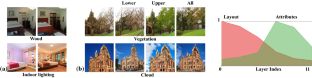

Despite the great success of Generative Adversarial Networks (GANs) in synthesizing images, it remains poorly understood how photo-realistic images are generated from the layer-wise stochastic latent codes introduced in recent GANs. In this work, we show that a highly structured semantic hierarchy emerges in the deep generative representations of state-of-the-art GANs, such as StyleGAN and BigGAN, trained for scene synthesis. By probing the per-layer representation with a broad set of semantics at different abstraction levels, we quantify the causality between the layer-wise activations and the semantics occurring in the output image. This quantification identifies human-understandable variation factors that can be further used to steer the generation process, for example by changing the lighting condition or varying the viewpoint of the scene. Extensive qualitative and quantitative results suggest that the generative representations learned by GANs with layer-wise latent codes are specialized to synthesize various concepts in a hierarchical manner: the early layers tend to determine the spatial layout, the middle layers control the categorical objects, and the later layers render the scene attributes as well as the color scheme. Identifying such a set of steerable variation factors facilitates high-fidelity scene editing based on well-learned GAN models without any retraining (code and demo video are available at https://genforce.github.io/higan).
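The idea of steering generation through layer-wise latent codes can be illustrated with a minimal sketch. It is not the authors' implementation: the function name `manipulate_layerwise`, the number of layers, the code dimension, the random direction, and the choice of which layers to edit are all assumptions made for illustration. The sketch only shows the general mechanism of shifting the latent codes of selected layers along a direction while leaving the other layers untouched.

```python
import numpy as np


def manipulate_layerwise(codes, direction, layers, step):
    """Shift per-layer latent codes along a direction at chosen layers only.

    codes:     array of shape (num_layers, dim), one latent code per layer.
    direction: array of shape (dim,), a hypothetical semantic direction.
    layers:    iterable of distinct layer indices to edit.
    step:      scalar editing strength.
    """
    codes = np.asarray(codes, dtype=float)
    unit = np.asarray(direction, dtype=float)
    unit = unit / np.linalg.norm(unit)  # normalize so `step` is the shift length

    edited = codes.copy()               # leave the input codes unchanged
    edited[list(layers)] += step * unit # edit only the selected layers
    return edited


# Illustrative setup (all values are assumptions, not taken from the paper).
rng = np.random.default_rng(0)
num_layers, dim = 14, 512
codes = np.tile(rng.standard_normal(dim), (num_layers, 1))  # same code at every layer
direction = rng.standard_normal(dim)                        # stand-in for a learned direction

# Edit a hypothetical block of "middle" layers and keep the rest fixed.
edited = manipulate_layerwise(codes, direction, layers=range(6, 12), step=2.0)
```

In an actual pipeline the edited codes would be fed to a pretrained generator, and the direction would come from probing the representation with a semantic classifier rather than from random noise.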

Acknowledgements

This work is supported by the Early Career Scheme (ECS) through the Research Grants Council (RGC) of Hong Kong under Grant No. 24206219 and by the CUHK FoE RSFS Grant (No. 3133233).

Additional information

Communicated by Jifeng Dai.

About this article

Cite this article

Yang, C., Shen, Y. & Zhou, B. Semantic Hierarchy Emerges in Deep Generative Representations for Scene Synthesis. Int J Comput Vis 129, 1451–1466 (2021). https://doi.org/10.1007/s11263-020-01429-5

DOI: https://doi.org/10.1007/s11263-020-01429-5