Abstract

Web crawlers are an indispensable tool for collecting research data. However, servers may block them for various reasons, which can reduce their coverage. In this early-stage work, we investigate server-side blocks encountered by Common Crawl (CC). We analyze page contents to cover a broader range of refusals than previous work. We construct fine-grained regular expressions to identify refusal pages with precision, finding that at least 1.68% of sites in a CC snapshot exhibit a form of explicit refusal. Significant contributors include large hosters. Our analysis categorizes the forms of refusal messages, from straight blocks to challenges and rate-limiting responses. We can extract the reasons for nearly half of the refusals we identify. We find inconsistent and even incorrect use of HTTP status codes to indicate refusals. Examining the temporal dynamics of refusals, we find that most blocks resolve within one hour, but also that 80% of refusing domains block every request by CC. Our results show that website blocks deserve more attention, as they have a relevant impact on crawling projects. We also conclude that a standardized way to signal refusals would benefit both site operators and web crawlers.

References

Pepyaka webserver. https://webtechsurvey.com/technology/pepyaka. Accessed May 2024

Ablove, A., et al.: Digital discrimination of users in sanctioned states: the case of the Cuba embargo. In: 33rd USENIX Security Symposium (USENIX Security 2024), Philadelphia, PA, pp. 3909–3926. USENIX Association (2024). https://www.usenix.org/conference/usenixsecurity24/presentation/ablove

Afroz, S., Tschantz, M.C., Sajid, S., Qazi, S.A., Javed, M., Paxson, V.: Exploring server-side blocking of regions. arXiv abs/1805.11606 (2018). https://api.semanticscholar.org/CorpusID:44131334

Ahmad, S.S., Dar, M.D., Zaffar, M.F., Vallina-Rodriguez, N., Nithyanand, R.: Apophanies or epiphanies? How crawlers impact our understanding of the web. In: Proceedings of the Web Conference 2020 (WWW 2020), pp. 271–280 (2020)

Asghari, H.: pyasn. https://github.com/hadiasghari/pyasn

Center for Applied Internet Data Analysis (CAIDA): AS Organizations Dataset (2024). https://catalog.caida.org/dataset/as_organizations. Accessed May 2024

Common Crawl: November/December 2023 crawl archive now available. https://www.commoncrawl.org/blog/november-december-2023-crawl-archive-now-available. Accessed May 2024

Darer, A., Farnan, O., Wright, J.: Automated discovery of internet censorship by web crawling. In: Proceedings of the 10th ACM Conference on Web Science (WebSci 2018), pp. 195–204 (2018)

Fielding, R.T., Nottingham, M.: Additional HTTP Status Codes. RFC 6585 (2012). https://doi.org/10.17487/RFC6585. https://www.rfc-editor.org/info/rfc6585

Fielding, R.T., Nottingham, M., Reschke, J.: HTTP Semantics. RFC 9110 (2022). https://doi.org/10.17487/RFC9110. https://www.rfc-editor.org/info/rfc9110

Holz, R., Braun, L., Kammenhuber, N., Carle, G.: The SSL landscape - a thorough analysis of the X.509 PKI using active and passive measurements. In: Proceedings of the ACM/USENIX 11th Annual Internet Measurement Conference (IMC), Berlin, Germany (2011)

http.dev: HTTP status codes. https://http.dev/status

Institute, R.: How many news websites block AI crawlers. Reuters Institute for the Study of Journalism (2023). https://reutersinstitute.politics.ox.ac.uk/how-many-news-websites-block-ai-crawlers#:~:text=Examining. Accessed May 2024

Invernizzi, L., Thomas, K., Kapravelos, A., Comanescu, O., Picod, J.M., Bursztein, E.: Cloak of visibility: detecting when machines browse a different web. In: 2016 IEEE Symposium on Security and Privacy (SP), pp. 743–758 (2016)

Koster, M., Illyes, G., Zeller, H., Sassman, L.: Robots Exclusion Protocol. RFC 9309 (2022). https://doi.org/10.17487/RFC9309. https://www.rfc-editor.org/info/rfc9309

Leonard, D., Loguinov, D.: Demystifying service discovery: implementing an internet-wide scanner. In: Proceedings of the ACM SIGCOMM Conference on Internet Measurement (IMC 2010), pp. 109–122 (2010)

McDonald, A., et al.: 403 forbidden: a global view of CDN geoblocking. In: Proceedings of the Internet Measurement Conference (IMC 2018), pp. 218–230 (2018)

Nagel, S.: Common crawl: data collection and use cases for NLP (2023). http://nlpl.eu/skeikampen23/nagel.230206.pdf. Accessed May 2024

Niaki, A.A., et al.: ICLab: a global, longitudinal internet censorship measurement platform. In: 2020 IEEE Symposium on Security and Privacy (SP), pp. 135–151 (2020)

Tschantz, M.C., Afroz, S., Sajid, S., Qazi, S.A., Javed, M., Paxson, V.: A bestiary of blocking: the motivations and modes behind website unavailability. In: 8th USENIX Workshop on Free and Open Communications on the Internet (FOCI 2018) (2018)

Vastel, A., Rudametkin, W., Rouvoy, R., Blanc, X.: FP-crawlers: studying the resilience of browser fingerprinting to block crawlers. In: NDSS Workshop on Measurements, Attacks, and Defenses for the Web, MADWeb 2020 (2020)

Wan, G., et al.: On the origin of scanning: the impact of location on Internet-wide scans. In: Proceedings of the ACM Internet Measurement Conference (IMC 2020), pp. 662–679 (2020)

Zeber, D., et al.: The representativeness of automated web crawls as a surrogate for human browsing. In: Proceedings of the Web Conference 2020 (WWW 2020), pp. 167–178 (2020)

Acknowledgments

This work was partially supported by the research project ‘CATRIN’ (NWA.1215.18.003) as part of the Dutch Research Council’s (NWO) National Research Agenda (NWA).

Ethics declarations

Ethics

This work raises no ethical concerns. The CC dataset was ethically created [18]. We ran fewer than \(70 \times 10^{3}\) DNS queries (for about \(12 \times 10^{3}\) FQDN samples) to identify their NS and PTR records. We issued the queries sequentially and spread them over time to avoid undue load on the network or on nameservers.

A Additional Figures and Tables

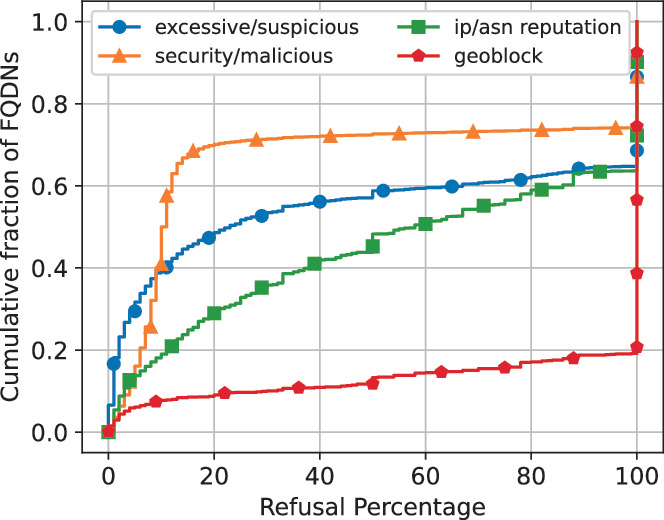

Refusal rate per FQDN by reason.

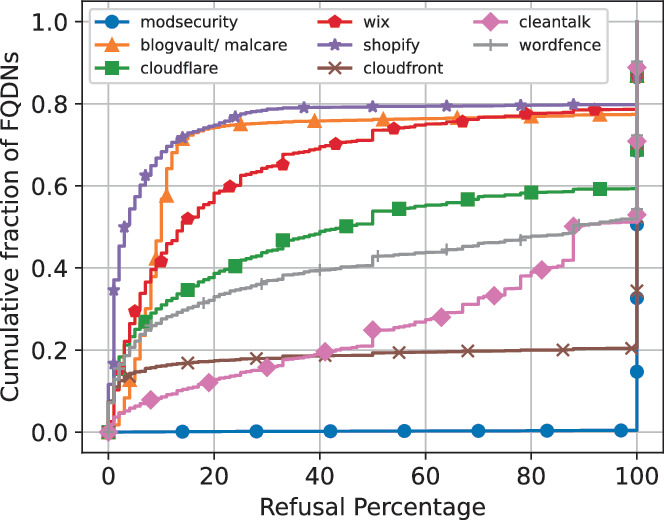

Refusal rate per FQDN by tag.

Copyright information

© 2025 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Ansar, M., Sperotto, A., Holz, R. (2025). Web Crawl Refusals: Insights From Common Crawl. In: Testart, C., van Rijswijk-Deij, R., Stiller, B. (eds) Passive and Active Measurement. PAM 2025. Lecture Notes in Computer Science, vol 15567. Springer, Cham. https://doi.org/10.1007/978-3-031-85960-1_9

DOI: https://doi.org/10.1007/978-3-031-85960-1_9

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-85959-5

Online ISBN: 978-3-031-85960-1

eBook Packages: Computer Science, Computer Science (R0), Springer Nature Proceedings Computer Science