Here I share some thoughts around the history of "responsive" MediaWiki skins and how we might want to think about it for Vector.

The buzzword "responsive" is thrown around a lot in Wikimedia-land, but essentially what we are talking about is whether to include a single tag in the page. Adding a meta tag with the name viewport tells the browser how to adapt the page to a mobile device.

<meta name="viewport" content="width=device-width, initial-scale=1">

More information: https://css-tricks.com/snippets/html/responsive-meta-tag/

Because the viewport tag must be added explicitly, websites are not mobile-friendly by default. The traditional Wikimedia skins (CologneBlue, Modern and Vector) were built before mobile sites and this tag existed, so they did not add it.

When viewing these skins on mobile, the content does not adapt to the device and instead appears zoomed out. One benefit is that the reader sees a design consistent with the one they see on desktop. The interface is familiar and easy enough to navigate, as the user can pinch and zoom to parts of the UI. The downside is that reading is very difficult and requires far more hand manipulation to move between sentences and paragraphs. For this reason, many search engines penalize pages that are not mobile-friendly.

Enter Minerva

The Minerva skin (and MobileFrontend before it) was introduced to allow us to start adapting our content for mobile. This turned out to be a good decision, as it kept our projects from being penalized in search rankings. However, building Minerva showed that making content mobile-friendly took more than adding a meta tag. For example, many templates used HTML elements with fixed widths that were bigger than the available space. This was notably a problem with large tables. Minerva swept many of these issues under the rug with generic fixes (for example, enforcing horizontal scrolling on tables). Minerva took a bottom-up approach, adding features only after they were mobile-friendly. The result was a minimal experience that was not popular with editors.

Timeless

Timeless was the second responsive skin added to Wikimedia wikis. It was popular with editors because, unlike Minerva, it took a top-down approach, adding features despite their shortcomings on a mobile screen. It ran into many of the same issues that Minerva had (e.g. large tables) and copied many of Minerva's solutions.

MonoBook

During the building of Timeless, the MonoBook skin was made responsive (T195625). Interestingly, this led to a lot of backlash from users (particularly on German Wikipedia), revealing that many users did not want a skin that adapted to the screen, presumably for the reasons I outlined earlier: while reading is harder, it's easier to get around a complex site. Because of this, a preference was added to allow editors to disable responsive mode (the viewport tag). This preference was later generalized to apply to all skins:

Responsive Vector

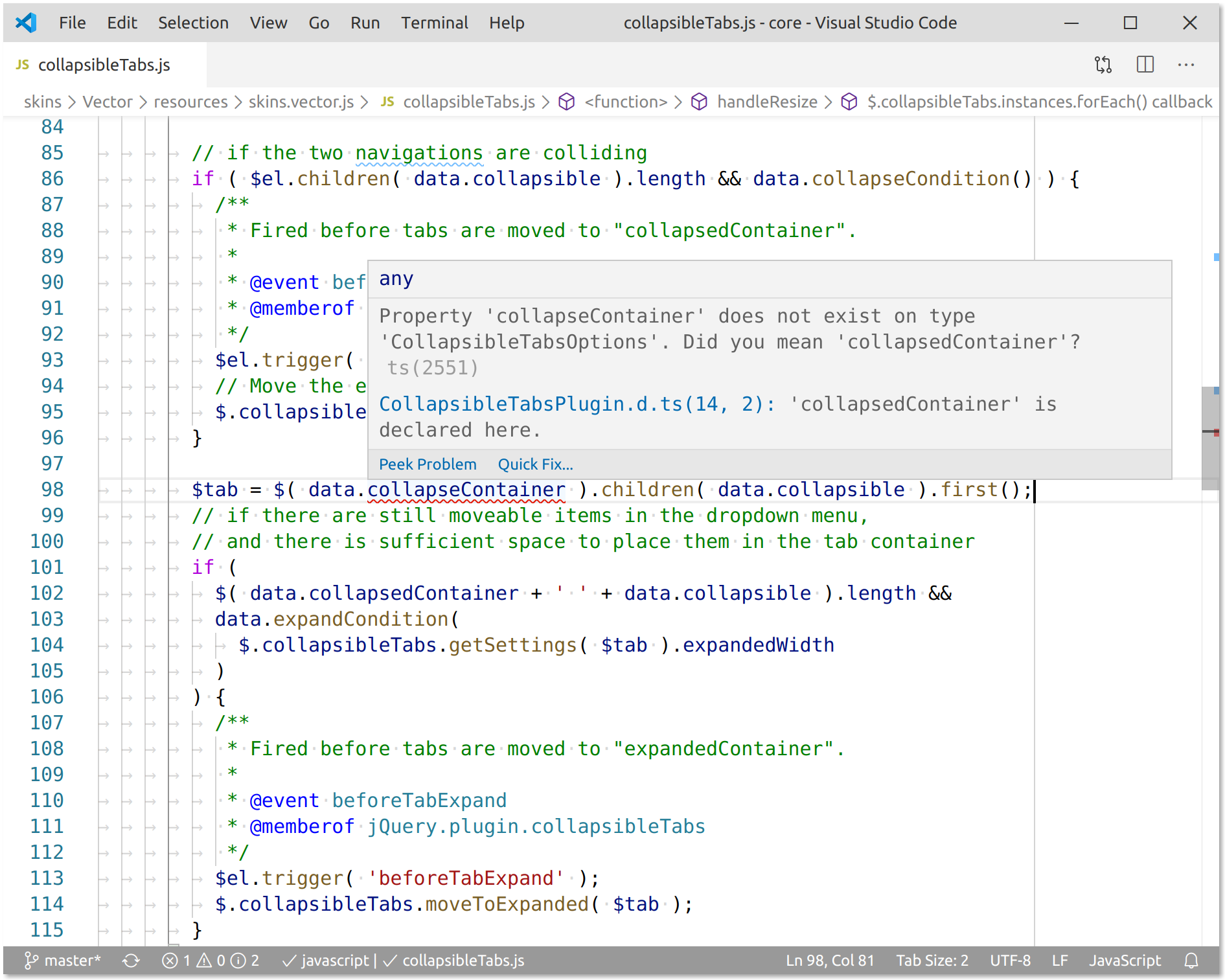

Around the same time, volunteers made several attempts to force Vector to work as a responsive skin. This was put behind a feature flag given the backlash against MonoBook's responsive mode. The feature flag saw little development, presumably because many gadgets popped up that provided the same service.

Vector 2022

The feature flag for responsive Vector was removed for legacy Vector in T242772 and efforts were redirected into making the new Vector responsive. Currently, the Vector skin can be resized comfortably down to 500px. However, it does not add a viewport tag, so it does not adapt to a mobile screen.

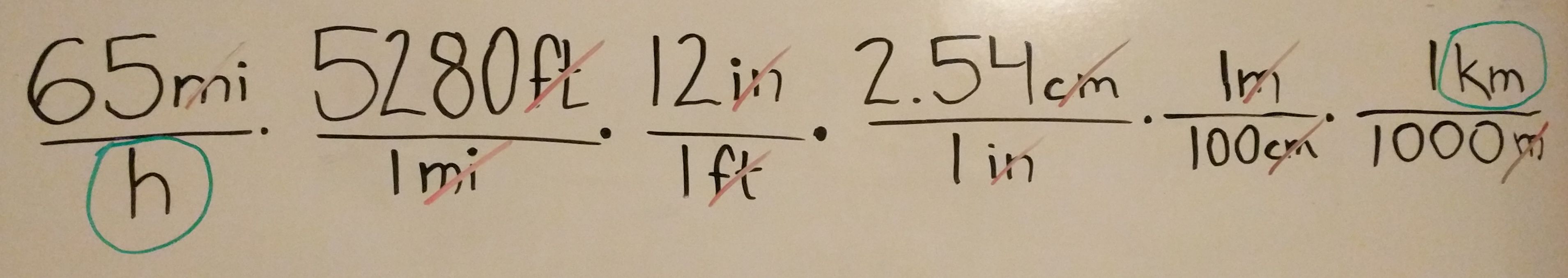

However, during the building of the table of contents, many mobile users started complaining (T306910). The reason was that when you don't define a viewport tag, the browser makes decisions for you. To avoid these kinds of issues popping up, it might make sense for us to define an explicit viewport to request content that appears scaled out at a width of our choosing. For example, we could explicitly set a width of 1200px with a zoom level of 0.25 and users would see:

If Vector were responsive, it would encourage people to think about mobile-friendly content as they edit on mobile. If editors insist on using the desktop skin on their mobile phones rather than Minerva, they have their reasons, but by not serving them a responsive skin, we are encouraging them to create content that does not work in Minerva or in other skins that adapt to the mobile device.

There is a little more work needed on our part to deal with content that cannot fit into narrow screens, e.g. 320px or anything below 500px. Currently, if the viewport tag is set, a horizontal scrollbar will be shown. For example, the header does not adapt to that breakpoint:

Decisions to be made

- Should we enable Vector 2022's responsive mode? The only downside of doing this is that some users may dislike it and need to visit preferences to opt out.

- When a user doesn't want responsive mode, should we be more explicit about what we serve them? For example, should we tell a mobile device to render at a width of 1000px with a scale of 0.25 (1/4 of the normal size)? This would avoid issues like T306910. Example code: [1] (demo)

- Should we apply the responsive mode to legacy Vector too? This would fix T291656 as it would mean the option applies to all skins.
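
The first two questions above amount to choosing which viewport value to emit for a given user. A minimal TypeScript sketch of that choice follows. The type, function, and constant names are hypothetical (this is not MediaWiki's actual API), and the fixed-width values are simply the 1000px and 0.25 from the example in the list.

```typescript
// Hypothetical names throughout; this is a sketch, not MediaWiki code.
interface ViewportChoice {
  skinIsResponsive: boolean; // the skin can adapt to narrow screens
  userOptedOut: boolean;     // the user disabled responsive mode in preferences
}

// Standard responsive value, as shown at the top of this post.
const RESPONSIVE_CONTENT = "width=device-width, initial-scale=1";
// Explicit zoomed-out desktop layout (values taken from the example above).
const FIXED_DESKTOP_CONTENT = "width=1000, initial-scale=0.25";

// Pick the content attribute for the viewport meta tag.
function chooseViewportContent(choice: ViewportChoice): string {
  if (choice.skinIsResponsive && !choice.userOptedOut) {
    return RESPONSIVE_CONTENT;
  }
  // Rather than omitting the tag and letting the browser decide,
  // request an explicit desktop-width layout.
  return FIXED_DESKTOP_CONTENT;
}

// Render the full tag for the page head.
function viewportTag(choice: ViewportChoice): string {
  return `<meta name="viewport" content="${chooseViewportContent(choice)}">`;
}

console.log(viewportTag({ skinIsResponsive: true, userOptedOut: true }));
```

The point of the sketch is that the opt-out branch still emits a tag, so the rendering no longer depends on each browser's defaults.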

[1]

<meta name="viewport" content="width=1400, initial-scale=0.22">
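
The width and scale pairs used as examples in this post are related by simple arithmetic: a layout of a given width drawn at a given scale covers roughly width × scale pixels of the device, so 1400 × 0.22 ≈ 308 and 1000 × 0.25 = 250. The TypeScript sketch below makes that relationship explicit. The function names and the 320px device width are illustrative assumptions, the width is written without a unit, and real browsers may apply further constraints when both values are set.

```typescript
// Illustrative helpers only; the names are hypothetical, not part of any skin.

// Scale at which a layout `layoutWidth` CSS pixels wide fills a screen
// `deviceWidth` CSS pixels wide, rounded to two decimal places.
function fitScale(layoutWidth: number, deviceWidth: number): number {
  return Math.round((deviceWidth / layoutWidth) * 100) / 100;
}

// Value for the viewport tag's content attribute for a fixed-width layout.
function fixedViewportContent(layoutWidth: number, deviceWidth: number): string {
  return `width=${layoutWidth}, initial-scale=${fitScale(layoutWidth, deviceWidth)}`;
}

console.log(fixedViewportContent(1400, 320)); // width=1400, initial-scale=0.23
console.log(fixedViewportContent(1000, 250)); // width=1000, initial-scale=0.25
```

Because device widths vary, any single fixed scale is a compromise: on a wider phone the same tag leaves the page slightly narrower than the screen, and on a narrower one the page overflows.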