TensorFlow is an open-source framework for machine learning and artificial intelligence developed by Google Brain. It provides tools to build, train and deploy models across different platforms, especially for deep learning tasks.

- Supports a wide range of applications such as NLP, computer vision, time series forecasting and reinforcement learning

- Enables scalable model development and deployment across devices

Key Features

1. Scalability

TensorFlow is designed to scale across a variety of platforms from desktops and servers to mobile devices and embedded systems. It supports distributed computing allowing models to be trained on large datasets efficiently.
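As a rough illustration of the distributed training mentioned above, the sketch below uses `tf.distribute.MirroredStrategy`, which replicates a model across the GPUs of a single machine. The synthetic data, layer sizes and batch size are assumptions chosen only to keep the example small; on a machine without GPUs the strategy is expected to fall back to a single CPU replica.

```python
import numpy as np
import tensorflow as tf

# MirroredStrategy keeps one copy of the model per visible GPU on this machine
# and averages gradients across the copies after each step.
strategy = tf.distribute.MirroredStrategy()
print("Replicas in sync:", strategy.num_replicas_in_sync)

# Variables have to be created inside the strategy scope so they are mirrored.
with strategy.scope():
    model = tf.keras.Sequential([
        tf.keras.Input(shape=(8,)),
        tf.keras.layers.Dense(16, activation="relu"),
        tf.keras.layers.Dense(1),
    ])
    model.compile(optimizer="adam", loss="mse")

# Synthetic regression data (an assumption, for illustration only).
features = np.random.rand(256, 8).astype("float32")
targets = features.sum(axis=1, keepdims=True)

# The global batch of 64 examples is split across the available replicas.
history = model.fit(features, targets, batch_size=64, epochs=2, verbose=0)
print("Loss per epoch:", history.history["loss"])
```

The training code itself is unchanged; only model creation moves inside `strategy.scope()`, which is why this approach is a common first step before multi-machine setups.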

2. Comprehensive Ecosystem

TensorFlow offers a broad set of tools and libraries including:

- TensorFlow Core: The base API for TensorFlow that allows users to define models, build computations and execute them.

- Keras: A high-level API for building neural networks that runs on top of TensorFlow, simplifying model development.

- TensorFlow Lite: A lightweight solution for deploying models on mobile and embedded devices.

- TensorFlow.js: A library for running machine learning models directly in the browser using JavaScript.

- TensorFlow Extended (TFX): An end-to-end platform for building and running production machine learning pipelines, covering steps such as data validation, training, evaluation and deployment.

- TensorFlow Hub: A repository of pre-trained models that can be easily integrated into applications.

3. Automatic Differentiation (tf.GradientTape)

TensorFlow computes gradients automatically. Operations executed inside a tf.GradientTape context are recorded, and the tape then returns the gradient of a result with respect to the model's trainable variables. This removes the need to derive backpropagation by hand and is what makes optimization techniques like gradient descent practical.
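A minimal sketch of automatic differentiation with `tf.GradientTape` follows. The variable `w`, the toy loss and the learning rate are assumptions made for illustration; a real model would have many variables and a data-dependent loss.

```python
import tensorflow as tf

# A single trainable variable standing in for a model weight.
w = tf.Variable(3.0)

# Operations run inside the tape context are recorded for differentiation.
with tf.GradientTape() as tape:
    loss = w ** 2 + 2.0 * w  # analytically, d(loss)/dw = 2w + 2

# Ask the tape for the gradient of the loss with respect to w.
grad = tape.gradient(loss, w)
print("Gradient at w = 3:", grad.numpy())  # 2 * 3 + 2 = 8.0

# One manual gradient descent step: w <- w - learning_rate * gradient.
learning_rate = 0.1
w.assign_sub(learning_rate * grad)
print("Updated w:", w.numpy())  # approximately 2.2
```

Keras optimizers perform the same update internally; writing it by hand only makes the role of the gradient visible.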

4. Multi-language Support

TensorFlow is used primarily from Python, but it also provides APIs for other languages such as C++, Java and JavaScript, making it accessible to developers with different programming backgrounds.

5. TensorFlow Serving and TensorFlow Model Optimization

The TensorFlow ecosystem includes TensorFlow Serving for serving models in production environments and the TensorFlow Model Optimization Toolkit for optimizing them for inference, which lowers latency and improves efficiency.
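To make the serving side more concrete, the sketch below exports a model in the SavedModel format, which is the format TensorFlow Serving loads. The `Scaler` class, the model name `scaler` and the temporary export directory are hypothetical names introduced for this example; the numbered `1` subdirectory follows the versioned layout that TensorFlow Serving expects.

```python
import os
import tempfile

import tensorflow as tf


class Scaler(tf.Module):
    """Hypothetical toy model: multiplies its input by a stored factor."""

    def __init__(self):
        super().__init__()
        self.factor = tf.Variable(2.0)

    # A fixed input signature lets the function be exported as a graph.
    @tf.function(input_signature=[tf.TensorSpec([None], tf.float32)])
    def __call__(self, x):
        return self.factor * x


model = Scaler()

# Layout assumed here: <base_path>/<model_name>/<version>/
export_dir = os.path.join(tempfile.mkdtemp(), "scaler", "1")
tf.saved_model.save(
    model, export_dir,
    signatures=model.__call__.get_concrete_function())

# Reload the export to check that it works without the Python class.
restored = tf.saved_model.load(export_dir)
result = restored(tf.constant([1.0, 2.0, 3.0]))
print(result.numpy())
```

A serving process would then be pointed at the `scaler` directory and pick up the highest version number it finds there.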

TensorFlow Architecture

The architecture of TensorFlow revolves around the concept of a computational graph which is a network of nodes (operations) and edges (data). Here's a breakdown of key components:

- Tensors: Tensors are the fundamental units of data in TensorFlow. They are multi-dimensional arrays with a fixed data type. A tensor can have zero dimensions (scalar), one dimension (vector), two dimensions (matrix) or more.

- Graph: A TensorFlow graph represents a computation as a flow of tensors through a series of operations. Each operation in the graph performs a specific mathematical function on the input tensors such as matrix multiplication, addition or activation.

- Eager execution: In TensorFlow 2.x, operations run immediately by default, without requiring a separate session. A Python function can still be traced into a graph by wrapping it with tf.function.
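The three components above can be sketched in a few lines. The tensor values and the function name `affine` are assumptions made for illustration.

```python
import tensorflow as tf

# Tensors of increasing rank (number of dimensions).
scalar = tf.constant(5.0)                       # rank 0
vector = tf.constant([1.0, 2.0, 3.0])           # rank 1
matrix = tf.constant([[1.0, 2.0], [3.0, 4.0]])  # rank 2
print(scalar.shape, vector.shape, matrix.shape)

# Eager execution: the result is available as soon as the line runs.
product = tf.matmul(matrix, matrix)
print(product.numpy())

# tf.function traces the Python function into a graph of operations.
@tf.function
def affine(x, w, b):
    return tf.matmul(x, w) + b

bias = tf.constant([1.0, 1.0])
out = affine(matrix, matrix, bias)
print(out.numpy())

# Inspect the traced graph: each node is an operation, each edge a tensor.
graph = affine.get_concrete_function(matrix, matrix, bias).graph
op_types = [op.type for op in graph.get_operations()]
print(op_types)
```

The list of operation types printed at the end is the graph view of the same computation that ran eagerly a few lines earlier.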

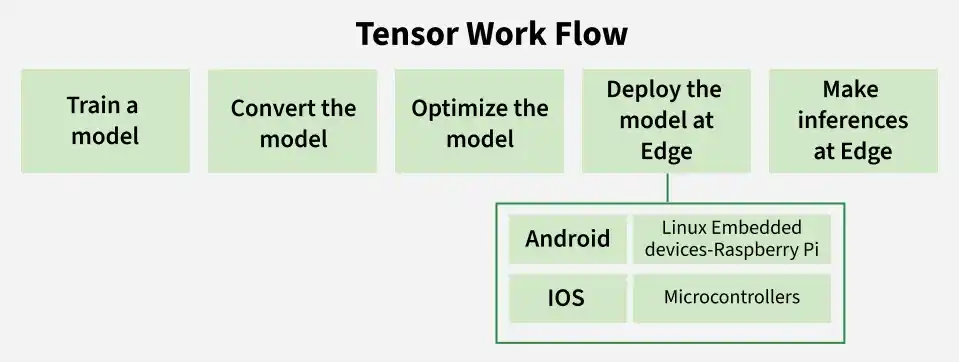

TensorFlow Workflow

Building a machine learning model in TensorFlow and deploying it to an edge device typically involves the following steps:

Step 1: Train a Model

- Use TensorFlow to build and train a machine learning model on a platform such as a PC or the cloud.

- Use datasets relevant to the application, such as images, text or sensor data.

- Evaluate and validate the model to ensure high accuracy before deployment.

Step 2: Convert the Model

- Convert the trained model into TensorFlow Lite (.tflite) format using the TFLite Converter.

- This conversion prepares the model for resource-constrained edge environments.

- The converter accepts several input formats: SavedModels, Keras models and concrete functions.
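The conversion step can be sketched as follows, here using the concrete-function input path. `TinyClassifier` and the output file name `tiny_classifier.tflite` are hypothetical; an untrained toy model stands in for the trained model from Step 1.

```python
import os
import tempfile

import tensorflow as tf


class TinyClassifier(tf.Module):
    """Hypothetical stand-in for a trained model: one dense layer + softmax."""

    def __init__(self):
        super().__init__()
        self.w = tf.Variable(tf.ones([4, 2]))
        self.b = tf.Variable(tf.zeros([2]))

    @tf.function(input_signature=[tf.TensorSpec([1, 4], tf.float32)])
    def __call__(self, x):
        return tf.nn.softmax(tf.matmul(x, self.w) + self.b)


model = TinyClassifier()

# Build a converter from the traced (concrete) function of the model.
concrete_fn = model.__call__.get_concrete_function()
converter = tf.lite.TFLiteConverter.from_concrete_functions([concrete_fn], model)

# convert() returns the serialized model as a bytes object.
tflite_model = converter.convert()

# Write the flatbuffer to disk so it can be shipped to a device.
tflite_path = os.path.join(tempfile.mkdtemp(), "tiny_classifier.tflite")
with open(tflite_path, "wb") as f:
    f.write(tflite_model)
print("Wrote", len(tflite_model), "bytes to", tflite_path)
```

For a Keras model or a SavedModel directory, `tf.lite.TFLiteConverter.from_keras_model` and `tf.lite.TFLiteConverter.from_saved_model` play the same role as `from_concrete_functions` here.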

Step 3: Optimize the Model

- Apply model optimization techniques such as quantization, pruning or weight clustering.

- These techniques reduce the model size, improve inference speed and shrink the memory footprint.

- This is crucial for running models efficiently on mobile, embedded or microcontroller devices.
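Of the techniques listed above, post-training dynamic range quantization is the simplest to sketch because it only needs a converter flag. The `DenseBlock` model and the helper `convert` are hypothetical names; the weight matrix is made fairly large on the assumption that very small tensors may be left unquantized. Pruning and weight clustering require the separate TensorFlow Model Optimization Toolkit and are not shown.

```python
import tensorflow as tf


class DenseBlock(tf.Module):
    """Hypothetical model with a 256x256 weight matrix to quantize."""

    def __init__(self):
        super().__init__()
        self.w = tf.Variable(tf.random.normal([256, 256], seed=0))

    @tf.function(input_signature=[tf.TensorSpec([1, 256], tf.float32)])
    def __call__(self, x):
        return tf.nn.relu(tf.matmul(x, self.w))


def convert(model, quantize):
    """Convert the model to TFLite, optionally with default optimizations."""
    fn = model.__call__.get_concrete_function()
    converter = tf.lite.TFLiteConverter.from_concrete_functions([fn], model)
    if quantize:
        # Optimize.DEFAULT enables dynamic range quantization of the weights.
        converter.optimizations = [tf.lite.Optimize.DEFAULT]
    return converter.convert()


model = DenseBlock()
float_model = convert(model, quantize=False)
quant_model = convert(model, quantize=True)

print("Float model size (bytes):    ", len(float_model))
print("Quantized model size (bytes):", len(quant_model))
```

Because 32-bit float weights are stored as 8-bit integers, the quantized file should come out noticeably smaller; accuracy should be re-checked after quantization, since the saving is not free.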

Step 4: Deploy the Model

- Deploy the optimized .tflite model to edge devices such as Android and iOS phones, Linux-based embedded systems like the Raspberry Pi and microcontrollers like Arm Cortex-M.

- Ensure compatibility with the TensorFlow Lite runtime for the target platform.

Step 5: Make Inferences at the Edge

- Run real-time predictions directly on the edge device using the TFLite Interpreter.

- Enables low-latency, offline inference without relying on cloud computation.

- Supports use cases like image recognition, voice detection and sensor data analysis.
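The inference step can be sketched with the Python TFLite Interpreter. This assumes a TensorFlow version in which `tf.lite.Interpreter` is available; `TinyClassifier` and the sample input are hypothetical, and the model is converted in memory so the example is self-contained.

```python
import numpy as np
import tensorflow as tf


class TinyClassifier(tf.Module):
    """Hypothetical stand-in for a trained model: one dense layer + softmax."""

    def __init__(self):
        super().__init__()
        self.w = tf.Variable(tf.ones([4, 2]))
        self.b = tf.Variable(tf.zeros([2]))

    @tf.function(input_signature=[tf.TensorSpec([1, 4], tf.float32)])
    def __call__(self, x):
        return tf.nn.softmax(tf.matmul(x, self.w) + self.b)


model = TinyClassifier()
concrete_fn = model.__call__.get_concrete_function()
converter = tf.lite.TFLiteConverter.from_concrete_functions([concrete_fn], model)
tflite_model = converter.convert()

# Load the serialized model and allocate memory for its tensors.
interpreter = tf.lite.Interpreter(model_content=tflite_model)
interpreter.allocate_tensors()
input_details = interpreter.get_input_details()[0]
output_details = interpreter.get_output_details()[0]

# Feed one sample, run the model, and read back the prediction.
sample = np.array([[0.1, 0.2, 0.3, 0.4]], dtype=np.float32)
interpreter.set_tensor(input_details["index"], sample)
interpreter.invoke()
probabilities = interpreter.get_tensor(output_details["index"])
print(probabilities)
```

On a device, the same set-tensor, invoke, get-tensor sequence is what the platform-specific TensorFlow Lite runtimes expose, with the model loaded from the .tflite file instead of from memory.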

Building a Simple Model with TensorFlow

1. The example below loads the MNIST dataset and preprocesses the images by flattening each 28 x 28 image into a 784-element vector and scaling the pixel values to the range 0 to 1. It then builds a simple neural network using the Keras Sequential API with two layers:

- A Dense hidden layer with 128 units and ReLU activation

- A Dense output layer with 10 units and softmax activation

2. Finally, the model is compiled with the Adam optimizer and sparse categorical cross-entropy loss, trained for 5 epochs and evaluated on the test set.

3. Install the tensorflow library by running the following command in a command prompt:

pip install tensorflow

4. Implementation

    import tensorflow as tf
    from tensorflow.keras.datasets import mnist
    from tensorflow.keras.layers import Dense
    from tensorflow.keras.models import Sequential

    # Load MNIST: 28 x 28 grayscale images of handwritten digits, labels 0-9
    (train_images, train_labels), (test_images, test_labels) = mnist.load_data()

    # Flatten each image to a 784-element vector and scale pixels to [0, 1]
    train_images = train_images.reshape(
        (train_images.shape[0], 28 * 28)).astype('float32') / 255
    test_images = test_images.reshape(
        (test_images.shape[0], 28 * 28)).astype('float32') / 255

    # Two-layer network: a ReLU hidden layer and a 10-class softmax output
    model = Sequential([
        Dense(128, activation='relu', input_shape=(28 * 28,)),
        Dense(10, activation='softmax')
    ])

    # Integer labels, so use the sparse variant of categorical cross-entropy
    model.compile(optimizer='adam',
                  loss='sparse_categorical_crossentropy',
                  metrics=['accuracy'])

    # Train for 5 passes over the training set
    model.fit(train_images, train_labels, epochs=5)

    # Measure loss and accuracy on the held-out test set
    test_loss, test_acc = model.evaluate(test_images, test_labels)
    print(f"Test accuracy: {test_acc}")

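Output: the script prints the training loss and accuracy for each epoch, followed by the test accuracy. The exact values vary from run to run.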

TensorFlow vs Other Frameworks

TensorFlow is often compared to other popular machine learning frameworks such as PyTorch, Keras and scikit-learn. Here’s how TensorFlow stands out:

| Feature | TensorFlow | PyTorch | Keras | scikit-learn |
|---|---|---|---|---|
| Primary Focus | Deep learning and production | Research & experimentation | High-level DL API (on TensorFlow) | Traditional ML (SVM, trees, regression) |
| Deployment | Strong (Lite, JS, Serving) | Limited vs TensorFlow | Uses TensorFlow pipeline | Minimal deployment focus |
| Ease of Use | Moderate, needs setup | Easy & flexible | Very easy (beginner-friendly) | Simple for small ML tasks |
| Flexibility | Good for prod, less for research | Highly flexible | Limited vs raw TF | Limited (no deep learning) |
| Use Cases | NLP, CV, RL, production | Research, prototyping | Quick DL model building | Classification, clustering, regression |
| Neural Networks | Strong (CNN, RNN, RL) | Strong (RNN, GAN, LSTM) | Simplified NN building | Not for deep learning |
| Learning Curve | Steep but powerful | Easier (research), complex for prod | Easiest | Easy |
| Community | Large and growing | Growing, research-focused | Part of TF ecosystem | Large (classical ML only) |