Artificial intelligence has matured quickly. Boardrooms now discuss deployment timelines instead of proofs of concept. Vendors promise autonomy. Analysts predict disruption.

But here is the real question you should ask in 2026:

Are enterprises truly ready for fully autonomous systems, or is Human-in-the-Loop AI still the only practical path?

The answer is not dramatic or overly simple. It depends on how much risk your organization can handle, the context in which AI operates, the strength of your governance framework, and how much error your business can realistically tolerate.

If you are responsible for technology strategy, compliance, or operational resilience, this distinction affects real outcomes, not just headlines. Getting this balance wrong can create operational and reputational consequences that are difficult to reverse.

Let us break it down clearly.

The Two Competing Models

Before discussing what is realistic, you need to understand the structural difference between these approaches.

1. Human-in-the-Loop AI

In this model, humans remain embedded in the decision cycle. The AI system generates outputs, predictions, classifications, or actions. A human reviews, approves, adjusts, or overrides.

This approach prioritizes oversight.

2. Fully Autonomous AI

Here, AI systems operate independently within defined boundaries. They sense, decide, and act without real-time human intervention. Humans step in only if something fails or if governance triggers an alert.

This approach prioritizes efficiency.

Now let us compare them side by side.

Comparative Framework

| Dimension | Human-in-the-Loop AI | Autonomous AI Systems |
| --- | --- | --- |
| Decision Authority | Shared between AI and human | Fully delegated to AI |
| Risk Exposure | Lower, mitigated by review | Higher, dependent on safeguards |
| Speed | Moderate | High |
| Scalability | Slower due to human dependency | Highly scalable |
| Compliance Alignment | Easier to audit | Complex, requires strong logging |
| Error Correction | Immediate human intervention | Automated or post-event review |
| Use Case Maturity in 2026 | Widely adopted | Selectively adopted |

This is not a theoretical debate. Enterprises are making investment decisions based on these trade-offs right now. Budgets, vendor choices, and governance models are being shaped by how leaders balance autonomy with control, and those decisions directly influence risk and accountability.

Why Human Oversight Still Dominates in 2026

Despite progress in AI governance, enterprises hesitate to hand over full control. The reasons are structural, not emotional. Leaders understand that accountability, regulatory exposure, and operational risk do not disappear just because decision-making is automated.

1. Regulatory Pressure

Financial services, healthcare, insurance, and critical infrastructure operate under strict regulatory oversight. You cannot justify a compliance failure by saying the algorithm made a mistake.

Regulators demand accountability from humans, not from machines.

2. Ethical Ambiguity

AI can optimize for efficiency. It struggles with context, fairness nuances, and business ethics when trade-offs are subtle.

For example:

- Credit risk assessment

- Insurance claim approvals

- Hiring shortlists

- Fraud investigations

These decisions require discretion. They involve context, judgment, and sometimes empathy that cannot always be reduced to data patterns. Pure automation often oversimplifies, forcing complex human situations into rigid algorithmic rules that may miss important nuances.

3. Trust Deficit

You cannot expect employees or customers to immediately trust a black-box system.

Technology adoption requires gradual exposure. When humans validate outputs, confidence grows. If you remove that layer too quickly, you create internal resistance.

Where Fully Autonomous AI Is Gaining Ground

That said, complete autonomy is not fiction. It already exists in bounded, low-risk domains. In controlled environments where variables are predictable and decision parameters are clearly defined, autonomous systems already operate with minimal human intervention.

Here is where Autonomous AI systems realistically operate in 2026:

- Predictive server scaling in cloud environments

- Real-time fraud transaction blocking within defined thresholds

- Supply chain routing optimization

- Algorithmic trading within guardrails

- Cybersecurity threat containment playbooks

These systems work because:

- Boundaries are predefined.

- Escalation rules exist.

- Risk appetite is calculated.

- Data patterns are stable.

Notice the pattern. Autonomy works best when the environment is structured and measurable.
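The idea of predefined boundaries plus escalation rules can be sketched in a few lines of Python. This is a minimal illustration, not a production design: the `ScalingGuardrail` class, the `decide_scaling` function, and the numeric limits are all hypothetical names and values chosen for the example, using predictive server scaling as the use case.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ScalingGuardrail:
    """Hypothetical boundaries for an autonomous scaling agent."""
    min_servers: int
    max_servers: int
    max_step: int  # largest change allowed in a single action


def decide_scaling(current: int, proposed: int, rail: ScalingGuardrail) -> str:
    """Return 'apply' if the proposal stays inside the guardrail, else 'escalate'."""
    within_bounds = rail.min_servers <= proposed <= rail.max_servers
    within_step = abs(proposed - current) <= rail.max_step
    return "apply" if within_bounds and within_step else "escalate"


# Illustrative limits only; real values would come from a risk assessment.
rail = ScalingGuardrail(min_servers=2, max_servers=20, max_step=4)
print(decide_scaling(current=6, proposed=9, rail=rail))   # inside bounds and step limit
print(decide_scaling(current=6, proposed=30, rail=rail))  # exceeds max_servers
```

The system acts alone only while the proposal is both inside the allowed range and a small enough change. Anything else goes to a human, which is the escalation rule in code form.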

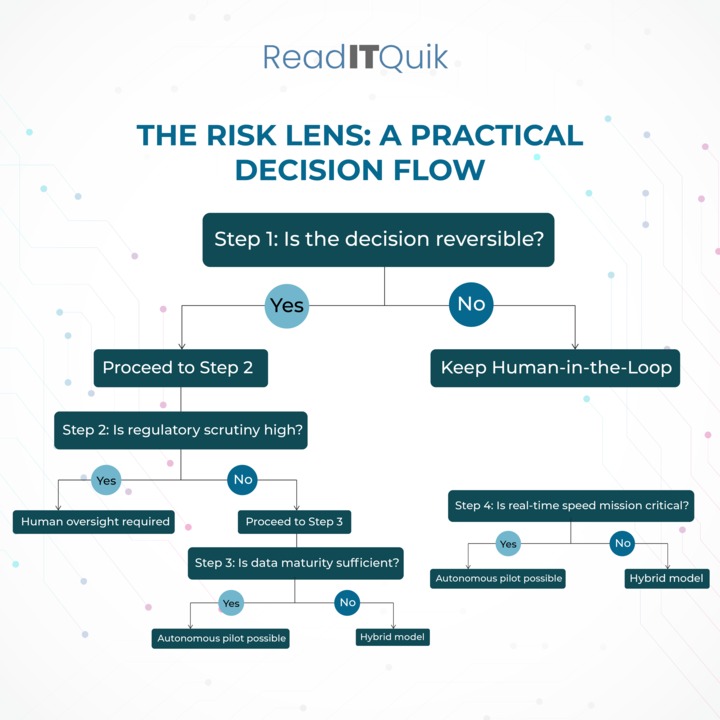

The Risk Lens: A Practical Decision Flow

When deciding between oversight and autonomy, CIOs increasingly apply a structured evaluation process.

Below is a simplified decision flow:

This structured approach prevents emotional decisions driven by vendor marketing.

The Cost Equation

Many assume autonomy reduces cost. That assumption is incomplete. While automation can lower manual effort, it introduces new layers of infrastructure, monitoring, and governance expenses that are often underestimated.

Let us analyze realistically.

Human-in-the-Loop Costs

- Salaries for review teams

- Training expenses

- Slower throughput

- Operational delays

Autonomous AI Costs

- Infrastructure scaling

- Monitoring frameworks

- Explainability tooling

- Audit logs

- Incident response teams

- Model retraining cycles

When you calculate the total cost of ownership, fully autonomous AI does not automatically mean cheaper. It means cost shifts from labor to governance and infrastructure.

The Governance Imperative

In 2026, conversations have shifted from model accuracy to AI governance maturity.

Enterprises now evaluate:

- Model transparency

- Bias detection mechanisms

- Escalation protocols

- Version control documentation

- Explainability reports

- Audit readiness

Without governance maturity, autonomy becomes a liability.

Below is a governance maturity checklist many enterprises now follow:

AI Governance Readiness Checklist

- Clear accountability framework

- Documented model decision boundaries

- Real-time monitoring dashboards

- Escalation hierarchy defined

- Bias testing conducted quarterly

- Regulatory mapping completed

- Incident simulation drills executed

If you cannot check most of these boxes, full autonomy is premature.
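One way to make "most of these boxes" concrete is to score the checklist. The sketch below is an assumption-laden illustration: the `readiness` function is hypothetical, and the 75 percent cutoff is an arbitrary stand-in for "most" that each organization would set for itself.

```python
from __future__ import annotations

# The seven checklist items from the list above.
CHECKLIST = [
    "Clear accountability framework",
    "Documented model decision boundaries",
    "Real-time monitoring dashboards",
    "Escalation hierarchy defined",
    "Bias testing conducted quarterly",
    "Regulatory mapping completed",
    "Incident simulation drills executed",
]


def readiness(completed: set[str], required_share: float = 0.75) -> tuple[float, bool]:
    """Return the share of checklist items completed and whether it meets the cutoff.

    required_share is an assumed threshold, not a figure from any standard.
    """
    done = sum(1 for item in CHECKLIST if item in completed)
    share = done / len(CHECKLIST)
    return share, share >= required_share


share, ready = readiness({
    "Clear accountability framework",
    "Escalation hierarchy defined",
})
print(f"{share:.0%} complete, ready for full autonomy: {ready}")
```

A simple score like this treats every item as equally important. In practice some items, such as accountability, may be hard prerequisites rather than one vote among seven.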

Industry Snapshot: Adoption Reality in 2026

| Industry | Dominant Model | Rationale |
| --- | --- | --- |
| Banking | Human-in-the-Loop | Regulatory scrutiny |
| Healthcare | Hybrid | Ethical sensitivity |
| Retail | Increasing autonomy | Operational speed |
| Manufacturing | Autonomous AI systems | Predictive optimization |
| Insurance | Human oversight | Claims complexity |
| Cybersecurity | High autonomy | Speed requirement |

The pattern is clear. Risk tolerance determines architecture.

The Human Element You Cannot Ignore

There is another dimension that leaders often overlook: workforce transformation. Technology decisions do not operate in isolation from people, skills, and organizational culture.

If you eliminate humans from decision cycles too aggressively, you introduce real operational risks:

- Skill erosion

- Overdependence on systems

- Reduced institutional knowledge

- Morale decline

- Internal pushback

When employees stop exercising judgment, their ability to intervene during failures weakens over time. That creates vulnerability during crises.

Smart organizations in 2026 do not remove humans from the equation. They redesign roles and elevate them. Instead of reviewing every transaction, employees supervise exception patterns. Instead of approving each recommendation, they audit trends and challenge anomalies. Oversight shifts from repetitive validation to strategic supervision.

This shift represents the true evolution of Enterprise AI strategy.

Hybrid Intelligence: The Realistic Middle Ground

The debate between oversight and autonomy is gradually dissolving. Enterprises are embracing hybrid models.

Here is what hybrid intelligence looks like in practice:

- AI handles 80 percent of routine decisions.

- Humans review high-risk exceptions.

- Autonomous actions occur within confidence thresholds.

- Escalation triggers activate instantly.

This model preserves speed while protecting accountability.
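The routing logic behind such a hybrid model can be sketched as follows. This is a minimal example under stated assumptions: the `Decision` class, the `route` function, and the 0.9 confidence threshold are hypothetical, and a real system would calibrate the threshold against observed error rates.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Decision:
    case_id: str
    confidence: float  # model confidence in [0, 1]
    high_risk: bool    # flagged by a separate, assumed risk classification


def route(decision: Decision, threshold: float = 0.9) -> str:
    """Send high-risk or low-confidence cases to a human; automate the rest."""
    if decision.high_risk:
        return "human_review"   # high-risk exceptions always get a reviewer
    if decision.confidence >= threshold:
        return "auto_execute"   # routine case inside the confidence threshold
    return "human_review"       # low confidence triggers escalation


cases = [
    Decision("A-1", confidence=0.97, high_risk=False),
    Decision("A-2", confidence=0.97, high_risk=True),
    Decision("A-3", confidence=0.62, high_risk=False),
]
for case in cases:
    print(case.case_id, route(case))
```

Checking risk before confidence is a deliberate choice in this sketch: a confident model does not override the rule that high-risk decisions need human review.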

In 2026, hybrid intelligence is not a compromise. It is optimization.

Technical Maturity Factors That Influence Autonomy

Before you scale autonomy, evaluate these technical pillars:

1. Data Integrity

Garbage in still equals garbage out. Fully autonomous systems amplify bad data faster than humans can correct it.

2. Model Explainability

If your AI cannot explain why it acted, regulators and executives will not accept it.

3. Monitoring Infrastructure

You need:

- Drift detection

- Anomaly alerts

- Real-time dashboards

- Automatic rollback mechanisms
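As one illustration of drift detection feeding a rollback decision, the sketch below computes the Population Stability Index (PSI), a common drift metric, between a baseline distribution and a recent one. The function names and the binned inputs are assumptions for the example. The 0.1 and 0.25 cutoffs are a widely quoted rule of thumb rather than a standard, so treat them as starting points.

```python
import math


def psi(expected: list[float], actual: list[float], eps: float = 1e-6) -> float:
    """Population Stability Index between two binned distributions.

    Each list holds the share of observations per bin and should sum to 1.
    """
    if len(expected) != len(actual):
        raise ValueError("distributions must use the same bins")
    total = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, eps), max(a, eps)  # avoid log(0) for empty bins
        total += (a - e) * math.log(a / e)
    return total


def drift_status(score: float) -> str:
    """Map a PSI score to an action using rule-of-thumb cutoffs (assumed)."""
    if score < 0.1:
        return "stable"
    if score < 0.25:
        return "watch"      # raise an anomaly alert for human attention
    return "rollback"       # shift large enough to revert to a prior model


baseline = [0.25, 0.25, 0.25, 0.25]
recent = [0.10, 0.20, 0.30, 0.40]
score = psi(baseline, recent)
print(round(score, 3), drift_status(score))
```

In a hybrid setup, the "watch" state is a natural place to bring a human back into the loop before the system reaches the point where an automatic rollback fires.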

4. Scenario Simulation

Run stress tests. Simulate edge cases. Conduct red-team exercises.

Autonomy without simulation is reckless.

The Ethical Trade-Off

Autonomous AI optimizes measurable metrics such as speed, accuracy, and cost. Humans interpret nuance. They understand context, intent, and ethical implications that structured data alone may not fully capture.

Consider this scenario: An AI denies insurance claims based purely on statistical fraud likelihood. It achieves efficiency targets. However, it unintentionally discriminates against specific demographics due to historical data bias.

A human reviewer might notice contextual irregularities. An autonomous system might not.

Efficiency and fairness sometimes conflict. This is where oversight remains indispensable.

Enterprise Decision Matrix for 2026

Below is a simplified classification model many CIOs now use:

| Decision Type | Risk Level | Frequency | Recommended Model |
| --- | --- | --- | --- |
| Routine operational scaling | Low | High | Autonomous |
| Financial approvals | High | Moderate | Human-in-the-Loop |
| Security containment | Medium | High | Autonomous with guardrails |
| Strategic vendor selection | High | Low | Human-driven |
| Customer service ticket triage | Low | High | Hybrid |

You do not choose one model for the entire organization. You choose per function.
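A per-function choice can be recorded as a simple lookup. The sketch below encodes the matrix above as data. The `model_for` helper and its fallback to Human-in-the-Loop for unclassified workflows are assumptions made for the example, not part of the matrix.

```python
# Recommended model per decision type, taken from the matrix above.
RECOMMENDED_MODEL = {
    "Routine operational scaling": "Autonomous",
    "Financial approvals": "Human-in-the-Loop",
    "Security containment": "Autonomous with guardrails",
    "Strategic vendor selection": "Human-driven",
    "Customer service ticket triage": "Hybrid",
}


def model_for(decision_type: str) -> str:
    """Look up the recommended model for a workflow.

    Unclassified workflows fall back to Human-in-the-Loop. That fallback is a
    conservative assumption for this sketch.
    """
    return RECOMMENDED_MODEL.get(decision_type, "Human-in-the-Loop")


print(model_for("Security containment"))
print(model_for("New unclassified workflow"))
```

The lookup is keyed by decision type rather than computed from risk and frequency because two rows in the matrix share the same risk level and frequency yet receive different recommendations. Risk and frequency inform the choice, but the decision is made function by function.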

What the Hype Gets Wrong

Market narratives often suggest that human oversight slows innovation. That claim ignores reality.

Oversight builds resilience.

In fact, organizations that rushed into automation without proper AI governance frameworks are now retrofitting controls. That is expensive and reputationally risky.

Pragmatic leaders understand that autonomy is not a badge of sophistication. It is a calculated progression.

The Realistic Outlook for 2026

Here is the grounded prediction:

- Fully autonomous AI will expand in infrastructure management, cybersecurity automation, and operational analytics.

- Human-in-the-Loop AI will dominate financial decisions, healthcare interventions, compliance-sensitive workflows, and customer-impacting actions.

- Hybrid intelligence models will become the default architecture for large enterprises.

Autonomy will grow. Oversight will not disappear.

The debate will shift from whether to automate to how much to automate safely.

What You Should Do Now

If you lead IT or business strategy, take these actions:

- Audit your current AI deployments.

- Classify workflows by risk exposure.

- Strengthen governance before expanding autonomy.

- Invest in monitoring and explainability.

- Train your workforce to supervise AI rather than compete with it.

Do not chase full autonomy because competitors claim they have achieved it. Evaluate your readiness honestly.

Why This Conversation Matters

Technology no longer operates in isolation. IT decisions shape revenue, compliance posture, customer trust, and brand equity.

If your AI strategy misaligns with business risk tolerance, the consequences extend beyond technical failure.

That is precisely why nuanced discussions around Human-in-the-Loop AI, Autonomous AI systems, and Enterprise AI strategy deserve careful analysis rather than surface-level enthusiasm.

Conclusion

In 2026, realism beats ambition.

AI will continue advancing. Models will grow smarter. Infrastructure will scale. But enterprise maturity does not depend on removing humans. It depends on orchestrating intelligence responsibly.

The future is not human versus machine. It is a structured collaboration.

At ReadITQuik, we analyze high-impact developments in artificial intelligence, cloud, cybersecurity, analytics, and enterprise architecture with one objective: to help you make informed business technology decisions that align with measurable outcomes. Since 2016, we have provided leaders and decision-makers with in-depth perspectives, curated insights, and expert commentary that cut through noise and marketing exaggeration.

If you value practical analysis over hype and want to stay ahead of strategic technology shifts, now is the right time to subscribe to our digital publication.

Subscribe to ReadITQuik and stay connected to the business impact of technology.

(This article was originally published on ReadITQuik)