Abstract

An important aim in psychiatry is to establish valid and reliable associations linking profiles of brain functioning to clinically relevant symptoms and behaviors across patient populations. To advance progress in this area, we introduce an open dataset containing behavioral and neuroimaging data from 241 individuals aged 18 to 70, comprising 148 individuals meeting diagnostic criteria for a broad range of psychiatric illnesses and a healthy comparison group of 93 individuals. These data include high-resolution anatomical scans, multiple resting-state, and task-based functional MRI runs. Additionally, participants completed over 50 psychological and cognitive assessments. Here, we detail available behavioral data as well as raw and processed MRI derivatives. Associations between data processing and quality metrics, such as head motion, are reported. Processed data exhibit classic task activation effects and canonical functional network organization. Overall, we provide a comprehensive and analysis-ready transdiagnostic dataset to accelerate the identification of illness-relevant features of brain functioning, enabling future discoveries in basic and clinical neuroscience.

Background & Summary

In recent years, there has been growing interest in establishing how alterations in brain anatomy and function may, at least in part, underpin the onset and maintenance of common psychiatric illnesses. However, progress in understanding these brain-behavior relationships in psychiatry has faced challenges partly due to a lack of open-access clinical cohorts and the restricted sampling of brain function and behavior across patient populations. While developments have been made in both brain-based explanatory and predictive models of clinically relevant behaviors, most of what we currently know about in vivo brain functioning comes from studying healthy populations. As such, the increased availability of clinically focused open-access data will facilitate the identification of brain function characteristic of symptom-relevant behavioral and cognitive domains.

To date, research on the neurobiological origins of psychiatric illness has primarily focused on discrete illness categories studied in isolation. Although researchers have historically treated patient populations as discrete entities, murky boundaries often exist between nominally distinct diagnostic categories1,2,3. Transdiagnostic data collection efforts provide the unique opportunity to identify symptom and disorder general impairments that may transcend conventional diagnostic boundaries4,5. While existing large-scale population neuroscience datasets such as the UK Biobank and Human Connectome Project6 have proven indispensable to foundational research questions in neuroscience, they predominantly consist of individuals without psychiatric illness. This narrow scope restricts the range of measurable behaviors, limiting our capacity to connect the full continuum of functioning to biological and environmental factors. Establishing these associations remains critical, especially given the high incidence of psychiatric diagnoses (approximately 23% of all adults in the United States as of 2021 and a lifetime prevalence of approximately 50% starting in adolescence7). We present openly available data from the Transdiagnostic Connectomes Project (TCP) dataset8,9 to work towards addressing these shortcomings. The TCP dataset comprises richly phenotyped behavioral and MRI data from individuals with and without psychiatric diagnoses, covering a broad spectrum of human behavior. This resource provides the opportunity to uncover the neural substrates of illness-relevant behaviors across traditional diagnostic boundaries.

Methods

In this section, we begin by describing recruitment strategies, screening procedures, and overall demographics of the TCP dataset. We then describe the clinician-administered measures, self-report questionnaires, and cognitive tests all participants completed. Finally, we describe the MRI data, detailing the acquisition parameters for each scan.

Participants

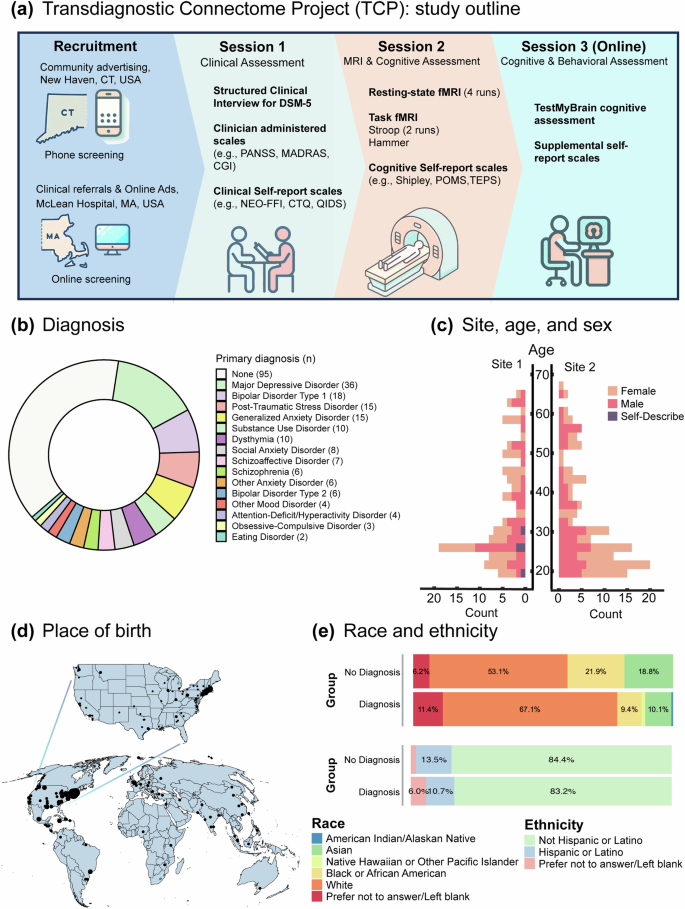

Between November 2019 and March 2023, 241 participants completed the TCP study at one of two sites within the United States of America: (1) Yale University, Department of Psychology, FAS Brain Imaging Center, located in New Haven, Connecticut and (2) McLean Hospital Brain Imaging Center, located in Belmont, Massachusetts. Participants were recruited from the community via flyers, online advertisements, and patient referrals from participating clinicians. We specifically recruited participants from psychiatric hospitals, community mental health centers, and online research platforms accessible by providers and patients to ensure high levels of transdiagnostic psychopathology. Participants provided written informed consent following guidelines established by the Yale University and McLean Hospital (Partners Healthcare) Institutional Review Boards (IRB protocol number: 2013P001798; see Supplement B for representative study consent forms from each site). This data release only contains data from participants who explicitly consented to allow unrestricted access to and use of their data for any purpose. Participants were eligible for the study if they (1) were 18–65 years old, (2) had no MRI contraindications, (3) were not colorblind, and (4) had no diagnosed neurological abnormalities. All participants underwent a Structured Clinical Interview for DSM-5 (SCID-V-RV) to assess the presence of current or past psychiatric illness. Interviews were conducted by clinical psychologists or trained research assistants who were supervised by qualified clinical psychologists. Research assistants and their clinical supervisors met weekly to discuss interview findings. The final study population included both healthy individuals without a history of psychiatric illness or treatment and individuals with a diverse set of clinical presentations, including affective and psychotic psychopathology. A diagnostic and demographic breakdown of study participants is provided in Figure 1.

Overview of the Transdiagnostic Connectome Project (TCP) dataset8,9. (a) Schematic representation of the TCP protocol, illustrating the recruitment of participants across two sites. Participants underwent three assessment sessions, including in-person clinician evaluations, self-report measures, neuroimaging, and online cognitive and self-report assessments. (b) Breakdown of primary mental health diagnoses for each individual based on the Structured Clinical Interview for DSM-5 (SCID-V-RV) criteria. (c) Bar chart displaying age distributions across the two sites, with bars colored based on the proportion of self-reported sex within each age bracket. (d) Geographic distribution of TCP participants’ place of birth. The dot size represents the number of participants from each location, with a world map at the bottom and an enlarged map of the United States of America on top. (e) Distribution of race and ethnicity according to National Institute of Health criteria, categorized for individuals with and without a mental health diagnosis.

After online or phone-based screening, participation in the TCP consisted of three study sessions (Fig. 1a): (1) an in-person clinical, demographic, and health assessment, including a diagnostic interview, clinician-administered scales, and self-report scales (see Behavioral measurements for a complete list); (2) a neuroimaging session, including anatomical, resting-state functional and task-based functional MRI (see MRI data acquisition), as well as an additional battery of self-report cognitive and behavioral measures; (3) an online cognitive and behavioral assessment, including the TestMyBrain10 cognitive assessment and a supplemental set of self-report assessments (see Behavioral measurements for a complete list).

MRI data acquisition

MRI data were acquired at both sites using a harmonized acquisition protocol on Siemens Magnetom 3 T Prisma MRI scanners with a 64-channel head coil. T1-weighted (T1-w) anatomical images were acquired using a multi-echo MPRAGE sequence with the following parameters: acquisition duration of 132 seconds, with a repetition time (TR) of 2.2 seconds, echo times (TE) of 1.5, 3.4, 5.2, and 7.0 milliseconds, a flip angle of 7°, an inversion time (TI) of 1.1 seconds, a sagittal orientation and anterior (A) to posterior (P) phase encoding. The slice thickness was 1.2 millimeters, and 144 slices were acquired. The image resolution was 1.2 mm3. A root mean square of the four images corresponding to each echo was computed to derive a single image. T2-weighted (T2-w) anatomical images were acquired with the following parameters: TR of 2800 milliseconds, TE of 326 milliseconds, a sagittal orientation, and AP phase encoding direction. The slice thickness was 1.2 millimeters, and 144 slices were acquired.

All seven functional MRI runs were acquired using the same parameters, which match the HCP protocol6,11, varying only the conditions (rest/task) and separately acquired phase encoding directions (AP/PA). For the resting-state, Stroop task, and Emotional Faces task, a total of 488, 510, and 493 volumes were acquired, respectively, all using the following MRI sequence parameters: TR = 800 milliseconds, TE = 37 milliseconds, flip angle = 52°, and voxel size = 2 mm3. A multi-band acceleration factor of 8 was applied. An auto-align pulse sequence protocol was used to align the acquisition slices of the functional scans parallel to the anterior commissure-posterior commissure (AC-PC) plane of the MPRAGE and centered on the brain. To enable the correction of the distortions in the EPI images, B0-field maps were acquired in both AP and PA directions with a standard Spin Echo sequence. Detailed MRI acquisition protocols for both sites are available in Supplement B. In total, four resting-state (2 × AP, 2 × PA), two Stroop task (1 × AP, 1 × PA), and one Emotional Faces task12 (1 × AP) acquisitions were collected. Overall, this results in four runs of 6.5 min resting-state scans (total of up to 26 min per individual), two runs of 6.8 min Stroop task scans (total of up to 13.6 min per individual) and one run of 6.6 min Emotional Faces task scan. Some participants did not complete all functional neuroimaging runs; thus the sample sizes for each run were as follows: resting-state AP run 1, n = 241; resting-state PA run 1, n = 241; resting-state AP run 2, n = 237; resting-state PA run 2, n = 235; Stroop task AP, n = 226; Stroop task PA, n = 224; and Emotional Faces task AP, n = 226.
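The run durations quoted above follow directly from the number of volumes and the TR. As a quick arithmetic check, the sketch below recomputes them from the reported values; the dictionary layout and function names are illustrative only and are not part of the released code.

```python
# Reported acquisition values: TR in seconds, volumes per run, runs per condition.
TR_SECONDS = 0.8
RUNS = {
    "rest": {"volumes": 488, "n_runs": 4},
    "stroop": {"volumes": 510, "n_runs": 2},
    "emotional_faces": {"volumes": 493, "n_runs": 1},
}


def run_minutes(volumes, tr=TR_SECONDS):
    """Duration of a single run in minutes (volumes x TR / 60)."""
    return volumes * tr / 60.0


def total_minutes(volumes, n_runs, tr=TR_SECONDS):
    """Total scan time in minutes across all runs of one condition."""
    return n_runs * run_minutes(volumes, tr)


for name, spec in RUNS.items():
    per_run = run_minutes(spec["volumes"])
    total = total_minutes(spec["volumes"], spec["n_runs"])
    print(f"{name}: {per_run:.1f} min per run, {total:.1f} min in total")
```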

Task fMRI paradigms

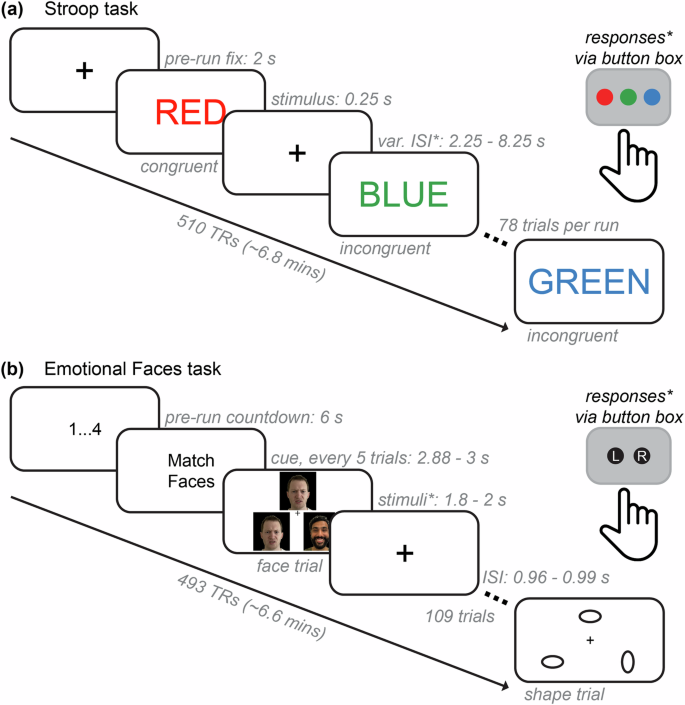

We collected functional MRI data while participants engaged in two tasks: 1) the Stroop task and 2) the Emotional Faces task. Figure 2 shows the two task paradigms, including timing specifications for fixation periods, trial durations, inter-stimulus intervals, and response collection methods. In all task fMRI runs, stimuli were presented using the PsychoPy software13 and projected onto a screen viewed through a mirror mounted atop the MRI scanner’s head coil.

Functional neuroimaging task paradigms. (a) The Stroop task paradigm. For each functional run (2 total: AP and PA), an initial fixation (2 s) was followed by the presentation of color name words (red, green, or blue) in either congruent or incongruent ink color. Participants were given a button box with three buttons that were pre-mapped to record responses as either red, green, or blue and were told to identify the color of the ink on each trial. Stimuli were presented for 250 ms, and responses were allowed within the variable interstimulus interval (var. ISI; response periods denoted by asterisks), which included a range of 2.25 through 8.25 s. There were three congruent trial types, shown for about 70% of trials (18 each), and six incongruent trial types, shown for about 30% of trials (4 each). There were 78 trials for each functional run, each approximately 6.8 minutes (or 510 TRs at 0.8 s each) in total. (b) The Emotional Faces task paradigm. There was an initial countdown of four screens showing 1 to 4 in consecutive order (6s total). Then, every six trials showed a cue that either said “Match Faces” or “Match Shapes”. Participants were given a button box with two pre-mapped buttons that recorded responses as either “left” or “right” and were told to choose which of the two (left or right) of the bottom images matched the top image in the triangle. Stimuli were presented for 1.8 through 2 s, and there was an ISI fixation between each trial. Feedback plus ISI was approximately 1 s per trial. Each of the faces and shapes trial types were shown in equal amounts (50% of trials each). There were 109 trials altogether, which lasted a total of 6.6 minutes (493 TRs). 
Note that some components of these figures have been slightly modified from their original presentation form for ease of visualization and to protect the privacy of the original models; the face stimuli shown are photographs of authors of this manuscript meant to be representative, but not exact matches, of the original images used in the experiment.

The Stroop task is a classical experimental manipulation of cognitive control–specifically, the ability to inhibit automatic responses when presented with conflicting information to accomplish a given task goal and/or context14,15,16,17,18,19. During the task, participants were presented with various color name words (e.g., red, blue, and green) that were shown in various “ink” colors (i.e., font colors) and asked to identify the color of the ink (Fig. 2a). If the ink and the written word matched (e.g., “red” shown in red font), this was a congruent trial and is relatively easy and fast to identify. However, if the ink and the written word did not match (e.g., “red” shown in blue font), this was an incongruent trial and is more difficult and slower to identify. This is known as the “Stroop effect” (or “Stroop interference”) and is quantified via reduced accuracy and slower reaction time on incongruent versus congruent trials. The Stroop effect is generally more pronounced (i.e., larger accuracy and reaction time differences between conditions) when a participant has lower inhibitory cognitive control or difficulty recruiting the neurocognitive resources needed to process conflicting information accurately20. Resolving interference in an experimentally manipulated context is thought to capture the extent to which one can deploy cognitive flexibility and/or selective attention in everyday life21,22,23. The Stroop effect has been investigated using various task adaptions in a variety of clinical research programs, including studies of psychotic24,25,26,27, attentional28,29,30,31, mood32,33,34, and substance use disorders35,36,37. Additionally, cognitive control is negatively impacted across a large number of psychiatric disorders38,39,40,41. Therefore, behavioral performance and neurocognitive processes exhibited while performing the Stroop task are well-suited to the transdiagnostic research questions addressable with the TCP dataset.
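To make the quantification of the Stroop effect concrete, the following minimal sketch computes the reaction time and accuracy costs for one participant. The trial fields (`condition`, `rt`, `correct`) are hypothetical and do not necessarily match the column names in the released event files, and restricting reaction times to correct trials is a common convention assumed here rather than a procedure stated in the text.

```python
from statistics import mean


def stroop_effect(trials):
    """Return (rt_cost, accuracy_cost) for one participant.

    trials: list of dicts with hypothetical keys
        'condition' ('congruent' or 'incongruent'),
        'rt' (reaction time in seconds), and 'correct' (bool).
    rt_cost: mean RT on incongruent minus congruent trials (correct trials only).
    accuracy_cost: accuracy on congruent minus incongruent trials.
    """
    by_condition = {"congruent": [], "incongruent": []}
    for trial in trials:
        by_condition[trial["condition"]].append(trial)

    def mean_rt(subset):
        return mean(t["rt"] for t in subset if t["correct"])

    def accuracy(subset):
        return mean(1.0 if t["correct"] else 0.0 for t in subset)

    congruent = by_condition["congruent"]
    incongruent = by_condition["incongruent"]
    rt_cost = mean_rt(incongruent) - mean_rt(congruent)
    accuracy_cost = accuracy(congruent) - accuracy(incongruent)
    return rt_cost, accuracy_cost
```

Larger positive values of either cost indicate a more pronounced Stroop effect.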

The Emotional Faces task12 is also a widely used task paradigm in neuroimaging. Participants were presented with images of human faces or geometric shapes and asked to categorize stimuli as either faces or shapes (Fig. 2b). Trials that showed pictures of human faces included people with negative emotional expressions (e.g., anger and fear) taken from the NimStim database of face stimuli42; trials showing shapes included ovals with different orientations. Three images were arranged in a triangle, and participants were instructed to indicate which of the two bottom shapes or faces matched the shape or face at the top of the screen. This task is relatively easy; therefore, behavioral performance is not typically of central interest. Instead, this task paradigm reliably elicits a response within regions of the amygdala during emotional face relative to shape trials and can provide neuroimaging studies with (1) essential benchmarking using a well-established task activation and (2) an entry point for neuroscience research questions on affective processing. The latter is an important consideration for transdiagnostic research, given that a variety of psychiatric conditions are known to involve dysregulated processing of emotions and deficits in social cognition. These topics have been examined using the Emotional Faces task in studies of autism spectrum disorder43,44, anxiety disorders45,46, post-traumatic stress disorder47, psychotic disorders48, psychopathy49, and those exhibiting social phobia50.

Behavioral measurements

Table 1 lists the entire battery of assessments across the three testing sessions. The selected measures index a broad range of functional, lifestyle, emotional, mental health, cognitive, environmental, personality, and social factors. These assessments include multiple commonly used clinical tools such as the Montgomery-Åsberg Depression Rating Scale (MADRS51), Depression Anxiety Stress Scale (DASS52), Positive and Negative Syndrome Scale (PANSS53), and Young Mania Rating Scale (YMRS54), as well as scales that capture distinctive aspects of experiences such as Temperament and Character Inventory55, Temporal Experience of Pleasure Scale56, Experience in Close Relationship Scale57, and the Positive Urgency Measure58. The dataset also includes common measures of cognition, including the Shipley Vocabulary Test59 and the TestMyBrain suite10, including matrix reasoning, as well as self-report measures such as Cognitive Emotion Regulation Questionnaire60, Cognitive Failures Questionnaire61 and Cognitive Reflection Test62. Item-level participant responses are available for download (see Data Records). The distribution, missingness, mean, median, and standard deviation for each scale and subscale are provided in Supplement Table 1.

Data Records

Raw neuroimaging and behavioral data can be openly accessed via OpenNeuro9: https://openneuro.org/datasets/ds005237. Note that 5 participants did not grant permission to share their data via openly online-hosted repositories and are excluded from this release. Additionally, the raw and processed data are co-deposited in the National Institute of Mental Health Data Archive (NDA)8: https://nda.nih.gov/study.html?id=2932. This supplemental NDA data is released as part of Open Access Permission, has been consented for broad research use, and, as of date of publication, can be accessed by users who are not affiliated with an NIH-recognized research institution.

Raw MRI data have undergone DICOM to NifTI conversion using dcm2niix63 and are provided in BIDS-compliant64 format. BIDS organizes data into a standardized file, folder and naming structure. For data uploaded to the primary dataset repository, OpenNeuro, a ‘sub-’ prefix was added to subject folders and filenames. For consistency with the co-deposited NDA dataset, data for each participant is stored in folders named according to the assigned Global Unique Identifier (GUID), an alphanumeric code created by the NDA GUID Tool. Each participant folder contains an ‘anat’ subfolder containing T1-w and T2-w images, a ‘func’ subfolder containing all runs of functional data as well as event files for task runs, and a ‘fmap’ subfolder containing the field maps. Each image and event file has a corresponding .json file that contains relevant meta-data. All anatomical images were defaced prior to upload using the face_removal_mask function from the R library fslr65 (R package version: 2.52.2, R version: 4.3.0), which is a wrapper library of FSL66 (version 6.0.5.1). The face_removal_mask function is an R implementation of the Python package pydeface67. Demographic and behavioral data are available as .csv files, with a separate file for each scale and a corresponding dictionary delineating available data fields.

To facilitate the use of the TCP dataset, in addition to raw BIDS-formatted data on OpenNeuro, we provide processed derivatives from the HCP processing pipelines (version 4.7.0, see Human Connectome Project minimal processing below for details) as a secondary resource on the NDA, including anatomical surfaces, as well as minimally processed and denoised functional timeseries in both densely sampled surface space and standard volumetric space. A full list of HCP-related derivatives can be found at: https://www.humanconnectome.org/study/hcp-young-adult/document/1200-subjects-data-release. We additionally provide analysis-ready parcellated timeseries using cortical, subcortical, and cerebellar regions on both OpenNeuro and NDA. The processing and denoising pipeline, and quality control procedures are described below in Technical Validation.

Technical Validation

In this section, we first describe the processing and denoising pipeline used for the MRI data. Next, we benchmark this process by reporting (1) the residual relationship between in-scanner movement and functional connectivity before and after denoising, (2) the effect of region-to-region distance on this residual relationship, and (3) the number of statistically significant associations between head motion and functional connectivity. Subsequently, we identify the presence of group-level canonical functional network structures and report the similarity of these structures across different fMRI runs. Additionally, we report commonly computed network diagnostics68 given by graph theory, canonical task-based fMRI activation contrasts, and behavioral outcomes from in-scanner tasks. Finally, we identify correlation patterns across behavioral scales and subscales for diagnosed and non-diagnosed individuals and report latent behavioral structures using dimensionality reduction. All processing and assessments reported below, except differences in behavioral measures reported in Fig. 7 and Fig. 8, are conducted on the entire sample and do not differentiate between those with and without a psychiatric diagnosis.

Human connectome project minimal processing

MRI data were minimally processed and denoised via the Human Connectome Project (HCP) pipelines69, version 4.7.0 (https://github.com/Washington-University/HCPpipelines). The following versions of dependencies were implemented on a 64-bit Linux operating system (Red Hat (RHEL) version 8.8): FMRIB Software Library (FSL) version 6.0.5, FSL ICA-based Xnoiseifier (FIX) version 1.06.15, FreeSurfer version 6.0.0, Connectome Workbench version 1.4.2, MATLAB version 2017b (compiled option), and R package 4.3.0. Broadly, HCP processing pipelines include: (1) FreeSurfer structural MRI processing, (2) functional MRI volume processing, (3) functional MRI surface processing, (4) denoising via ICA-FIX, (5) “multimodal surface matching” registration (MSMAll70), and (6) de-drifting, resampling, and applying the MSMAll registration. Tools implemented by HCP pipelines are mainly adapted from FSL and FreeSurfer71 and aim to improve the spatiotemporal accuracy of MRI data, particularly with acquisition advancements such as multiband acceleration69,72,73.

In brief, anatomical T1w/T2w images were used to create MNI-aligned structural volumes in each participant’s native space. These images were corrected for gradient nonlinearities and magnetic field inhomogeneities and reconstructed into segmented brain structures. Folding-based surface registration to the standard FreeSurfer atlas (i.e., fsaverage) and to the high-resolution Conte69 atlas74 was followed by downsampling to various spatial resolutions (2 mm used herein). fMRI volume processing steps further corrected for spatial distortions and implemented motion correction, bias field correction, and normalization. Motion correction was implemented via FLIRT registration of individual frames to a pseudo-single-band reference image (i.e., the first volume of the time series is standardly used in relevant HCP processing scripts when single-band reference volumes are not collected). In HCP-style, we provide motion parameters in separate files to characterize x/y/z translation, rotation, and their derivatives, as well as demeaned and detrended versions of each (24 parameters total), which may be used for nuisance regression and subject exclusion (see fMRI denoising). Echo planar distortions were corrected by FSL’s “topup”75,76 using spin echo field maps that were acquired in the opposite phase encoding directions of each scan and pseudo-single-band reference images concurrently.

fMRI surface processing steps transformed 4D volumetric timeseries into 2D surface-based timeseries that were registered to a standard set of grayordinates across all participants. This involved HCP’s “partial volume weighted ribbon-constrained volume-to-surface mapping algorithm”69, which uses non-resampled images in each participant’s native space to align surfaces along tissue contours. Surfaces were smoothed using the “geodesic Gaussian surface smoothing algorithm”69 and additional 2 mm FWHM smoothing that enhances subcortical regularization. Following denoising (see fMRI denoising for full details), surface-based functional timeseries were aligned with MSMAll70. MSMAll improves inter-participant alignment by using areal features from multiple sources in the reference pipeline developed by Glasser et al.70, including cortical folding, sulcal depth, T1w/T2w myelin maps, resting-state network maps, and visuotopic maps. The HCP minimal processing pipelines are optimized for surface-based processing of high-resolution anatomical and functional MRI images. Thus, we used the resulting grayordinate timeseries from each of the seven functional runs to perform the quality assurance and preliminary analyses reported herein. This included cortical, subcortical, and cerebellar vertices (i.e., 91,282 “whole-brain” gray ordinates) that were regionally mapped according to their corresponding atlas schemes (see Parcellating timeseries into brain regions and functional connectivity).

fMRI denoising

To remove sources of noise such as movement, scanner drift, and physiological artifacts, we implemented the data-driven MELODIC independent component analysis-FMRIB ICA-based Xnoiseifier (ICA-FIX77,78). ICA-FIX is a denoising approach performed on each functional timeseries for each participant. Given that we closely followed the HCP’s acquisition protocols and minimal processing pipelines, we applied FIX classifiers pre-trained on HCP data (using the HCP pipeline default; “HCP_hp2000”). To provide flexibility for the varied research questions addressable with the TCP dataset, we separately performed both “single-run” and “multi-run” ICA-FIX. Single-run ICA-FIX, which was used for benchmarking and quality control herein (see Figure S1 for example quality control scenes provided by the HCP pipelines), was performed on each functional run independently, and multi-run ICA-FIX was performed on the concatenated functional timeseries of (1) the four resting-state runs and (2) the three task runs. Consistent with the HCP processing pipeline, we used a high-pass temporal filter of 2000 s FWHM during single-run ICA-FIX. While spatial ICA likely provides components with better signal-to-noise separation via multi-run FIX (given longer timeseries data), single-run FIX is likely optimal for research questions (or benchmarking) requiring statistical independence across functional runs.

We performed global signal regression (GSR) to further control for noise sources in fMRI timeseries data79,80,81,82. GSR has been shown to remove global sources of noise83,84,85 and improve behavioral prediction models86. However, it has also been shown that the global signal can carry behaviorally-relevant information86,87,88. Given this ongoing debate surrounding the use of GSR81,82, we provide denoised derivative timeseries with and without GSR and evaluate the impact of GSR on fMRI data in the Technical Validation sections below. We performed GSR for each participant and each functional run by regressing the mean timeseries across all vertices from each vertex89.
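The GSR step described above can be sketched as a per-vertex linear regression on the global mean signal. The example below is a minimal illustration on plain Python lists, not the code used to produce the released derivatives; it assumes an intercept is included in the regression (so the residuals are demeaned), which the text does not specify, and the function name is hypothetical.

```python
def global_signal_regression(data):
    """Regress the global mean timeseries out of every vertex timeseries.

    data: list of vertex timeseries, each a list of floats of equal length.
    Returns the residual timeseries in the same layout.
    Assumption: an intercept is modelled, so each residual has zero mean.
    The global signal must not be constant over time.
    """
    n_vertices = len(data)
    n_time = len(data[0])

    # Global signal: mean across all vertices at each time point.
    global_signal = [sum(ts[t] for ts in data) / n_vertices for t in range(n_time)]
    g_mean = sum(global_signal) / n_time
    g_centered = [g - g_mean for g in global_signal]
    g_sum_squares = sum(g * g for g in g_centered)

    residuals = []
    for ts in data:
        ts_mean = sum(ts) / n_time
        ts_centered = [x - ts_mean for x in ts]
        # Ordinary least squares slope of this vertex on the global signal.
        beta = sum(x * g for x, g in zip(ts_centered, g_centered)) / g_sum_squares
        residuals.append([x - beta * g for x, g in zip(ts_centered, g_centered)])
    return residuals
```

A useful property to check is that the residuals average to zero across vertices at every time point, since the global signal itself has been removed.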

Parcellating timeseries into brain regions and functional connectivity

We parcellated the dense (i.e., 91,282 vertices) CIFTI timeseries into 434 brain regions that covered the cortex, subcortex, and cerebellum, by averaging the functional timeseries of the vertices belonging to a given region together. We used a previously validated surface-based functional atlas to parcellate the cortex into 400 regions90. This “homotopic” cortical atlas is a recent update to the widely-used Schaefer atlas91 that improves upon hemispheric lateralization in brain systems known to be asymmetric, such as language processing regions. This atlas is openly available via website links in Schaefer et al.91. Subcortical vertices were parcellated into 16 bilateral brain regions (32 total) that are part of the medial temporal lobe, the thalamus, and the striatum (including the pallidum)92. These 32 regions were yielded by the “scale II” resolution provided by Tian and colleagues, which we implemented based on the finding that anatomical boundaries are well-captured at this resolution while also providing functional subdivisions. This atlas is openly available via website links in Tian et al.92. Lastly, we parcellated the cerebellum into one region per hemisphere (2 total) using the atlas provided by Buckner et al.93. Following the best practices provided by Buckner and colleagues, we regressed neighboring (6 mm) cortical signals from cerebellar vertices before parcellation to account for potential spatial autocorrelation between these brain segments.
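The parcellation step amounts to averaging the timeseries of all vertices that share a region label. The sketch below illustrates this on plain Python lists; the function name and the label format are hypothetical, and reading CIFTI files or atlas label files is outside its scope.

```python
def parcellate(vertex_timeseries, labels):
    """Average vertex timeseries within each region.

    vertex_timeseries: list of timeseries (one list of floats per vertex).
    labels: list of region labels, one per vertex, in the same order.
    Returns a dict mapping each region label to its mean timeseries.
    """
    sums = {}
    counts = {}
    for ts, label in zip(vertex_timeseries, labels):
        if label not in sums:
            sums[label] = [0.0] * len(ts)
            counts[label] = 0
        for t, value in enumerate(ts):
            sums[label][t] += value
        counts[label] += 1
    return {label: [s / counts[label] for s in sums[label]] for label in sums}


# Region counts reported in the text: cortex + subcortex + cerebellum.
N_REGIONS = 400 + 32 + 2
```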

The pairwise product-moment correlation between regional timeseries was used to derive functional connectivity matrices for each participant and each functional scan at three different stages of the pipeline: after minimal processing (see Human connectome project minimal processing), after ICA-FIX denoising, and after GSR was applied to denoised data (see fMRI denoising).
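As an illustration of this step, the sketch below builds a functional connectivity matrix from regional timeseries using the Pearson product-moment correlation, and counts the unique region pairs. With 434 regions this gives 434 × 433 / 2 = 93,961 connections, the number reported in the figure captions. Function names are hypothetical, and the implementation favors readability over speed.

```python
from math import sqrt


def pearson(x, y):
    """Pearson product-moment correlation between two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


def functional_connectivity(region_timeseries):
    """Symmetric region-by-region correlation matrix (list of lists)."""
    n = len(region_timeseries)
    fc = [[1.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1, n):
            r = pearson(region_timeseries[i], region_timeseries[j])
            fc[i][j] = fc[j][i] = r
    return fc


def n_unique_connections(n_regions):
    """Number of unique region pairs (upper triangle, excluding the diagonal)."""
    return n_regions * (n_regions - 1) // 2
```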

Functional connectivity quality control and benchmarking

Estimates of brain function derived from fMRI, such as functional connectivity, are sensitive to artifacts from multiple sources, including in-scanner head movement, respiratory motion, and scanner effects94. To assess the success of denoising procedures, residual relationships between functional connectivity and in-scanner head motion can be examined and compared at different stages of the processing pipeline84,95,96. Head motion during each fMRI scan was estimated using framewise displacement (FD), a summary measure of head movement from one volume to the next84,97,98. For each scan, FD was calculated according to the method described by Jenkinson et al.99 and the resulting FD timeseries was band-stop filtered and down-sampled to account for the high sampling rate of the multiband fMRI acquisition100. Distributions of mean FD for each participant and each fMRI run are provided in Figure S2. With regard to excluding subjects based on head motion, we suggest the criteria validated by Parkes et al.84, who recommend either a lenient exclusion threshold98 of mean FD >0.55 mm or a stringent threshold97 consisting of three parts: (i) mean FD >0.2 mm, (ii) >20% of FDs above 0.2 mm, or (iii) any FD >5 mm. To facilitate the exclusion of low-quality scans, we have included subject-level FD timeseries in our data release, as well as code for estimating FD and associated data exclusion thresholds as in Fig. S2 (see Code Availability).
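The two exclusion rules above translate into simple checks on a run's FD timeseries. The sketch below is an illustration written from the thresholds as stated in this section, not the released code referenced under Code Availability; function names are hypothetical.

```python
def exclude_lenient(fd, mean_threshold=0.55):
    """Lenient rule: exclude the run if mean FD exceeds 0.55 mm."""
    return sum(fd) / len(fd) > mean_threshold


def exclude_stringent(fd, mean_threshold=0.2, spike_threshold=0.2,
                      max_spike_fraction=0.2, max_fd=5.0):
    """Stringent rule: exclude the run if any one of three conditions holds.

    (i)   mean FD > 0.2 mm
    (ii)  more than 20% of FD values above 0.2 mm
    (iii) any FD value > 5 mm
    """
    mean_fd = sum(fd) / len(fd)
    spike_fraction = sum(1 for v in fd if v > spike_threshold) / len(fd)
    return (
        mean_fd > mean_threshold
        or spike_fraction > max_spike_fraction
        or max(fd) > max_fd
    )
```

For example, a run with low average motion but many small spikes passes the lenient rule and fails the stringent one.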

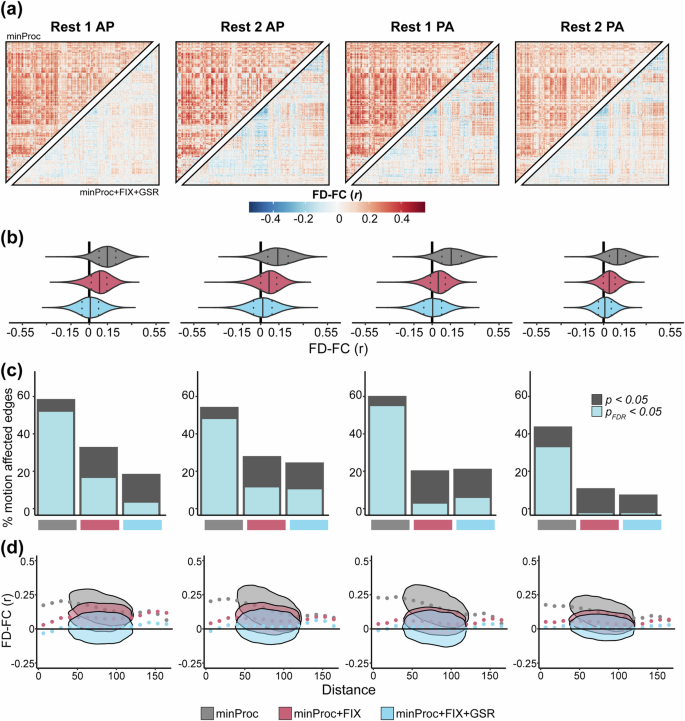

FD-FC correlations

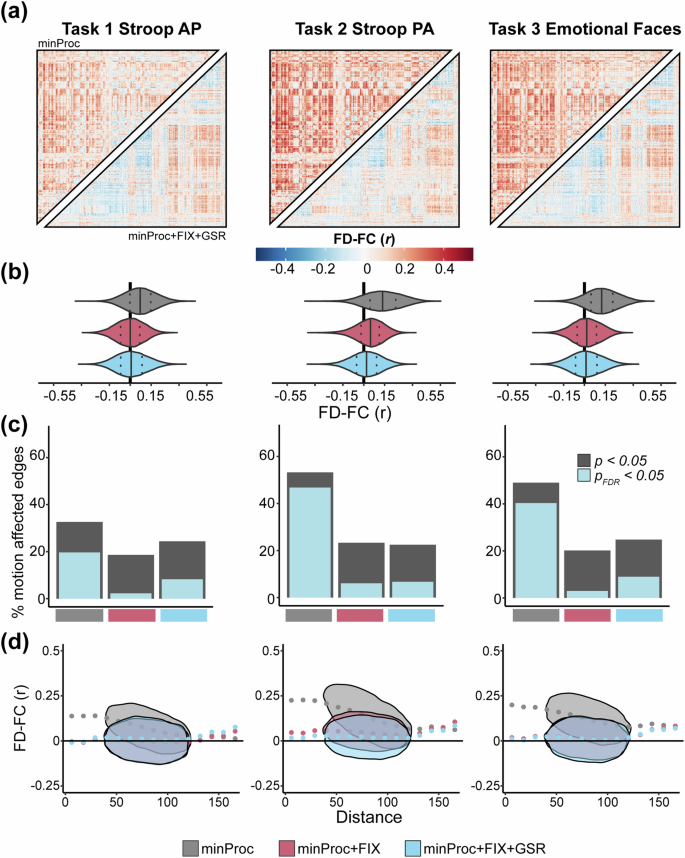

For each rs-fMRI run, we computed the Spearman correlation between FD and functional connectivity at each pair of regions across all participants (Fig. 3a,b). Similar to previous work84,96, before denoising (i.e., after minimal processing), we found widespread positive associations between functional connectivity and FD with a moderate effect size across most connections in all four runs (Fig. 3a, upper triangles), indicating a strong and widespread effect of in-scanner head motion on functional connectivity estimates. Across runs, the mean FD-FC correlation over the brain ranged from ρ = 0.12–0.16 (Fig. 3b). ICA-based denoising consistently reduced these associations (ρ = 0.04–0.08; Fig. 3b), and adding GSR brought the mean correlation down to ρ = 0.00–0.02 (Fig. 3a, lower triangles; Fig. 3b). However, in some cases (Rest 1 AP and Rest 1 PA), both ICA-based denoising and GSR induced negative FD-FC associations. Overall, denoising procedures substantially reduced FD-FC associations across the brain. A broadly similar pattern of results was evident across the three task-based fMRI runs (Fig. 4a,b), with mean correlations across runs ranging from ρ = 0.07–0.14 before denoising, from ρ = 0.00–0.04 after denoising, and from ρ = 0.00–0.02 after GSR, albeit with GSR having a less pronounced effect on reducing FD-FC correlations in task runs compared to rs-fMRI runs.
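The FD-FC benchmark can be sketched as follows: for each connection, the functional connectivity values of all participants are rank-correlated with the participants' mean FD. This is a minimal standard-library illustration with hypothetical function names and an assumed input layout (one list of edge values per participant), not the authors' implementation.

```python
from math import sqrt


def average_ranks(values):
    """1-based ranks, with tied values receiving their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = shared_rank
        i = j + 1
    return ranks


def spearman(x, y):
    """Spearman correlation: Pearson correlation of the average ranks."""
    rx, ry = average_ranks(x), average_ranks(y)
    n = len(rx)
    mx, my = sum(rx) / n, sum(ry) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sxx = sum((a - mx) ** 2 for a in rx)
    syy = sum((b - my) ** 2 for b in ry)
    return sxy / sqrt(sxx * syy)


def fd_fc_correlations(fc_by_participant, mean_fd):
    """One Spearman correlation per connection, computed across participants.

    fc_by_participant: list (participants) of lists (edge values, same order).
    mean_fd: list of mean FD values, one per participant.
    """
    n_edges = len(fc_by_participant[0])
    return [
        spearman([fc[e] for fc in fc_by_participant], mean_fd)
        for e in range(n_edges)
    ]
```

The mean of the returned values corresponds to the run-level summary reported in the text, and their distribution to the kind of plot shown in panel b of Figs. 3 and 4.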

Functional connectivity and head motion association across processing stages for resting-state fMRI runs. (a) Inter-participant correlation between functional connectivity (FC) and mean framewise displacement (FD) at each of 93,961 connections for each resting-state fMRI run before denoising, i.e., after minimal processing (minProc; upper triangles) and after ICA-based denoising (FIX) and Global Signal Regression (GSR; lower triangles). (b) Distributions of FD-FC correlations for each resting-state fMRI run at three different processing stages: minProc, FIX, and GSR. (c) Percentage of connections significantly (p < 0.05, gray; pFDR < 0.05, light blue) correlated with FD for each resting-state fMRI run at each of the three processing stages. (d) Associations and density between FD-FC values and pairwise Euclidean distance between 432 regions for each resting-state fMRI run and processing stage.

Functional connectivity and head motion association across processing stages for task-based fMRI runs. (a) Inter-participant correlation between functional connectivity (FC) and mean framewise displacement (FD) at each of 93,961 connections for each task-based fMRI run before denoising, i.e., after minimal processing (minProc; upper triangles) and after ICA-based denoising (FIX) and Global Signal Regression (GSR; lower triangles). (b) Distributions of FD-FC correlations for each task-based fMRI run at three different processing stages: minProc, FIX, and GSR. (c) Percentage of connections significantly (p < 0.05, gray; pFDR < 0.05, light blue) correlated with FD for each task-based fMRI run at each of the three processing stages. (d) Associations and density between FD-FC values and pairwise Euclidean distance between 432 regions for each task-based fMRI run and processing stage.

When examining the proportion of connections significantly correlated with FD, we find that before denoising, across rs-fMRI runs, 35% to 56% of connections met the threshold for significance (pFDR < 0.05; Fig. 3d). After ICA-based denoising, there was a substantial drop in motion-affected connections, to between 1% and 19% of connections, depending on the fMRI run (Fig. 3d). In all runs except Rest 1 PA, GSR further reduced the number of motion-affected connections. A similar pattern was seen for the task-fMRI runs, except that GSR consistently increased the number of motion-affected connections across all three runs compared to ICA-based denoising alone (Fig. 4d).
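The percentage of connections surviving FDR correction can be illustrated with a short sketch. It assumes the Benjamini-Hochberg step-up procedure, which this section does not name explicitly; the function name and the p-values are hypothetical.

```python
def benjamini_hochberg(p_values, alpha=0.05):
    """Return a list of booleans marking tests that survive BH-FDR at level alpha."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    # Find the largest rank k with p_(k) <= (k / m) * alpha
    max_rank = 0
    for rank, idx in enumerate(order, start=1):
        if p_values[idx] <= rank / m * alpha:
            max_rank = rank
    significant = [False] * m
    # All tests ranked at or below max_rank are declared significant
    for idx in order[:max_rank]:
        significant[idx] = True
    return significant


# Hypothetical edge-wise p-values for FD-FC correlations
p_values = [0.001, 0.008, 0.039, 0.041, 0.042, 0.060, 0.074, 0.205, 0.212, 0.216]
significant = benjamini_hochberg(p_values)
percent_significant = 100 * sum(significant) / len(p_values)
print(percent_significant)
```

In this toy example five p-values fall below the uncorrected 0.05 threshold, but only two survive correction, which mirrors the gap between the uncorrected and FDR-corrected percentages reported in the figures.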

FD-FC distance dependence

For each rs-fMRI run, we examined how the FD-FC relationship varies as a function of the pairwise Euclidean distance between region centroids, as head motion generally has a more pronounced effect on the FD-FC correlation for short-range connections94,95,101. Specifically, head motion can artifactually increase correlations between regions that are close together and decrease correlations between regions that are further apart. This pattern of distance-dependent motion contamination was present across all rs-fMRI runs before denoising and was substantially reduced after ICA-based denoising (Fig. 3c). The addition of GSR did not have a notable impact. Similar patterns were found for task-fMRI runs (Fig. 4c).
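A minimal sketch of the distance-dependence check is given below. The centroid coordinates and FD-FC values are hypothetical, and a product-moment correlation is used purely for illustration, since the statistic used to summarize the association is not specified here.

```python
import numpy as np


def edge_distances(centroids):
    """Pairwise Euclidean distances between region centroids, one value per edge.

    centroids: (n_regions, 3) array of coordinates in mm.
    Returns the upper-triangle distances in row-major edge order.
    """
    diff = centroids[:, None, :] - centroids[None, :, :]
    distance_matrix = np.sqrt((diff ** 2).sum(axis=-1))
    upper = np.triu_indices(len(centroids), k=1)
    return distance_matrix[upper]


# Three hypothetical centroids; edges are ordered (0,1), (0,2), (1,2)
centroids = np.array([[0.0, 0.0, 0.0], [3.0, 4.0, 0.0], [0.0, 0.0, 12.0]])
distances = edge_distances(centroids)

# Hypothetical FD-FC correlations for the same three edges
fd_fc = np.array([0.15, 0.05, 0.02])
distance_dependence = np.corrcoef(distances, fd_fc)[0, 1]
print(distances, round(distance_dependence, 2))
```

A negative value indicates that shorter connections carry stronger positive FD-FC associations, which is the signature of distance-dependent motion contamination.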

Edge-level Intraclass Correlation Coefficient (ICC)

To assess the within-session reliability of edge-level functional connectivity for modalities where we collected more than one run (resting-state and Stroop task), we used the PyReliMRI toolbox102 with default settings to compute edge-level ICC at each major processing stage. Consistent with prior work84,103, we find that edge-level ICC for resting state scans across the four runs is poor, and decreases with GSR (Fig. S5), suggesting that intra-session reliability of edge-level functional connectivity may be partly driven by head motion and other physiological confounds. Also consistent with prior work, we find that the two runs of the Stroop task have numerically higher ICC than the resting-state scans, approaching moderate reliability after denoising (Fig. S5). Across both task and rest scans, limbic and subcortical regions showed exceptionally poor ICC across all processing stages. This is consistent with the low signal-to-noise ratio and distortion susceptibility of these areas104,105. These outcomes remained largely the same when examining ICC within each site (Fig. S5C–F).
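To make the edge-level reliability measure concrete, the sketch below computes an intraclass correlation for every edge from a two-way ANOVA decomposition. It does not reproduce the PyReliMRI call; the ICC(3,1) consistency form is assumed purely for illustration, and the function name and data are hypothetical.

```python
import numpy as np


def icc_3_1(data):
    """ICC(3,1) per edge for data shaped (n_participants, n_runs, n_edges)."""
    n, k = data.shape[0], data.shape[1]
    grand_mean = data.mean(axis=(0, 1))
    # Between-participant and between-run sums of squares
    ss_rows = k * ((data.mean(axis=1) - grand_mean) ** 2).sum(axis=0)
    ss_cols = n * ((data.mean(axis=0) - grand_mean) ** 2).sum(axis=0)
    ss_total = ((data - grand_mean) ** 2).sum(axis=(0, 1))
    ms_rows = ss_rows / (n - 1)
    ms_error = (ss_total - ss_rows - ss_cols) / ((n - 1) * (k - 1))
    return (ms_rows - ms_error) / (ms_rows + (k - 1) * ms_error)


# Two hypothetical edges measured in 3 participants across 2 runs
consistent_edge = np.array([[1.0, 2.0], [2.0, 3.0], [3.0, 4.0]])    # same ordering in both runs
inconsistent_edge = np.array([[1.0, 4.0], [2.0, 2.0], [3.0, 1.0]])  # ordering reverses
data = np.stack([consistent_edge, inconsistent_edge], axis=-1)
icc = icc_3_1(data)
print(icc.round(2))
```

The first edge differs between runs only by a constant offset, so its consistency ICC is 1, whereas the second edge reverses participant ordering across runs and yields a negative value.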

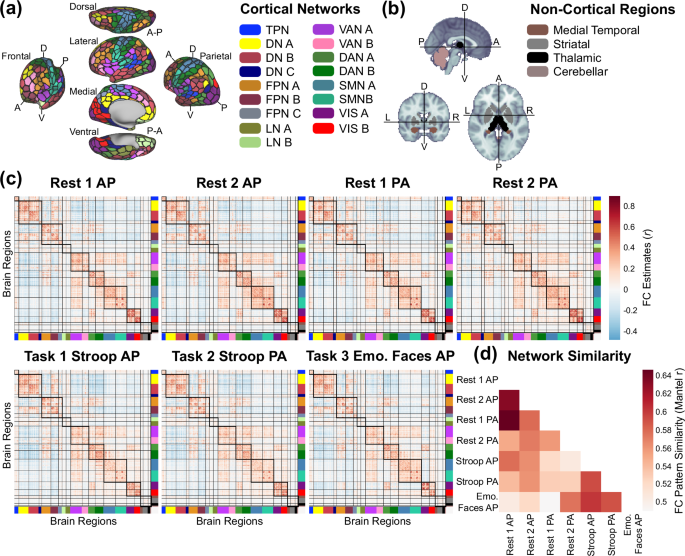

Functional network structure

The regions in the cortical and subcortical atlases used are each provided with corresponding network assignments. We used the 17-network solution for cortical regions105 and a 3-network anatomical solution for subcortical regions comprising the medial temporal lobe, striatum, and thalamus92. Lastly, we considered the two cerebellar regions as part of their own network, altogether resulting in 21 functional networks (Fig. 5a,b).

Average functional network structure and similarity across each fMRI run. (a,b) Whole-brain regional parcellation (black borders, 432 regions90,92,93) and network partition (filled in colors, 21 networks) across the cortex (a) and non-cortex (b) (see Parcellating timeseries into brain regions and functional connectivity for full details). TPN: temporal parietal network, DN: default network, FPN: frontoparietal network (sometimes referred to as cognitive control network), LN: limbic network, VAN: salience/ventral attention network, DAN: dorsal attention network, SMN: somatomotor network, VIS: visual network. The medial temporal network contains hippocampal and amygdalar regions; the striatal network contains caudate, nucleus accumbens, putamen, and globus pallidus regions. The thalamic and cerebellar networks contain only thalamus and cerebellum, respectively. (c) Functional connectivity (FC) estimates for each of the 6 runs (across-participant averages). Thick and thin black borders delineate within- and between-network borders, respectively. (d) Similarity of FC patterns for each pair of neurocognitive states using the nonparametric Mantel test.

Functional connectivity estimated from rest and task fMRI typically exhibits a reliable and robust set of brain networks that encompass spatially distributed regions with temporally correlated activity. The topology and connectivity strengths of these networks are heritable106 and linked with behavioral outcomes in both health107 and psychiatric illness108. To identify whether the expected functional network structure was present across each of the fMRI runs, functional connectivity matrices were Fisher z-transformed, averaged across participants, and then the group-average matrix was transformed back into product-moment correlations (Fig. 5c, visualizing mean functional connectivity for each run after denoising and GSR). The columns and rows of each group average connectivity matrix were reordered according to the prespecified 21-network scheme (Fig. 5a,b), revealing the expected pattern of pronounced within-network connectivity (Fig. 5c, diagonal blocks) and comparatively lower between-network connectivity (Fig. 5c, off-diagonal blocks). We present group average functional connectivity matrices with/without ICA-based denoising and with/without GSR in Figure S3.
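The group-averaging step described above can be sketched as follows. The function name and the two toy matrices are hypothetical, and clipping values just inside ±1 is an assumption introduced here to keep the diagonal finite under the Fisher transform.

```python
import numpy as np


def group_average_fc(fc_stack, eps=1e-7):
    """Average correlation matrices in Fisher z space.

    fc_stack: (n_participants, n_regions, n_regions) product-moment correlations.
    """
    # arctanh(1) is infinite, so clip the diagonal slightly inside (-1, 1)
    z = np.arctanh(np.clip(fc_stack, -1 + eps, 1 - eps))
    mean_r = np.tanh(z.mean(axis=0))  # back-transform to correlations
    np.fill_diagonal(mean_r, 1.0)
    return mean_r


# Two hypothetical participants with a single off-diagonal connection
fc_stack = np.array([
    [[1.0, 0.2], [0.2, 1.0]],
    [[1.0, 0.8], [0.8, 1.0]],
])
group_fc = group_average_fc(fc_stack)
print(group_fc.round(3))
```

Averaging in z space gives a group value of about 0.57 here rather than the arithmetic mean of 0.5, because the transform compensates for the compressed scale of correlations near ±1.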

Across the network neuroscience literature, there is strong evidence that functional network organization is highly similar across neurocognitive states, particularly across rest- and task-state connectivity estimates109,110,111,112. Rest-to-task changes in network connectivity patterns are typically limited in magnitude, small in extent (i.e., only a subset of regional pairs change), and tend to be decreases in connectivity113. However, prior work suggests that these relatively subtle task-evoked changes likely carry important information for behavioral and/or cognitive functioning112,114. In the present work, we report the similarity of connectivity patterns for each pair of functional runs (Fig. 5d), estimated with the nonparametric Mantel test115. As expected, stronger similarity patterns were generally observed across similar states, i.e., resting states (runs 1 AP/PA and 2 AP/PA), Stroop (AP/PA), and Emotional Faces task states.
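For readers unfamiliar with the Mantel test, a minimal permutation-based sketch follows. It is an illustration under stated assumptions, not the implementation used for Fig. 5d: the product-moment correlation of upper-triangle values, the number of permutations, the function name, and the simulated matrices are all choices made here.

```python
import numpy as np


def mantel_test(a, b, n_permutations=999, seed=0):
    """Correlate the upper triangles of two symmetric matrices and permute regions."""
    rng = np.random.default_rng(seed)
    upper = np.triu_indices_from(a, k=1)
    observed = np.corrcoef(a[upper], b[upper])[0, 1]
    count = 0
    for _ in range(n_permutations):
        # Shuffle rows and columns of one matrix together to keep it symmetric
        perm = rng.permutation(a.shape[0])
        permuted = a[np.ix_(perm, perm)]
        if abs(np.corrcoef(permuted[upper], b[upper])[0, 1]) >= abs(observed):
            count += 1
    p_value = (count + 1) / (n_permutations + 1)
    return observed, p_value


# Simulated "rest" matrix and a noisy "task" copy of it (20 regions)
rng = np.random.default_rng(1)
m = rng.normal(size=(20, 20))
rest_fc = (m + m.T) / 2
noise = rng.normal(scale=0.1, size=(20, 20))
task_fc = rest_fc + (noise + noise.T) / 2
r, p = mantel_test(rest_fc, task_fc)
print(round(r, 3), p)
```

Permuting rows and columns jointly, rather than shuffling edges independently, respects the dependence among edges that share a region, which is the reason the Mantel test is preferred over a naive correlation test for matrix similarity.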

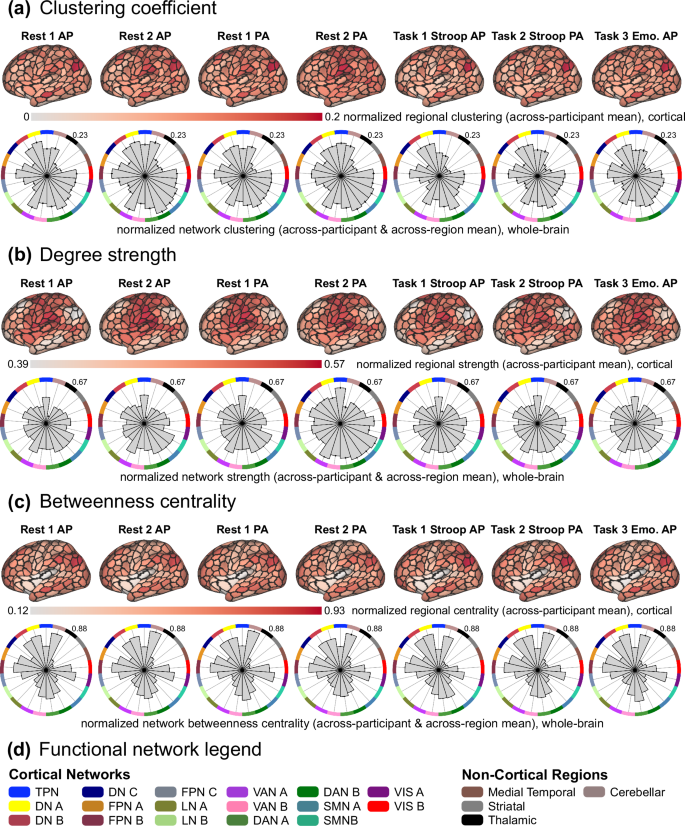

Benchmarking functional network properties with graph-theoretic metrics

A common approach in network neuroscience is to leverage graph theoretic tools to characterize the properties underlying brain connectivity patterns via neurobiologically relevant and computationally tractable metrics68,116,117,118,119,120,121,122, sometimes referred to as network diagnostics123. Given that the neuroimaging acquisition and processing protocols of the TCP dataset were optimized for functional network analysis, we aimed to demonstrate that well-established network properties are discoverable in TCP connectomes. To this end, we implemented the following network metrics: (1) clustering coefficient, (2) degree strength, and (3) betweenness centrality (Fig. 6). We used the Brain Connectivity Toolbox68 (http://www.brain-connectivity-toolbox.net) adapted to Python (i.e., bctpy), which is openly available here: https://pypi.org/project/bctpy/. Each metric was first computed from pairwise regional functional connectivity estimates (i.e., “region level”) and subsequently averaged based on network assignment (i.e., “network level”). Given that we used product-moment correlation to estimate functional connectivity (see Parcellating timeseries into brain regions and functional connectivity), we used the “weighted and undirected” variant68,124 of these metrics. Before averaging network metric scores across participants, we used min-max normalization to scale all scores between 0 and 1. This normalization maintains the relative distribution of scores across participants while scaling possible values to a fixed, comparable range. However, we encourage future investigations to consider normalization techniques that account for potential extremes if appropriate for the research question and network metric.
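The region-level to network-level workflow can be sketched with degree strength as the example metric. This NumPy-only illustration does not call bctpy; the function names, the four-region matrix, and the network labels are hypothetical, and normalization is applied within a single toy participant rather than across the whole dataset.

```python
import numpy as np


def node_strength(weights):
    """Sum of connection weights for each region (diagonal ignored)."""
    w = np.array(weights, dtype=float)
    np.fill_diagonal(w, 0.0)
    return w.sum(axis=1)


def min_max(scores):
    """Scale scores to the range [0, 1]."""
    return (scores - scores.min()) / (scores.max() - scores.min())


def network_average(region_scores, network_labels):
    """Average region-level scores within each network."""
    labels = np.asarray(network_labels)
    return {str(net): float(region_scores[labels == net].mean()) for net in np.unique(labels)}


# Hypothetical 4-region connectivity matrix and network assignment
fc = [[0.0, 0.5, 0.2, 0.1],
      [0.5, 0.0, 0.4, 0.3],
      [0.2, 0.4, 0.0, 0.6],
      [0.1, 0.3, 0.6, 0.0]]
labels = ["A", "A", "B", "B"]

region_level = min_max(node_strength(fc))
network_level = network_average(region_level, labels)
print(region_level, network_level)
```

Because min-max scaling is driven entirely by the smallest and largest values, a single extreme region or participant compresses all other scores, which is why alternative normalizations may be preferable for heavy-tailed metrics.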

(a) The across-participant average (i.e., group-level) clustering coefficient scores at the region level (top: projected onto surface-based cortical brain schematics with black outlines delineating cortical regions given by Yan et al.90; showing the lateral view of the right hemisphere) and at the network-level (bottom: polar plots organized by network assignment, which follows label colors in panel d, and the maximum value is listed on the top right of each plot). Before averaging, scores were normalized across the entire dataset using min-max feature scaling (between the values of 0 and 1). (b) The same as panel (a), but for the metric of degree strength. (c) The same as panels a and b, but for the betweenness centrality metric. (d) Legend of colors used to indicate functional network assignment in polar plots, corresponding to Fig. 5.

The clustering coefficient is a metric for quantifying the extent that local connectivity patterns are segregated and is based on the average “intensity” or “abundance” of triangles that are present around a given region124,125,126. Triangles refer to connectivity estimates adjacent to the given region, and their intensity is given by the relative extent (i.e., fraction) that those regions are also connected. Clustering can be thought of as the relative magnitude that a neighborhood of connections is “established” or “complete”. Therefore, a relatively large region-level score indicates clustered connectivity surrounding that region, and a large network-level score indicates that regions in that functional system tend to cluster together. A common inference for such clustering is that it supports local efficiency of information processing and community structure127. Across participants, we observed non-random clustering patterns across brain regions and functional networks (Fig. 6a), which is broadly consistent with prior work demonstrating that functional brain networks exhibit clustering and expected properties such as small worldness127. In resting-state connectivity matrices, high clustering was observed in all dorsal attention, somatomotor, temporoparietal, cerebellar, and visual network regions, as well as ventral attention A, frontoparietal B, and default A and B network regions. This resting-state pattern was slightly modified during Stroop and Emotional Faces task states. Stroop connectivity patterns exhibited reduced clustering within somatomotor, visual, cerebellar, and temporoparietal network regions. Emotional Faces connectivity patterns exhibited an overall similar pattern to resting-state, just reduced in magnitude.
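The triangle-intensity idea can be made concrete with a short sketch of a weighted, undirected clustering coefficient based on the geometric mean of triangle weights. It is an illustration rather than the bctpy routine: it assumes non-negative weights and rescales them by the maximum weight, and how negative correlations were treated is not specified in this section. The function name and toy graphs are hypothetical.

```python
import numpy as np


def clustering_coef_weighted(weights):
    """Weighted clustering coefficient per region for a non-negative symmetric matrix."""
    w = np.array(weights, dtype=float)
    np.fill_diagonal(w, 0.0)
    w = w / w.max()                  # rescale weights to [0, 1]
    degree = (w > 0).sum(axis=1)     # number of neighbours per region
    cube_root = np.cbrt(w)
    # Diagonal of the cubed matrix sums triangle intensities around each region
    triangles = np.diag(cube_root @ cube_root @ cube_root)
    possible = degree * (degree - 1)
    return np.divide(triangles, possible, out=np.zeros_like(triangles), where=possible > 0)


# Fully connected triangle versus a three-region path with no triangle
triangle_graph = [[0.0, 0.5, 0.5], [0.5, 0.0, 0.5], [0.5, 0.5, 0.0]]
path_graph = [[0.0, 1.0, 0.0], [1.0, 0.0, 1.0], [0.0, 1.0, 0.0]]
print(clustering_coef_weighted(triangle_graph), clustering_coef_weighted(path_graph))
```

The fully connected triangle reaches the maximum score of 1 for every region, whereas the path graph scores 0 because no pair of neighbours is itself connected.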

The degree strength of a given brain region is a straightforward and commonly applied network metric that quantifies the relative magnitude of connectivity estimates for a given region68. This is similar to the degree – the number of connections for a given region – but more appropriate for fully connected networks. Regions with relatively large degree strength are thought to be more important in a given network, and the network-level degree strength is often interpreted as the wiring cost or density of that brain system68,128. This is an important consideration for future research questions that are sensitive to heterogeneous metabolic demands across different brain systems, different participant groups, or both, given the association between the efficiency of energy consumption and degree of connectivity patterns129. Here (Fig. 6b), resting-state functional connectivity patterns exhibited relatively higher degree strength in temporoparietal and somatomotor network regions, as well as ventral attention A, dorsal attention B, and default C network regions. This pattern was consistent but with reduced overall magnitude in all task states, suggesting that the network-level degree strength is a relatively stable metric across neurocognitive states. One exception was the relative reduction of degree strength exhibited by default A and B network regions in Stroop task states, which is broadly consistent with the traditional view of the default network being less prominent during task engagement130 (although see Spreng131). However, degree strength is lower in default A and B networks than expected across all states, which may be attributable to dampened structural hub structure exhibited across brain regions of psychiatric patients versus healthy controls, which were examined concomitantly for demonstration purposes. Given the transdiagnostic sampling of the TCP dataset, this is a complex and open empirical question that we encourage researchers to investigate.

Betweenness centrality is a metric that estimates the extent to which a given region interacts with other regions132,133. Such central areas are sometimes called “hubs” and are integral for integrated information flow across brain systems68,134,135. Multiple network metrics quantify different hub properties; however, betweenness centrality quantifies the fraction of shortest connectivity paths that contain the given region68. Therefore, a high betweenness centrality score indicates that the region participates in a relatively large number of short paths in the brain network and can be conceptualized as bridges in the system. Across all resting-state connectomes, regions in the frontoparietal, dorsal attention, visual, and cerebellar networks exhibited high betweenness centrality, as well as regions in the default A/B, and ventral attention B networks (Fig. 6c). This pattern was similar across Stroop task-state connectivity patterns, but with a relatively more pronounced centrality in frontoparietal B network regions, and relatively reduced centrality in visual and cerebellar network regions, which is broadly consistent with the theory that frontoparietal network interactions support cognitive control processes136,137. During the Emotional Faces task, connectivity patterns exhibited relatively more centrality in visual A and dorsal attention A network regions, which is expected given that this task requires participants to make judgements about the context of visual stimuli.
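As a hedged illustration of the shortest-path definition above, the sketch below accumulates weighted betweenness with a Dijkstra-based variant of Brandes' algorithm. It is not the bctpy implementation; converting connection weights to lengths by taking their inverse is an assumption made here, and the function names and toy network are hypothetical.

```python
import heapq


def weights_to_lengths(weights):
    """Convert connection weights to lengths (stronger connection = shorter path)."""
    return [[1.0 / w if w > 0 else 0.0 for w in row] for row in weights]


def betweenness_weighted(lengths):
    """Betweenness centrality for an undirected graph; 0 marks a missing connection."""
    n = len(lengths)
    centrality = [0.0] * n
    for source in range(n):
        dist = [float("inf")] * n
        n_paths = [0.0] * n
        preds = [[] for _ in range(n)]
        visited = [False] * n
        order = []
        dist[source], n_paths[source] = 0.0, 1.0
        heap = [(0.0, source)]
        while heap:
            d, v = heapq.heappop(heap)
            if visited[v]:
                continue
            visited[v] = True
            order.append(v)
            for w in range(n):
                if lengths[v][w] <= 0 or visited[w]:
                    continue
                alt = d + lengths[v][w]
                if alt < dist[w]:          # strictly shorter path found
                    dist[w], n_paths[w], preds[w] = alt, n_paths[v], [v]
                    heapq.heappush(heap, (alt, w))
                elif alt == dist[w]:       # equally short alternative path
                    n_paths[w] += n_paths[v]
                    preds[w].append(v)
        # Back-propagate path dependencies from the farthest region inward
        dependency = [0.0] * n
        for w in reversed(order):
            for v in preds[w]:
                dependency[v] += n_paths[v] / n_paths[w] * (1.0 + dependency[w])
            if w != source:
                centrality[w] += dependency[w]
    return [c / 2.0 for c in centrality]   # each undirected pair was counted twice


# Region 1 links regions 0 and 2 strongly; the direct 0-2 connection is weak
weights = [[0.0, 1.0, 0.2], [1.0, 0.0, 1.0], [0.2, 1.0, 0.0]]
hub_scores = betweenness_weighted(weights_to_lengths(weights))
print(hub_scores)
```

In the toy network the two-step route through region 1 (length 2) is shorter than the weak direct connection (length 5), so region 1 lies on the only shortest path between the other two regions and acts as the bridge.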

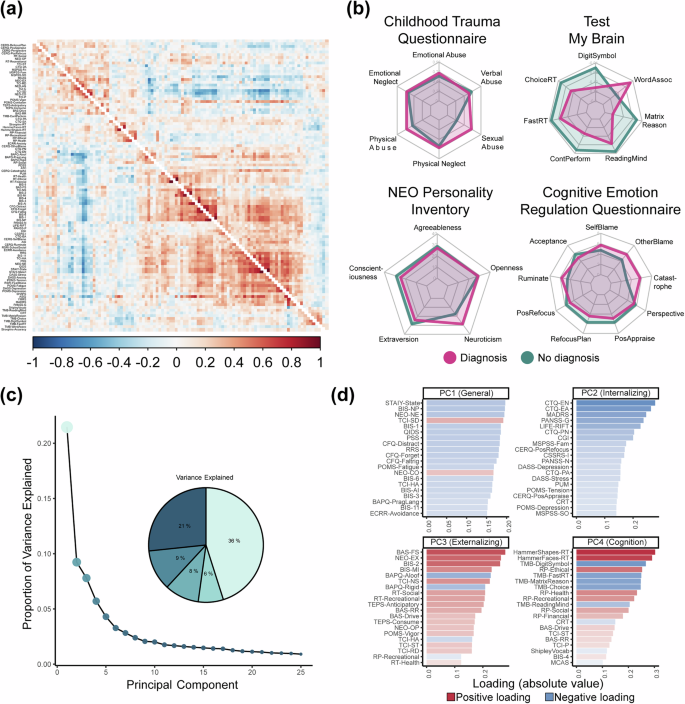

Inter-scale correlations and latent structure across measures of behavior

To assess associations between 110 scales and subscale scores across participants (see Table S1 for distributions and descriptive metrics), after z-scoring each scale, we computed product-moment correlations between each pair of measures using pairwise complete observations. This was done separately for individuals with (Fig. 7a, lower triangle) and without a diagnosis (Fig. 7a, upper triangle), with both groups showing a highly similar inter-scale correlation structure (r = 0.74).
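Correlating measures on pairwise complete observations means that each pair of scales uses every participant who has both scores, even if that participant is missing other scales. A minimal sketch follows, with a hypothetical function name and toy data; z-scoring is omitted because it does not change a product-moment correlation.

```python
import numpy as np


def pairwise_complete_corr(scores):
    """Correlation matrix using, for each pair of columns, rows where both are observed.

    scores: (n_participants, n_measures) array with NaN marking missing values.
    """
    n_measures = scores.shape[1]
    corr = np.full((n_measures, n_measures), np.nan)
    for i in range(n_measures):
        for j in range(i, n_measures):
            both_observed = ~np.isnan(scores[:, i]) & ~np.isnan(scores[:, j])
            if both_observed.sum() > 2:   # require at least three shared observations
                r = np.corrcoef(scores[both_observed, i], scores[both_observed, j])[0, 1]
                corr[i, j] = corr[j, i] = r
    return corr


# Five hypothetical participants, three measures, two missing values
scores = np.array([
    [1.0, 2.0, np.nan],
    [2.0, 4.0, 1.0],
    [3.0, 6.0, 2.0],
    [4.0, 8.0, np.nan],
    [5.0, 10.0, 5.0],
])
inter_scale = pairwise_complete_corr(scores)
print(inter_scale.round(3))
```

One consequence of this design is that different cells of the matrix can be based on different subsets of participants, so the resulting matrix is not guaranteed to be positive semi-definite.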

Behavioral data associations and latent structure. (a) Inter-participant correlation matrix between 110 scales and subscales for individuals with (top-triangle) and without (bottom-triangle) a diagnosis, with the rows and columns ordered using hierarchical clustering. (b) Radar plots showing scale score differences between individuals with (pink) and without (green) a diagnosis across select measures. (c) Scree plot and pie chart of the variance explained by the first 25 components from a PCA conducted on imputed and transformed behavioral data across all participants. (d) Top 30 absolute positive (red) and negative (blue) loadings on the first four principal components.

When examining select individual scales for consistency with previous findings (Fig. 7b), individuals with a mental health diagnosis, on average, had higher scores on measures of abuse on the Childhood Trauma Questionnaire138 and neuroticism on the NEO Five-Factor Inventory139. These individuals also showed higher scores on measures of catastrophizing, blame, and rumination, as indexed by the Cognitive Emotional Regulation questionnaire60, and worse performance across a range of computerized measures of cognitive performance10 from the Test My Brain battery.

In recent years, there has been a growing interest in exploring novel data-driven approaches to understanding psychopathological nosology by examining covariation among symptoms and maladaptive behaviors140. To identify latent dimensions of behavior that may be captured by the range of scales and subscale scores, we used Principal Component Analysis (PCA). Standard PCA cannot account for missing data and is biased by highly non-gaussian distributions, such as zero-inflated distributions often seen in clinical measures. Therefore, we imputed missing data using a simulation-based multi-algorithm comparison framework (missCompare141).

Following best practices, prior to imputation, the number of participants and measures was reduced to minimize missingness141. An optimal threshold for removing participants and scales was found by visualizing the proportion of missing data as a function of removing an increasing number of participants and measures and finding inflection points (Fig. S4). This led to removing participants with >20% and variables with >25% missing data. This resulted in 191 participants and 104 measures being entered into the imputation process. Following the missCompare framework, 50 simulated datasets matching multivariate characteristics of the original data were generated, and then missingness patterns from the original data were added to the simulated data. Then, the missing data in each simulated dataset were imputed using a curated list of 16 algorithms141, and finally, the computation time and imputation accuracy of each algorithm were assessed by calculating Root Mean Square Error (RMSE), Mean Absolute Error (MAE) and Kolmogorov-Smirnov (KS) values between the imputed and the simulated data points (see Figure S4). These metrics were compared under three conditions: Missing Completely At Random (MCAR), Missing At Random (MAR), and Missing Not At Random or Non-Ignorable (MNAR). The missForest algorithm142, which is an iterative imputation method based on a random forest algorithm, consistently performed the best compared to all other algorithms across all metrics evaluated and was therefore used to impute the missing values in the observed data (Fig. S4). Finally, post-imputation diagnostics, including visual examination of data distributions and stability of correlation coefficients between measures, before and after imputation, were examined, with both checks showing minimal impact of imputation (Fig. S4). 
Non-Gaussian measures were transformed using an optimal normalizing transformations framework (bestNormalize143), in which normalization efficacy is compared across a suite of possible transformations and evaluated using goodness-of-fit statistics for normality. Highly non-Gaussian measures, such as those with large zero inflation, were binarized.
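The missingness thresholds applied before imputation (participants with >20% and variables with >25% missing data) can be sketched as below. The order of operations, with participants filtered first, is an assumption made for illustration, as are the function name and the toy data; the sketch does not reproduce missCompare or missForest.

```python
import numpy as np


def filter_by_missingness(scores, max_participant_missing=0.20, max_variable_missing=0.25):
    """Drop participants, then variables, whose proportion of missing data is too high."""
    keep_rows = np.isnan(scores).mean(axis=1) <= max_participant_missing
    kept = scores[keep_rows]
    keep_cols = np.isnan(kept).mean(axis=0) <= max_variable_missing
    return kept[:, keep_cols], keep_rows, keep_cols


# Hypothetical data: 6 participants by 6 measures
scores = np.arange(36, dtype=float).reshape(6, 6)
scores[0, :3] = np.nan   # participant 0 is missing 50% of measures
scores[1, 5] = np.nan    # the last measure is missing for two participants
scores[2, 5] = np.nan
scores[3, 0] = np.nan    # a single missing value that stays within both thresholds

filtered, keep_rows, keep_cols = filter_by_missingness(scores)
print(filtered.shape)
```

Here participant 0 is removed first, after which the last measure is missing in 2 of the 5 remaining participants (40%) and is dropped, leaving a 5 by 5 matrix with one missing value for the imputation step.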

PCA of the imputed and transformed behavioral data revealed that the first component, which accounted for 21% of the variance, represented a general functioning and well-being factor (Fig. 7c,d). This component exhibited high absolute loadings on a broad array of measures, and higher scores were associated with lower anxiety and depressive symptoms, neuroticism, impulsivity, stress, forgetfulness, fatigue, avoidance, and higher conscientiousness and self-directedness (Fig. 7d). The second, third, and fourth components were linked to internalizing behaviors, externalizing behaviors, and cognitive functioning, explaining 9%, 8%, and 6% of the variance, respectively (Fig. 7c). Higher scores on the second component were associated with lower childhood emotional and physical trauma, depressive traits and symptoms, general clinical functioning, perceived social support, neuroticism, suicidal ideation, stress, and tension (Fig. 7d). Elevated scores on the third component were related to higher extraversion, fun, novelty and pleasure-seeking, impulsiveness, social and recreational risk-taking, openness to experience and reward dependence, and lower harm avoidance, and autism traits, including rigidity and aloofness (Fig. 7d). Higher scores on the fourth component were associated with slower reaction times on multiple cognitive tasks, slower processing speed, worse abstract reasoning, memory and vocabulary, and higher concerns related to health, ethics, and recreation.
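To show how the variance-explained values and loadings of a PCA arise, the following sketch applies a singular value decomposition to standardized data. The simulated scores, the function name, and the choice to standardize each measure are assumptions for illustration and do not reproduce the reported analysis.

```python
import numpy as np


def pca_variance_explained(scores):
    """Return (proportion of variance per component, component loadings)."""
    standardized = (scores - scores.mean(axis=0)) / scores.std(axis=0, ddof=1)
    _, singular_values, components = np.linalg.svd(standardized, full_matrices=False)
    variances = singular_values ** 2 / (scores.shape[0] - 1)
    return variances / variances.sum(), components


# Simulated data: one latent factor drives four of five measures
rng = np.random.default_rng(0)
latent = rng.normal(size=(200, 1))
scores = np.hstack([
    latent + 0.3 * rng.normal(size=(200, 4)),  # four correlated measures
    rng.normal(size=(200, 1)),                 # one unrelated measure
])
explained, loadings = pca_variance_explained(scores)
print(explained.round(2))
```

In this simulation the first component captures most of the variance because four measures share a common source, which is analogous to a general factor loading broadly across many scales.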

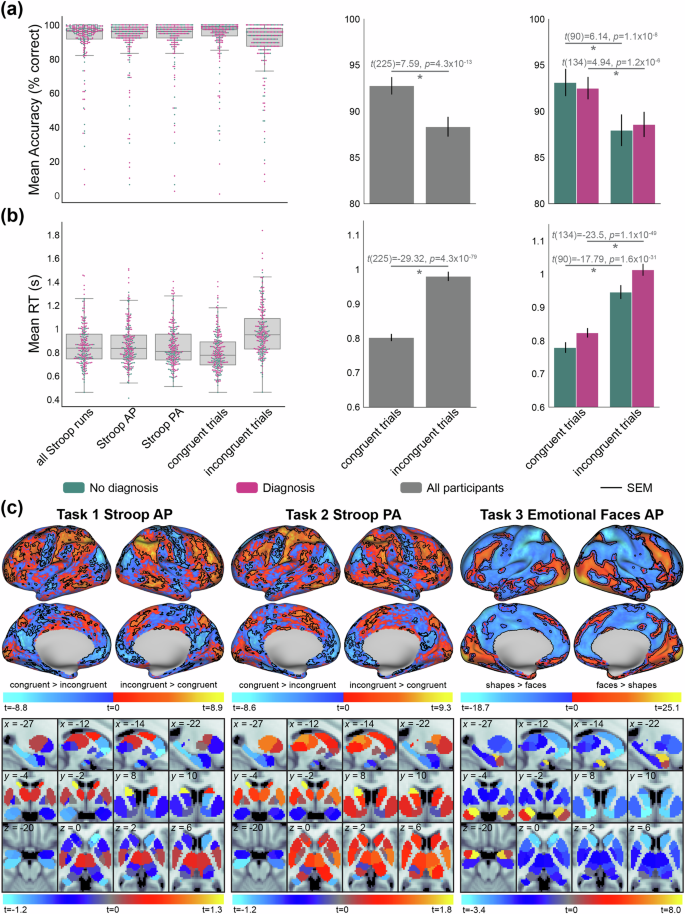

Task-based fMRI activation and behavioral outcomes

Here, we report the performance data and brain activity estimates for the Stroop and Emotional Faces task fMRI paradigms to validate them against known and established findings. The Stroop task is a well-replicated assessment of inhibitory cognitive control14. In the Stroop task, participants must identify colors of printed words that are congruent or incongruent with the given text (see Task fMRI paradigms for further details). Thus, congruent trials are lower conflict and easier to identify, and incongruent trials are higher conflict and more difficult to identify. As expected, participants performed significantly better on congruent than incongruent trials both in terms of accuracy (t(225) = 7.59, p = 4.3 × 10⁻¹³) and reaction time (t(225) = −29.32, p = 4.3 × 10⁻⁷⁹) (Fig. 8a,b). While these “Stroop effects” were statistically significant in both the diagnosis and no diagnosis groups, participants with psychiatric diagnoses exhibited less differentiated accuracy between congruent and incongruent trials (diagnoses: t(134) = 4.94, p = 1.2 × 10⁻⁶; no diagnoses: t(90) = 6.14, p = 1.1 × 10⁻⁸), but more differentiated reaction times between congruent and incongruent trials (diagnoses: t(134) = −23.5, p = 1.1 × 10⁻⁴⁹; no diagnoses: t(90) = −17.79, p = 1.6 × 10⁻³¹). This indicates that participants with diagnoses required more time than those without diagnoses to exert inhibitory cognitive control during incongruent trials (with reference to performance on congruent trials).
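The paired t-statistics reported above compare each participant's congruent and incongruent performance. A minimal sketch of the computation is shown below with hypothetical accuracy values and a hypothetical function name; obtaining the p-value would additionally require the t distribution, which is omitted here.

```python
from math import sqrt
from statistics import mean, stdev


def paired_t(condition_a, condition_b):
    """Paired t-statistic and degrees of freedom for two matched lists of scores."""
    differences = [a - b for a, b in zip(condition_a, condition_b)]
    n = len(differences)
    standard_error = stdev(differences) / sqrt(n)
    return mean(differences) / standard_error, n - 1


# Hypothetical accuracy (proportion correct) for five participants
congruent = [0.97, 0.99, 0.96, 0.98, 0.95]
incongruent = [0.95, 0.94, 0.93, 0.94, 0.94]
t_stat, dof = paired_t(congruent, incongruent)
print(round(t_stat, 2), dof)
```

The degrees of freedom equal the number of participants minus one, so t(225) in the text corresponds to 226 participants contributing to the comparison.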

Functional task-activation and behavioral data from Stroop and Emotional Faces tasks. (a) Stroop task performance quantified via percentage of correct responses. Left: across-participant distribution of accuracy scores for all trials and runs, trials in each AP and PA functional run, and trials in each of the congruent and incongruent conditions. Lines inside boxplots indicate median performance, and dots indicate individual participant scores. For each figure, participants with diagnoses are coded with pink, and without diagnoses coded with turquoise, as applicable. Middle: Paired t-test of congruent versus incongruent accuracies across all participants. Right: The same as the middle panel, but for the diagnosis and no diagnosis groups. SEM: standard error of the mean. (b) Same as panel a, but for the performance metric of reaction time (seconds). (c) GLM-based planned contrasts for functional neuroimaging data for the Stroop (left: AP; middle: PA) and Emotional Faces (right) tasks. Cool and warm color scales show complementary contrasts, overlaid together on one brain schematic. For example, for the two Stroop runs, brain activity that was greater for congruent versus incongruent trials in cool color scale, and incongruent greater than congruent trials in warm color scale. Statistics for cortical vertices are projected onto a surface-based brain schematic (black borders surround vertices whose contrasts passed FDR correction). Subcortical statistics are projected onto a standard MNI (2 mm) volume-based brain schematic, showing average contrast statistics for each of the 32 regions provided by Tian et al.92. Three slices are shown following the visualizations in Tian et al.92, plus an additional slice to highlight the amygdala (x = −27, y = −4, z = −20). The group-level statistical activation maps are shown in all projections.

Next, we performed task GLMs on each run of the task-fMRI data (Fig. 8c). For the Stroop GLM, we used the congruent and incongruent conditions as regressors, and for the Emotional Faces GLM, we used face and shape conditions as regressors. For each participant, task events were time-locked to the onset of each trial and convolved with a canonical hemodynamic response function (HRF144), as well as a parameter for the first temporal derivative of the HRF. In addition, 8 parameters accounting for potential low-frequency signals (i.e., drift; with a cutoff of 0.01 Hz) that used a discrete cosine transform were added, as well as a constant parameter. We examined correct trials only and specifically contrasted the aforementioned conditions of interest (e.g., incongruent > congruent) for each vertex across the whole brain. The resulting individual-level statistical maps were compared using one-sample t-testing (versus 0) across participants to obtain group-level statistical maps (shown in Fig. 8). We used FDR correction to account for multiple comparisons145 at an error rate of α = 0.05. Vertices that passed FDR correction are outlined in black borders in Fig. 8.
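The structure of such a design matrix (task regressors, eight discrete cosine drift regressors, and a constant) can be sketched as follows. This is a simplified illustration, not the analysis code: the task regressors are unconvolved boxcars, the HRF and its temporal derivative are omitted, and the function names, run length, and simulated signal are hypothetical.

```python
import numpy as np


def dct_drift_regressors(n_volumes, n_regressors=8):
    """Low-frequency discrete cosine basis functions (constant term excluded)."""
    t = np.arange(n_volumes)
    return np.column_stack([
        np.cos(np.pi * k * (2 * t + 1) / (2 * n_volumes))
        for k in range(1, n_regressors + 1)
    ])


def fit_contrast(signal, task_regressors, contrast):
    """Fit the GLM by ordinary least squares and return the contrast of task betas."""
    n_volumes = len(signal)
    design = np.column_stack([task_regressors, dct_drift_regressors(n_volumes), np.ones(n_volumes)])
    betas, *_ = np.linalg.lstsq(design, signal, rcond=None)
    weights = np.zeros(design.shape[1])
    weights[:len(contrast)] = contrast   # drift and constant terms get zero weight
    return weights @ betas


# Simulated run: 120 volumes with alternating congruent and incongruent blocks
volumes = np.arange(120)
congruent = ((volumes // 10) % 4 == 0).astype(float)
incongruent = ((volumes // 10) % 4 == 2).astype(float)
drift = dct_drift_regressors(120)[:, 0]
signal = 1.0 * congruent + 2.0 * incongruent + 0.5 * drift + 3.0

# Contrast: incongruent > congruent (task columns are [congruent, incongruent])
effect = fit_contrast(signal, np.column_stack([congruent, incongruent]), [-1.0, 1.0])
print(round(effect, 3))
```

Because the simulated signal is noise-free and lies in the span of the design matrix, the contrast recovers the true difference of 1.0 between incongruent and congruent responses despite the added drift and offset.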

Consistent with prior work, areas of the cingulate gyrus and lateral prefrontal cortex were significantly more responsive to incongruent versus congruent trials during the Stroop task146,147. Additionally, regions distributed across functional systems were more responsive to incongruent trials, including the frontoparietal, dorsal/ventral attention, somatomotor, and visual networks. In the Emotional Faces task, amygdala regions exhibited a robust and selective responsiveness to emotional faces versus shapes. This is consistent with the literature which reports that the Emotional Faces task reliably activates the amygdala in response to affective images of human faces12,148. This report validates that the Stroop and Emotional Faces task fMRI paradigms activate brain regions and systems previously shown to be involved with cognitive control and emotional reactivity, respectively. We performed these assessments across all participants, but we encourage future research with the TCP dataset to examine the extent to which brain activity and network interactions may be differentiable with diagnostic status or co-vary with clinically relevant behaviors. Additionally, the extent that MRI-related metrics scale with task performance is an important methodological question that we encourage future data users to explore.

Usage Notes

Transdiagnostic neuroimaging datasets with a broad range of behavioral measures are necessary to address complex questions regarding the relation between brain and behavior in psychiatry. The TCP dataset release provides a curated collection of neuroimaging, behavioral, cognitive, and personality data from 241 individuals meeting diagnostic criteria for a broad range of disorders, as well as individuals who do not meet these diagnostic thresholds (i.e., healthy controls). The data collection provides both processed and analysis-ready neuroimaging data using HCP-validated processing pipelines, as well as raw and anonymized BIDS-formatted data to allow researchers to implement alternate processing. Raw neuroimaging data, processed regional functional timeseries, and all behavioral measures can be accessed via OpenNeuro9 (https://openneuro.org/datasets/ds005237). Processed neuroimaging derivatives are co-deposited on the National Institute of Mental Health (NIMH) Data Archive (NDA)8 (https://nda.nih.gov/study.html?id=2932). NDA is a collaborative informatics system created by the National Institutes of Health to provide a national resource to support and accelerate research in mental health.

Code availability

The HCP processing pipelines are openly available here: https://github.com/Washington-University/HCPpipelines. All network metric code is available here: https://github.com/aestrivex/bctpy (Python) and here: https://sites.google.com/site/bctnet/ (MATLAB). All other code used for post-processing, FC estimation, and quality assurance analyses is available here: https://github.com/HolmesLab/TransdiagnosticConnectomeProject.

References

Holmes, A. J. & Patrick, L. M. The Myth of Optimality in Clinical Neuroscience. Trends in Cognitive Sciences 22, https://doi.org/10.1016/j.tics.2017.12.006 (2018).

Hyman, S. E. The Diagnosis of Mental Disorders: The Problem of Reification. Annual Review of Clinical Psychology 6, https://doi.org/10.1146/annurev.clinpsy.3.022806.091532 (2010).

Romero, C. et al. Exploring the genetic overlap between twelve psychiatric disorders. Nature Genetics 54, https://doi.org/10.1038/s41588-022-01245-2 (2022).

Bycroft, C. et al. The UK Biobank resource with deep phenotyping and genomic data. Nature 562, https://doi.org/10.1038/s41586-018-0579-z (2018).

Poldrack, R. A. et al. A phenome-wide examination of neural and cognitive function. Scientific Data 3, https://doi.org/10.1038/sdata.2016.110 (2016).

Van Essen, D. C. et al. The WU-Minn Human Connectome Project: An Overview. NeuroImage 80, https://doi.org/10.1016/j.neuroimage.2013.05.041 (2013).

National Institute of Mental Health. Mental Illness, https://www.nimh.nih.gov/health/statistics/mental-illness (2023).

Cocuzza, C. V. et al. The Transdiagnostic Connectome Project dataset: a richly phenotyped open resource for advancing the study of brain-behavior relationships in psychiatry (National Institutes of Mental Health Data Archive (NDA) Collection 3552, Study #2932). https://nda.nih.gov/study.html?id=2932 (2024).

Chopra, S. et al. OpenNeuro Dataset ds005237 (The Transdiagnostic Connectome Project), https://openneuro.org/datasets/ds005237 (2024).

Passell, E. et al. Digital Cognitive Assessment: Results from the TestMyBrain NIMH Research Domain Criteria (RDoC) Field Test Battery Report. PsyArXiv https://doi.org/10.31234/osf.io/dcszr (2019).

Van Essen, D. C. et al. The Human Connectome Project: A data acquisition perspective. NeuroImage 62, https://doi.org/10.1016/j.neuroimage.2012.02.018 (2012).

Hariri, A. R., Tessitore, A., Mattay, V. S., Fera, F. & Weinberger, D. R. The Amygdala Response to Emotional Stimuli: A Comparison of Faces and Scenes. NeuroImage 17, https://doi.org/10.1006/nimg.2002.1179 (2002).

Peirce, J. W. PsychoPy—Psychophysics software in Python. Journal of Neuroscience Methods 162, https://doi.org/10.1016/j.jneumeth.2006.11.017 (2007).

Stroop, J. R. Studies of interference in serial verbal reactions. Journal of Experimental Psychology 18, 643–662, https://doi.org/10.1037/h0054651 (1935).

Comalli, P. E., Wapner, S. & Werner, H. Interference effects of Stroop Color-Word Test in childhood, adulthood, and aging. The Journal of Genetic Psychology 100, 47–53 (1962).

Klein, G. S. Semantic Power Measured through the Interference of Words with Color-Naming. The American Journal of Psychology 77, https://doi.org/10.2307/1420768 (1964).

Jensen, A. R. & Rohwer, W. D. Jr. The stroop color-word test: A review. Acta Psychologica 25, https://doi.org/10.1016/0001-6918(66)90004-7 (1966).

MacLeod, C. M. Half a century of research on the Stroop effect: An integrative review. Psychological Bulletin 109, 163–203, https://doi.org/10.1037/0033-2909.109.2.163 (1991).

Scarpina, F. & Tagini, S. The Stroop Color and Word Test. Frontiers in Psychology 8, https://doi.org/10.3389/fpsyg.2017.00557 (2017).

Cohen, J. D., Dunbar, K. & McClelland, J. L. On the control of automatic processes: A parallel distributed processing account of the Stroop effect. Psychological Review 97, 332–361, https://doi.org/10.1037/0033-295X.97.3.332 (1990).

Wright, B. C. What Stroop tasks can tell us about selective attention from childhood to adulthood. British Journal of Psychology 108, https://doi.org/10.1111/bjop.12230 (2017).

Algom, D. & Chajut, E. Reclaiming the Stroop Effect Back From Control to Input-Driven Attention and Perception. Frontiers in Psychology 10, https://doi.org/10.3389/fpsyg.2019.01683 (2019).

Periáñez, J. A., Lubrini, G., García-Gutiérrez, A. & Ríos-Lago, M. Construct Validity of the Stroop Color-Word Test: Influence of Speed of Visual Search, Verbal Fluency, Working Memory, Cognitive Flexibility, and Conflict Monitoring. Archives of Clinical Neuropsychology 36, https://doi.org/10.1093/arclin/acaa034 (2021).

Hepp, H. H., Maier, S., Hermle, L. & Spitzer, M. The Stroop effect in schizophrenic patients. Schizophrenia Research 22, https://doi.org/10.1016/S0920-9964(96)00080-1 (1996).

Henik, A. & Salo, R. Schizophrenia and the Stroop Effect. Behavioral and Cognitive Neuroscience Reviews 3, https://doi.org/10.1177/1534582304263252 (2004).

Barch, D. M., Braver, T. S., Carter, C. S., Poldrack, R. A. & Robbins, T. W. CNTRICS Final Task Selection: Executive Control. Schizophrenia Bulletin 35, https://doi.org/10.1093/schbul/sbn154 (2009).

Lubrini, G. et al. Clinical Spanish Norms of the Stroop Test for Traumatic Brain Injury and Schizophrenia. The Spanish Journal of Psychology 17, https://doi.org/10.1017/sjp.2014.90 (2014).

Young, S., Bramham, J., Tyson, C. & Morris, R. Inhibitory dysfunction on the Stroop in adults diagnosed with attention deficit hyperactivity disorder. Personality and Individual Differences 41, https://doi.org/10.1016/j.paid.2006.01.010 (2006).

Lansbergen, M. M., Kenemans, J. L. & van Engeland, H. Stroop interference and attention-deficit/hyperactivity disorder: A review and meta-analysis. Neuropsychology 21, https://doi.org/10.1037/0894-4105.21.2.251 (2007).

Vakil, E., Mass, M. & Schiff, R. Eye Movement Performance on the Stroop Test in Adults With ADHD. Journal of Attention Disorders 23, https://doi.org/10.1177/1087054716642904 (2016).

Arán Filippetti, V., Richaud, M. C., Krumm, G. & Raimondi, W. Cognitive and socioeconomic predictors of Stroop performance in children and developmental patterns according to socioeconomic status and ADHD subtype. Psychology & Neuroscience 14, 183–206, https://doi.org/10.1037/pne0000224 (2021).

Wagner, G. et al. Cortical Inefficiency in Patients with Unipolar Depression: An Event-Related fMRI Study with the Stroop Task. Biological Psychiatry 59, https://doi.org/10.1016/j.biopsych.2005.10.025 (2006).

Mitterschiffthaler, M. T. et al. Neural basis of the emotional Stroop interference effect in major depression. Psychological Medicine 38, https://doi.org/10.1017/S0033291707001523 (2008).

Epp, A. M., Dobson, K. S., Dozois, D. J. A. & Frewen, P. A. A systematic meta-analysis of the Stroop task in depression. Clinical Psychology Review 32, https://doi.org/10.1016/j.cpr.2012.02.005 (2012).

Carpenter, K. M., Schreiber, E., Church, S. & McDowell, D. Drug Stroop performance: relationships with primary substance of use and treatment outcome in a drug-dependent outpatient sample. Addictive Behaviors 31, https://doi.org/10.1016/j.addbeh.2005.04.012 (2006).

Smith, D. G. & Ersche, K. D. Using a drug-word Stroop task to differentiate recreational from dependent drug use. CNS Spectrums 19, https://doi.org/10.1017/S1092852914000133 (2014).

DeVito, E. E., Kiluk, B. D., Nich, C., Mouratidis, M. & Carroll, K. M. Drug Stroop: mechanisms of response to computerized cognitive behavioral therapy for cocaine dependence in a randomized clinical trial. Drug and Alcohol Dependence 183, https://doi.org/10.1016/j.drugalcdep.2017.10.022 (2017).

McTeague, L. M., Goodkind, M. S. & Etkin, A. Transdiagnostic impairment of cognitive control in mental illness. Journal of Psychiatric Research 83, https://doi.org/10.1016/j.jpsychires.2016.08.001 (2016).

Barch, D. M. The Neural Correlates of Transdiagnostic Dimensions of Psychopathology. The American Journal of Psychiatry 174, https://doi.org/10.1176/appi.ajp.2017.17030289 (2017).

Smucny, J. et al. Levels of Cognitive Control: A Functional Magnetic Resonance Imaging-Based Test of an RDoC Domain Across Bipolar Disorder and Schizophrenia. Neuropsychopharmacology 43, https://doi.org/10.1038/npp.2017.233 (2017).

Kubera, K. M., Hirjak, D., Wolf, N. D. & Wolf, R. C. Cognitive control in the research domain criteria system: clinical implications for auditory verbal hallucinations. Der Nervenarzt 92, https://doi.org/10.1007/s00115-021-01175-0 (2021).

Tottenham, N. et al. The NimStim set of facial expressions: Judgments from untrained research participants. Psychiatry Research 168, https://doi.org/10.1016/j.psychres.2008.05.006 (2009).

Kleinhans, N. M. et al. Reduced Neural Habituation in the Amygdala and Social Impairments in Autism Spectrum Disorders. American Journal of Psychiatry 166, https://doi.org/10.1176/appi.ajp.2008.07101681 (2009).

Kamp-Becker, I. et al. Study protocol of the ASD-Net, the German research consortium for the study of Autism Spectrum Disorder across the lifespan: from a better etiological understanding, through valid diagnosis, to more effective health care. BMC Psychiatry 17, https://doi.org/10.1186/s12888-017-1362-7 (2017).

Phan, K. L., Fitzgerald, D. A., Nathan, P. J. & Tancer, M. E. Association between Amygdala Hyperactivity to Harsh Faces and Severity of Social Anxiety in Generalized Social Phobia. Biological Psychiatry 59, https://doi.org/10.1016/j.biopsych.2005.08.012 (2006).

Shin, L. M. & Liberzon, I. The Neurocircuitry of Fear, Stress, and Anxiety Disorders. Neuropsychopharmacology 35, https://doi.org/10.1038/npp.2009.83 (2009).

Pitman, R. K. et al. Biological studies of post-traumatic stress disorder. Nature Reviews Neuroscience 13, https://doi.org/10.1038/nrn3339 (2012).

Kanske, P., Schönfelder, S., Forneck, J. & Wessa, M. Impaired regulation of emotion: neural correlates of reappraisal and distraction in bipolar disorder and unaffected relatives. Translational Psychiatry 5, https://doi.org/10.1038/tp.2014.137 (2015).

Contreras-Rodríguez, O. et al. Disrupted neural processing of emotional faces in psychopathy. Social Cognitive and Affective Neuroscience 9, https://doi.org/10.1093/scan/nst014 (2014).

Stein, M. B., Goldin, P. R., Sareen, J., Eyler Zorrilla, L. T. & Brown, G. G. Increased Amygdala Activation to Angry and Contemptuous Faces in Generalized Social Phobia. Archives of General Psychiatry 59, https://doi.org/10.1001/archpsyc.59.11.1027 (2002).

Davidson, J., Turnbull, C. D., Strickland, R., Miller, R. & Graves, K. The Montgomery‐Åsberg Depression Scale: reliability and validity. Acta Psychiatrica Scandinavica 73, https://doi.org/10.1111/j.1600-0447.1986.tb02723.x (1986).

Lovibond, P. F. & Lovibond, S. H. The structure of negative emotional states: Comparison of the Depression Anxiety Stress Scales (DASS) with the Beck Depression and Anxiety Inventories. Behaviour Research and Therapy 33, https://doi.org/10.1016/0005-7967(94)00075-U (1995).

Kay, S. R., Fiszbein, A. & Opler, L. A. The Positive and Negative Syndrome Scale (PANSS) for Schizophrenia. Schizophrenia Bulletin 13, https://doi.org/10.1093/schbul/13.2.261 (1987).

Young, R. C., Biggs, J. T., Ziegler, V. E. & Meyer, D. A. A Rating Scale for Mania: Reliability, Validity and Sensitivity. The British Journal of Psychiatry 133, https://doi.org/10.1192/bjp.133.5.429 (1978).

Cloninger, C. R. A Systematic Method for Clinical Description and Classification of Personality Variants. Archives of General Psychiatry 44, https://doi.org/10.1001/archpsyc.1987.01800180093014 (1987).

Gard, D. E., Germans Gard, M., Kring, A. M. & John, O. P. Anticipatory and consummatory components of the experience of pleasure: A scale development study. Journal of Research in Personality 40, https://doi.org/10.1016/j.jrp.2005.11.001 (2006).

Brennan, K. A., Clark, C. L. & Shaver, P. R. in Attachment theory and close relationships (eds J. A. Simpson & W. S. Rholes) 46–76 (The Guilford Press, 1998).

Cyders, M. A. et al. Integration of impulsivity and positive mood to predict risky behavior: Development and validation of a measure of positive urgency. Psychological Assessment 19, https://doi.org/10.1037/1040-3590.19.1.107 (2007).

Shipley, W. C. Shipley Institute of Living Scale. The Journal of Psychology: Interdisciplinary and Applied (1986).

Garnefski, N. & Kraaij, V. The Cognitive Emotion Regulation Questionnaire. European Journal of Psychological Assessment 23, https://doi.org/10.1027/1015-5759.23.3.141 (2007).

Broadbent, D. E., Cooper, P. F., FitzGerald, P. & Parkes, K. R. The Cognitive Failures Questionnaire (CFQ) and its correlates. British Journal of Clinical Psychology 21, https://doi.org/10.1111/j.2044-8260.1982.tb01421.x (1982).

Frederick, S. Cognitive Reflection and Decision Making. Journal of Economic Perspectives 19, https://doi.org/10.1257/089533005775196732 (2005).

Li, X., Morgan, P. S., Ashburner, J., Smith, J. & Rorden, C. The first step for neuroimaging data analysis: DICOM to NIfTI conversion. Journal of Neuroscience Methods 264, https://doi.org/10.1016/j.jneumeth.2016.03.001 (2016).

Gorgolewski, K. J. et al. The brain imaging data structure, a format for organizing and describing outputs of neuroimaging experiments. Scientific Data 3, https://doi.org/10.1038/sdata.2016.44 (2016).

Muschelli, J., Sweeney, E., Lindquist, M. & Crainiceanu, C. fslr: Connecting the FSL Software with R. The R Journal 7, 163–175 (2015).

Jenkinson, M., Beckmann, C. F., Behrens, T. E., Woolrich, M. W. & Smith, S. M. FSL. NeuroImage 62, https://doi.org/10.1016/j.neuroimage.2011.09.015 (2012).

Gulban, O. F. et al. poldracklab/pydeface: PyDeface v2.0.2. https://doi.org/10.5281/zenodo.6856482 (2022).

Rubinov, M. & Sporns, O. Complex network measures of brain connectivity: Uses and interpretations. NeuroImage 52, https://doi.org/10.1016/j.neuroimage.2009.10.003 (2010).

Glasser, M. F. et al. The minimal preprocessing pipelines for the Human Connectome Project. NeuroImage 80, https://doi.org/10.1016/j.neuroimage.2013.04.127 (2013).

Glasser, M. F. et al. A multi-modal parcellation of human cerebral cortex. Nature 536, https://doi.org/10.1038/nature18933 (2016).

Fischl, B. FreeSurfer. NeuroImage 62, https://doi.org/10.1016/j.neuroimage.2012.01.021 (2012).

Moeller, S. et al. Multiband multislice GE-EPI at 7 tesla, with 16-fold acceleration using partial parallel imaging with application to high spatial and temporal whole-brain fMRI. Magnetic Resonance in Medicine 63, https://doi.org/10.1002/mrm.22361 (2010).

Risk, B. B. et al. Which multiband factor should you choose for your resting-state fMRI study? NeuroImage 234, https://doi.org/10.1016/j.neuroimage.2021.117965 (2021).

Van Essen, D. C., Glasser, M. F., Dierker, D. L., Harwell, J. & Coalson, T. Parcellations and Hemispheric Asymmetries of Human Cerebral Cortex Analyzed on Surface-Based Atlases. Cerebral Cortex 22, https://doi.org/10.1093/cercor/bhr291 (2012).

Andersson, J. L. R., Skare, S. & Ashburner, J. How to correct susceptibility distortions in spin-echo echo-planar images: application to diffusion tensor imaging. NeuroImage 20, https://doi.org/10.1016/S1053-8119(03)00336-7 (2003).

Smith, S. M. et al. Advances in functional and structural MR image analysis and implementation as FSL. NeuroImage 23, Suppl 1, https://doi.org/10.1016/j.neuroimage.2004.07.051 (2004).

Salimi-Khorshidi, G. et al. Automatic denoising of functional MRI data: Combining independent component analysis and hierarchical fusion of classifiers. NeuroImage 90, https://doi.org/10.1016/j.neuroimage.2013.11.046 (2014).

Griffanti, L. et al. ICA-based artefact removal and accelerated fMRI acquisition for improved resting state network imaging. NeuroImage 95, https://doi.org/10.1016/j.neuroimage.2014.03.034 (2014).

Aguirre, G. K., Zarahn, E. & D’Esposito, M. The inferential impact of global signal covariates in functional neuroimaging analyses. NeuroImage 8, https://doi.org/10.1006/nimg.1998.0367 (1998).

Macey, P. M., Macey, K. E., Kumar, R. & Harper, R. M. A method for removal of global effects from fMRI time series. NeuroImage 22, https://doi.org/10.1016/j.neuroimage.2003.12.042 (2004).

Murphy, K. & Fox, M. D. Towards a consensus regarding global signal regression for resting state functional connectivity MRI. NeuroImage 154, https://doi.org/10.1016/j.neuroimage.2016.11.052 (2017).

Liu, T. T., Nalci, A. & Falahpour, M. The global signal in fMRI: Nuisance or Information? NeuroImage 150, https://doi.org/10.1016/j.neuroimage.2017.02.036 (2017).

Power, J. D. et al. Methods to detect, characterize, and remove motion artifact in resting state fMRI. NeuroImage 84, https://doi.org/10.1016/j.neuroimage.2013.08.048 (2014).

Parkes, L., Fulcher, B., Yücel, M. & Fornito, A. An evaluation of the efficacy, reliability, and sensitivity of motion correction strategies for resting-state functional MRI. NeuroImage 171, https://doi.org/10.1016/j.neuroimage.2017.12.073 (2018).